How to Optimize Content for AI Search: ChatGPT, Gemini, and Perplexity

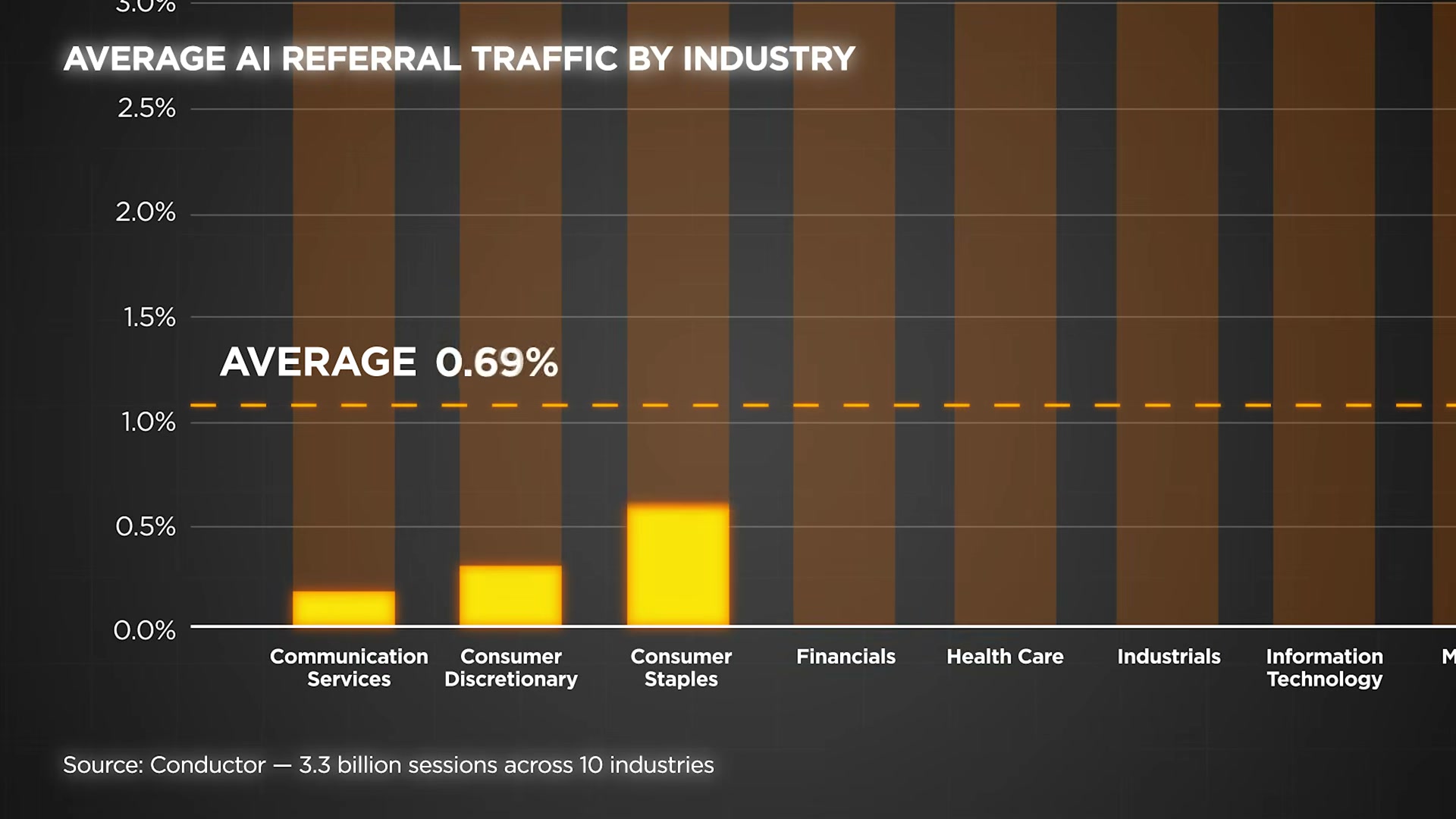

AI search is redistributing traffic in ways traditional SEO metrics won’t catch. After completing this tutorial, you’ll understand how retrieval-augmented generation (RAG) determines which pages get cited — and which get ignored — and you’ll have a concrete checklist to make your content retrievable across ChatGPT, Gemini, and Perplexity. NP Digital tracked this shift across 22 brands and watched GEO-driven leads grow from 3.1% to 7.4% in a single year.

- Understand that AI search runs on a two-step RAG process. When a user submits a query, the system first retrieves a small set of pages it considers relevant and trustworthy, then generates a cited answer from those pages. Getting retrieved is the entire game — content that isn’t in that initial retrieval set never appears in the answer, regardless of its quality.

- Recognize that AI retrieval evaluates four signals: relevancy (topical association, not just keywords), authority (brand mentions, backlinks, E-E-A-T signals), structure (clean HTML the AI can parse top to bottom), and freshness (AI-cited content skews significantly newer than traditional search results). All four must be present — weakness in any one reduces your retrieval probability.

- Go to

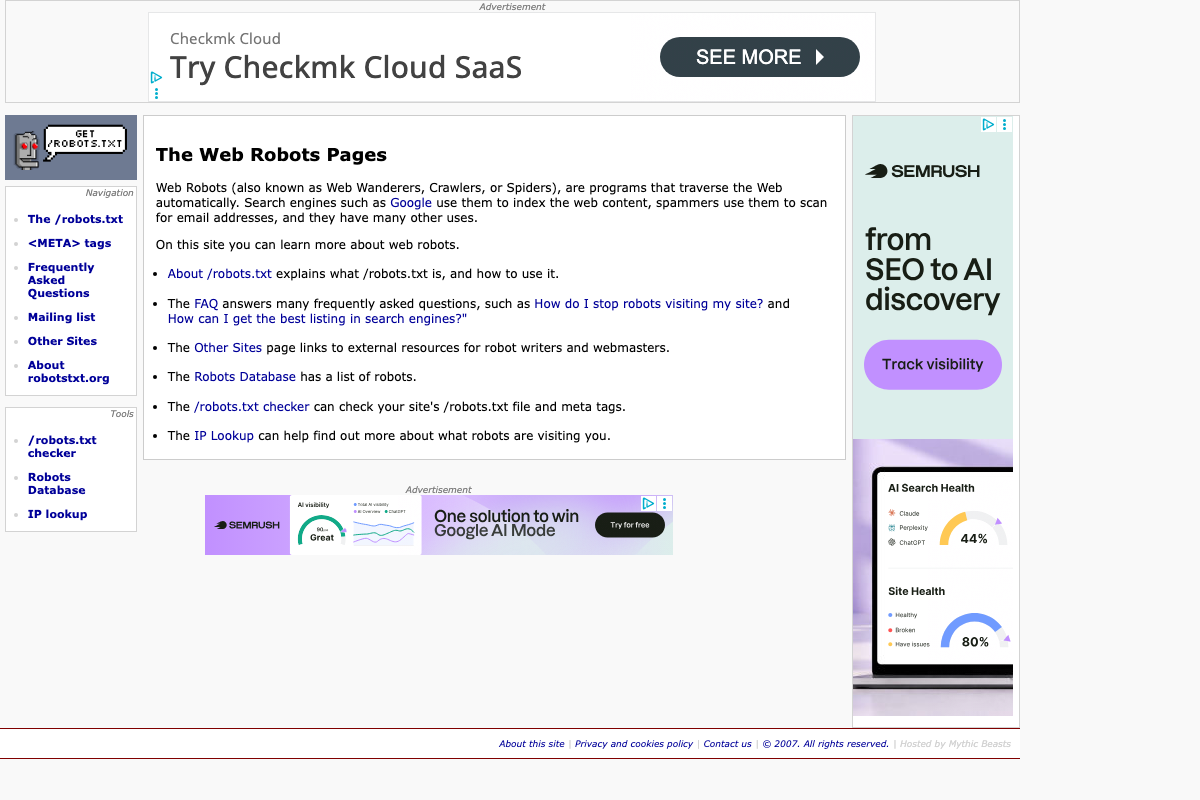

yourdomain.com/robots.txtright now and confirm thatGPTBotandPerplexityBotare not blocked. An Ahrefs study of 140 million websites found nearly 6% were accidentally blocking AI crawlers. No other optimization matters if the bots cannot read your pages.

- Build branded mentions on credible, topically relevant sites through PR campaigns, podcast appearances, outreach, and product reviews. Branded mentions correlate more strongly with AI citation visibility than backlinks or domain rating — because language models learn brand-to-category associations by reading the web at scale. The more your brand name appears alongside your core topic across authoritative sources, the more confidently AI systems recommend you.

- Build deep topic clusters rather than broad generalist content. Create multiple interconnected pieces that fully cover a narrow subject area from every angle. AI systems treat comprehensive coverage of a specific topic as an authority signal: a site that owns one subject thoroughly outranks a site that lightly covers many.

-

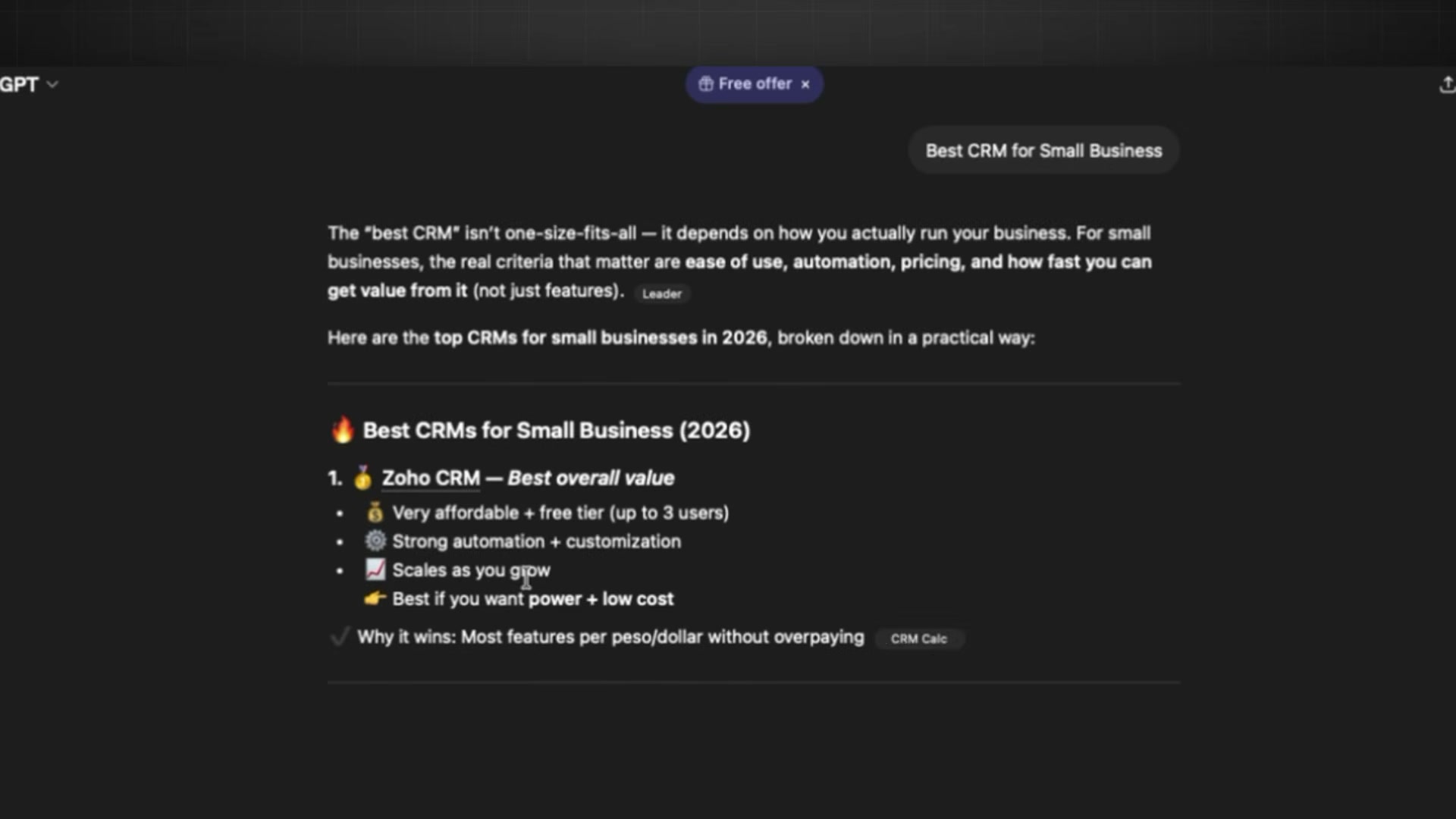

Structure every page with clear H-tags, numbered lists, bullet points, and FAQ sections. Google’s AI reads HTML using a tree-walking algorithm from top to bottom — disorganized pages get skipped. Structure is not a style preference; it is the mechanism by which AI extracts your answer.

- Lead with your most important insight at the top of the page. RAG systems chunk content paragraph by paragraph and trim what falls outside the relevant window, so an insight buried in paragraph 15 of a 2,000-word article is functionally invisible to the retrieval model.

- Refresh high-performing content on a regular cadence: add new statistics, update examples, and revise the publish date. AI systems strongly prefer fresh content, and a targeted refresh can re-enter a page into the citation pool after it has been deprioritized.

- Optimize for each AI platform separately, not just for Google. ChatGPT holds 78% of LLM referral traffic; Gemini has grown 5× since 2024 but captures only 12%. Each platform has distinct source preferences, and the overlap between Google’s top results and ChatGPT citations has dropped from 76% to 38%.

Warning: the GPT model version designations cited in the video (GPT-5.3, GPT-5.4 Thinking Premium) do not match current official OpenAI model naming conventions. Check model names against OpenAI’s official documentation before repeating them.

- Track GEO-driven leads as a separate metric from traditional organic traffic. Mixing AI referral conversions into your standard SEO reporting makes it impossible to measure whether your retrieval optimization is working — or compounding against you.

How does this compare to the official docs?

The video makes a clear, data-backed case for GEO strategy, but several of its specific claims — model version naming, citation rate benchmarks, and robots.txt crawl behavior — are worth checking against current platform documentation before building a client strategy around them.

Here’s What the Official Docs Show

The video builds a solid GEO framework that holds up well where documentation exists. This section adds what the captured screenshots show and flags where platform-level verification wasn’t possible. Two items in Step 9 need correcting before you build any client strategy around them.

Step 1 — The RAG retrieval process

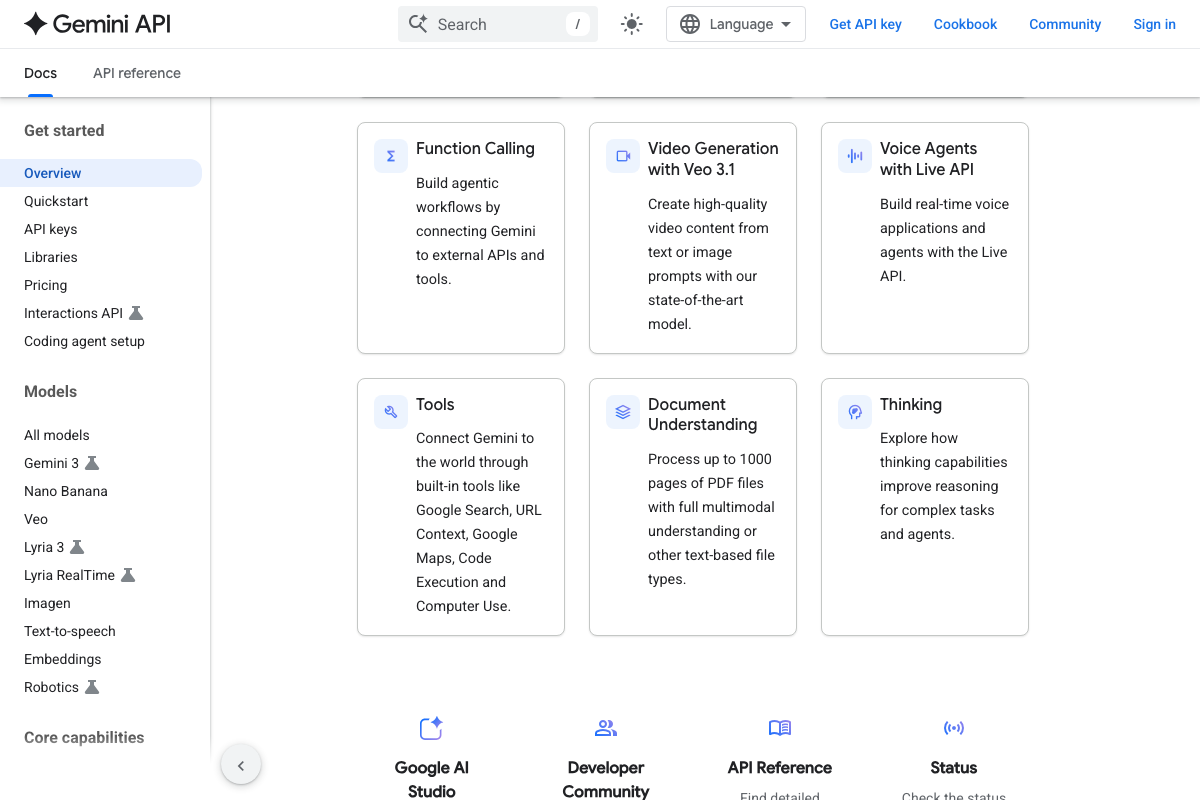

The Gemini API documentation confirms that Google Search and URL Context are explicit built-in retrieval tools, which supports the video’s RAG framing at the platform level. What those docs do not cover: how the consumer-facing Gemini app or AI Overviews selects which URLs to retrieve — a distinct, undocumented layer.

No official documentation was found for how the consumer-facing products select pages to retrieve. For that part, proceed using the video’s approach and verify independently.
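The two-step retrieve-then-generate flow described in Step 1 can be illustrated with a toy sketch. It assumes a naive term-overlap score and a hypothetical three-page corpus on example.com; no AI search product documents its ranking this way, so treat it only as a picture of why a page outside the retrieval set cannot be cited.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query: str, pages: dict[str, str], k: int = 2) -> list[str]:
    """Step 1: score pages by toy term overlap and keep the top k with a nonzero score."""
    query_terms = set(tokenize(query))
    scores = {}
    for url, text in pages.items():
        counts = Counter(tokenize(text))
        scores[url] = sum(counts[term] for term in query_terms)
    ranked = sorted(pages, key=lambda url: scores[url], reverse=True)
    return [url for url in ranked[:k] if scores[url] > 0]


def generate(query: str, retrieved: list[str]) -> dict:
    """Step 2: stand-in for answer generation; only retrieved pages can be cited."""
    return {"query": query, "citations": list(retrieved)}


# Hypothetical corpus, for illustration only.
PAGES = {
    "example.com/robots-guide": "How to audit robots.txt so AI crawlers can read your pages. "
                                "Robots.txt rules for crawlers.",
    "example.com/brand-story": "Our brand story and company history.",
    "example.com/crawler-faq": "FAQ: which AI crawlers respect robots.txt?",
}

query = "robots.txt AI crawlers"
cited = retrieve(query, PAGES, k=2)
answer = generate(query, cited)
print(answer["citations"])
```

In this sketch the brand-story page never reaches `generate`, however well written it is, because it scored zero in the retrieval step.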

Step 2 — The four retrieval signals: relevancy, authority, structure, freshness

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Step 3 — Audit your robots.txt for AI crawler access

The video’s approach here matches the current docs exactly. robotstxt.org confirms the robots exclusion protocol and links directly to a /robots.txt checker consistent with the tutorial’s audit recommendation. One addition worth noting: GPTBot and PerplexityBot are not named in the visible page content — confirm those specific user-agent strings against OpenAI’s and Perplexity’s own developer documentation before editing your rules.
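The audit can also be scripted. The sketch below uses Python's standard-library `urllib.robotparser` on an inline sample file; the sample rules and the `blocked_crawlers` helper are hypothetical, and the user-agent tokens are the ones named in the video, which should still be confirmed against each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens as named in the video; confirm them against vendor docs.
AI_CRAWLERS = ["GPTBot", "PerplexityBot"]

# Hypothetical robots.txt that blocks GPTBot, possibly by accident.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def blocked_crawlers(robots_txt: str, page_url: str, agents=AI_CRAWLERS) -> list[str]:
    """Return the crawlers that the given robots.txt forbids from fetching page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, page_url)]


blocked = blocked_crawlers(SAMPLE_ROBOTS_TXT, "https://yourdomain.com/blog/post")
print(blocked)
```

To check a live site instead of a pasted file, `RobotFileParser` also provides `set_url()` and `read()`, which fetch the file over the network before `can_fetch()` is called.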

Step 4 — Build branded mentions on credible, topically relevant sites

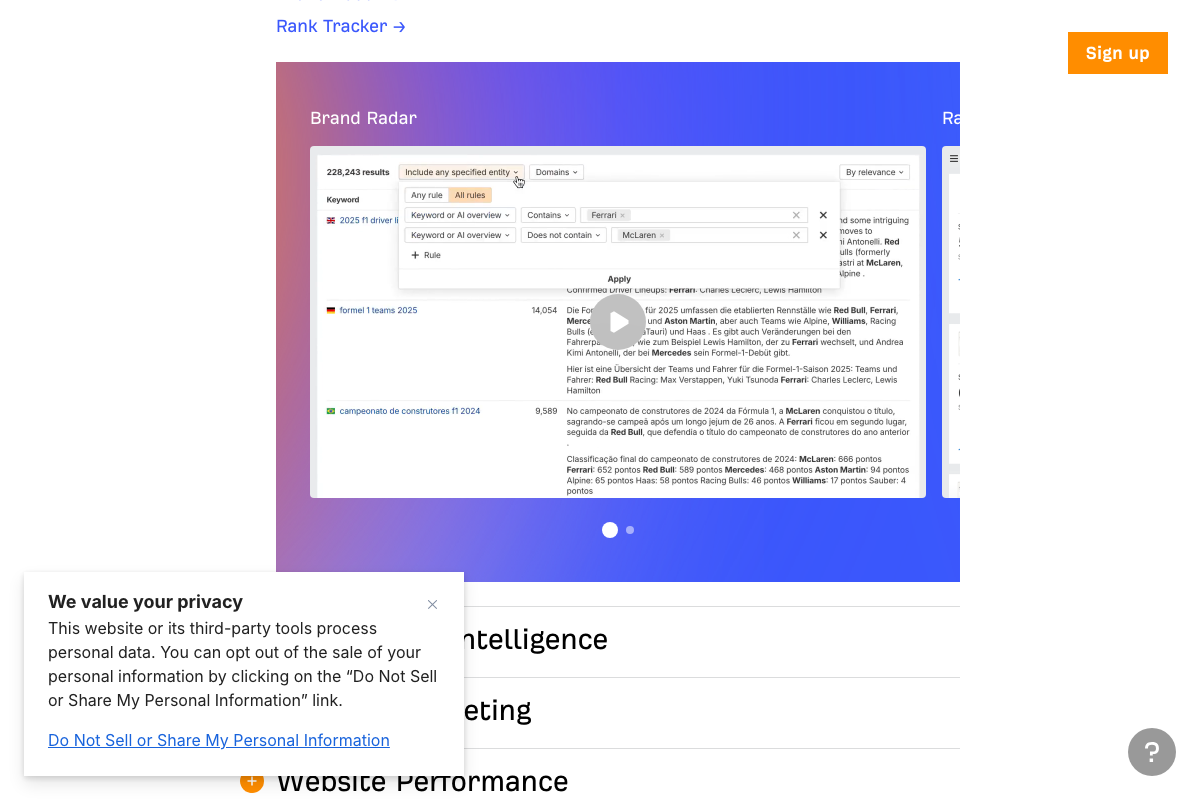

The video’s approach here matches the current docs exactly. NP Digital’s homepage lists GEO/AEO as a named, purchasable service category alongside traditional SEO — confirming the discipline is commercially established. Ahrefs Brand Radar explicitly tracks “brand mentions, citations, and chatbots” as a named product capability, giving you direct tooling to measure what the video describes.

Step 5 — Build deep topic clusters

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Step 6 — Structure pages with H-tags, lists, and FAQ sections

No official documentation was found for this step — proceed using the video’s approach and verify independently.
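One way to verify structure independently is to lint a page's heading outline. The sketch below walks the HTML top to bottom with Python's standard `html.parser`; the two checks (exactly one h1, no skipped heading levels) are assumed conventions for a tidy outline, not documented behavior of any AI crawler, and the sample markup is hypothetical.

```python
from html.parser import HTMLParser


class OutlineParser(HTMLParser):
    """Collect (level, text) pairs for h1-h6 headings in document order."""

    def __init__(self):
        super().__init__()
        self.outline = []
        self._level = None  # heading level currently open, if any
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._buffer = []

    def handle_data(self, data):
        if self._level is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.outline.append((self._level, "".join(self._buffer).strip()))
            self._level = None


def heading_issues(html: str):
    """Return the heading outline plus a list of structural warnings."""
    parser = OutlineParser()
    parser.feed(html)
    issues = []
    h1_count = sum(1 for level, _ in parser.outline if level == 1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    previous = 0
    for level, text in parser.outline:
        if previous and level > previous + 1:
            issues.append(f"h{previous} jumps to h{level} at '{text}'")
        previous = level
    return parser.outline, issues


# Hypothetical page fragment with a skipped heading level.
SAMPLE_HTML = """
<h1>AI search checklist</h1>
<p>Intro</p>
<h2>Audit robots.txt</h2>
<h4>FAQ</h4>
"""

outline, issues = heading_issues(SAMPLE_HTML)
print(outline)
print(issues)
```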

Step 7 — Lead with your most important insight

No official documentation was found for this step — proceed using the video’s approach and verify independently.
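The video's claim about paragraph-level chunking can be pictured with a small sketch. It assumes chunks are split on blank lines and that only the first `window` chunks survive trimming; both are illustrative assumptions, since no platform publishes its chunking or trimming rules, and the article text is hypothetical.

```python
# Hypothetical article with the key insight buried in the last paragraph.
ARTICLE = """Intro paragraph with background on the topic.

Some history and context.

Key insight: unblock GPTBot in robots.txt first."""


def chunk_paragraphs(text: str) -> list[str]:
    """Split text into paragraph chunks on blank lines."""
    return [p.strip() for p in text.split("\n\n") if p.strip()]


def chunk_position(text: str, phrase: str):
    """Return the 1-based index of the first chunk containing phrase, or None."""
    for index, paragraph in enumerate(chunk_paragraphs(text), start=1):
        if phrase.lower() in paragraph.lower():
            return index
    return None


def survives_trim(text: str, phrase: str, window: int = 2) -> bool:
    """True if phrase appears within the first `window` chunks (window is an assumed size)."""
    position = chunk_position(text, phrase)
    return position is not None and position <= window


buried = chunk_position(ARTICLE, "unblock GPTBot")

# Move the last paragraph to the top to lead with the insight.
paragraphs = chunk_paragraphs(ARTICLE)
REORDERED = "\n\n".join([paragraphs[-1]] + paragraphs[:-1])
leading = chunk_position(REORDERED, "unblock GPTBot")

print(buried, survives_trim(ARTICLE, "unblock GPTBot"))
print(leading, survives_trim(REORDERED, "unblock GPTBot"))
```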

Step 8 — Refresh high-performing content regularly

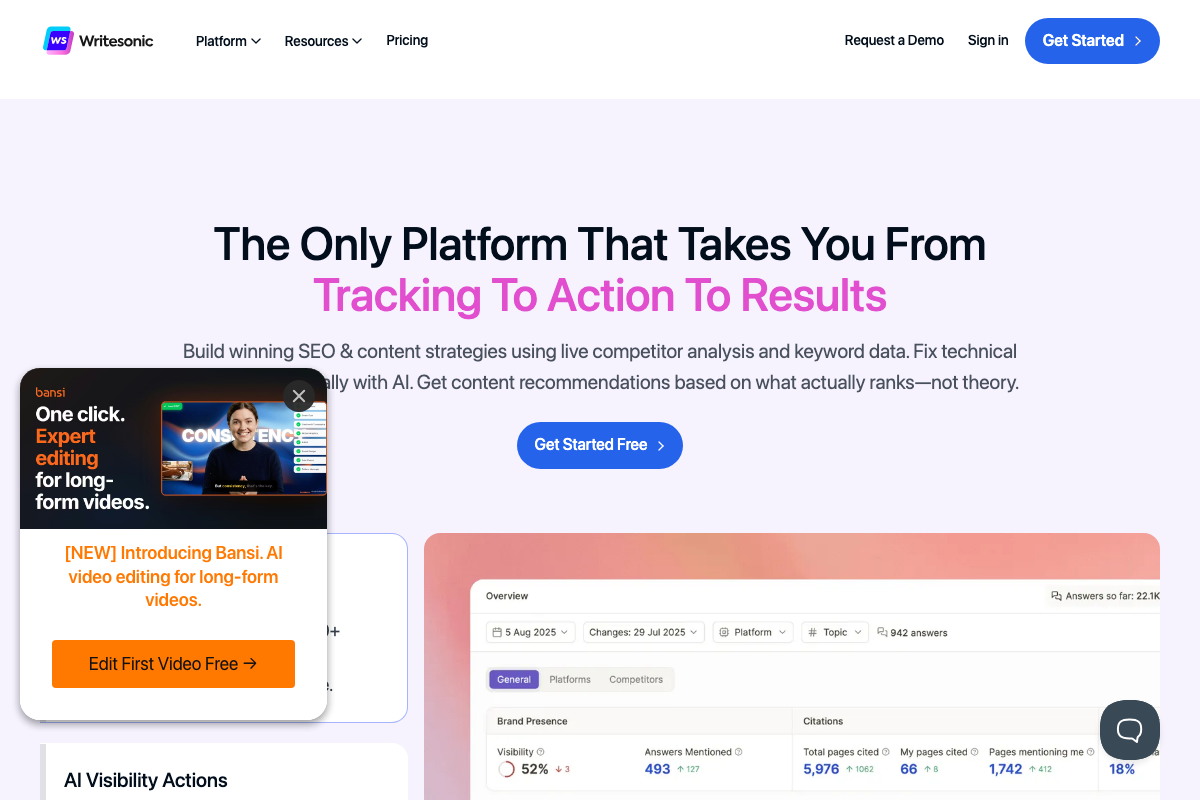

Writesonic explicitly lists “refresh existing pages” as one of three named action types — alongside content creation and competitor outreach — confirming this is an established, tool-validated tactic. No official AI platform documentation addresses freshness signals directly in any captured screenshot.

No official documentation was found for this step — proceed using the video’s approach and verify independently.
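A refresh cadence is easier to keep if stale pages are flagged automatically. The sketch below assumes you can export each page's last-updated date from your CMS; the 180-day threshold, the page paths, and the dates are hypothetical, and the right cadence depends on your topic.

```python
from datetime import date


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 180) -> list[str]:
    """Return pages older than max_age_days, oldest first."""
    stale = [
        (updated, url)
        for url, updated in last_updated.items()
        if (today - updated).days > max_age_days
    ]
    return [url for updated, url in sorted(stale)]


# Hypothetical export of last-updated dates.
LAST_UPDATED = {
    "/guide-a": date(2025, 6, 1),
    "/guide-b": date(2026, 3, 15),
    "/guide-c": date(2024, 11, 20),
}

to_refresh = stale_pages(LAST_UPDATED, today=date(2026, 4, 29))
print(to_refresh)
```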

Step 9 — Optimize for each AI platform separately

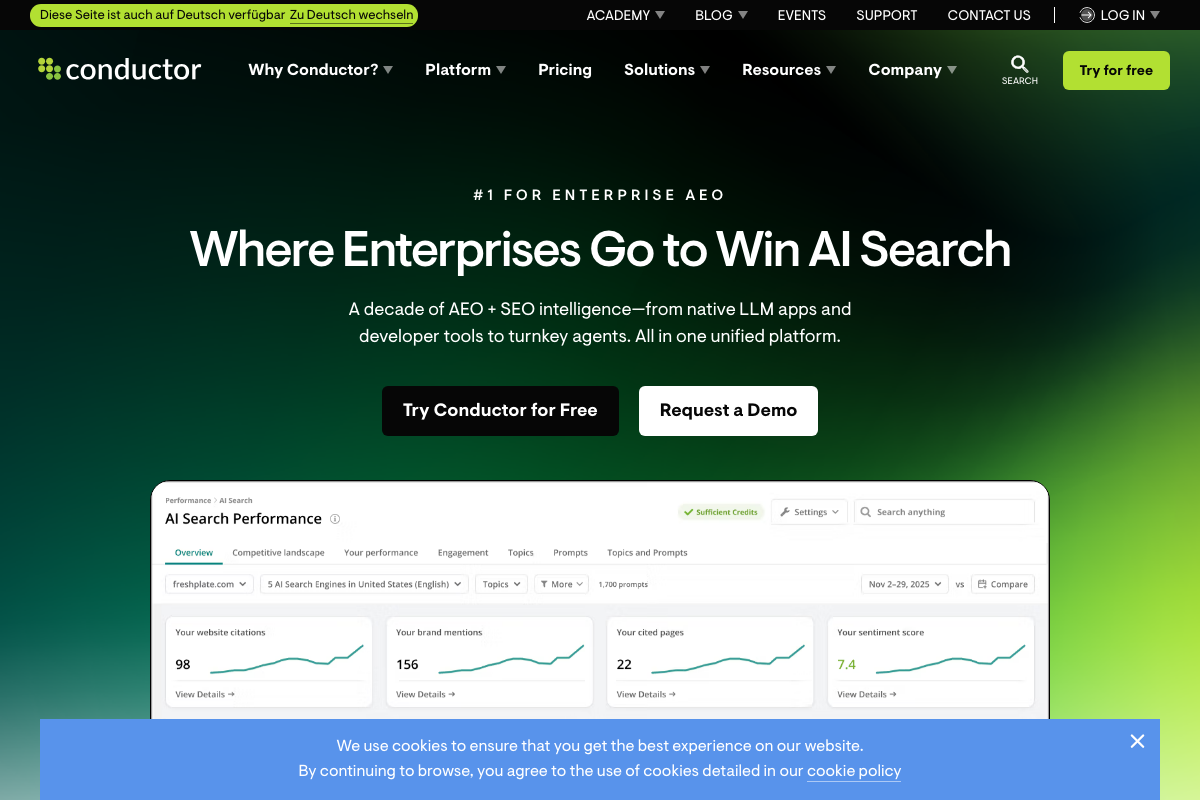

The video lists ChatGPT, Gemini, Perplexity, and AI Overviews as the four platforms to optimize for. As of April 29, 2026, both Conductor and Writesonic track Claude and Microsoft Copilot as distinct AI search engines with measurable citation behavior — neither appears in the tutorial’s platform list, and both are omissions worth correcting before scoping a client engagement. Separately, the Google AI Overviews source URL the video references (https://blog.google/products/search/ai-overviews-search/) returns a 404 error as of April 29, 2026 — current official guidance on AI Overviews is available through Google Search Central, not this address.

Step 10 — Track GEO-driven leads as a separate metric

The video’s approach here matches the current docs exactly. Ahrefs Brand Radar surfaces “Your performance by AI search engine” as a live, filterable product view. Conductor’s dashboard tracks website citations, brand mentions, cited pages, and sentiment score across five named engines with longitudinal period-over-period comparison. Writesonic adds specific KPI language — Answers Mentioned, Total pages cited, Pages mentioning me — that maps directly into campaign-level reporting.
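If you don't use one of these tools, a rough first pass is to split leads by referrer hostname. The sketch below is a minimal illustration: the hostname-to-platform mapping is an assumption to check against your own analytics data, the sample referrers are made up, and referrers alone will miss AI-driven visits that arrive without one.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames for AI platforms; verify against your own analytics.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "gemini.google.com": "Gemini",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}


def lead_source(referrer: str) -> str:
    """Map a referrer URL to an AI platform name, or 'other' if it is not in the table."""
    host = (urlparse(referrer).hostname or "").lower()
    return AI_REFERRER_HOSTS.get(host, "other")


def geo_share(referrers: list[str]) -> float:
    """Fraction of leads whose referrer is a known AI platform."""
    if not referrers:
        return 0.0
    ai_leads = sum(1 for referrer in referrers if lead_source(referrer) != "other")
    return ai_leads / len(referrers)


# Hypothetical lead referrers; the empty string stands for a direct visit.
LEAD_REFERRERS = [
    "https://chatgpt.com/",
    "https://www.google.com/search?q=geo",
    "https://www.perplexity.ai/search/abc",
    "",
]

share = geo_share(LEAD_REFERRERS)
print(f"GEO-driven share of leads: {share:.1%}")
```

Reporting this share as its own line, rather than folding it into organic traffic, is what lets you see a shift like the one NP Digital reported.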

Useful Links

- ChatGPT — Consumer homepage confirming Deep Research as a named ChatGPT feature distinct from standard conversational chat.

- Gemini API | Google AI for Developers — Developer documentation confirming Gemini’s live web retrieval via Google Search and URL Context as explicit built-in tools.

- Perplexity — Consumer homepage confirming Perplexity’s vertical search modes including Finance, Health, Academic, and Patents.

- The Web Robots Pages — Canonical robots exclusion protocol reference with a /robots.txt checker tool for auditing crawler access.

- NP Digital — Global digital marketing agency listing GEO/AEO as a named, purchasable service category alongside traditional SEO.

- Ahrefs — AI Marketing Platform Powered by Big Data — SEO and AI search platform with Brand Radar for tracking citations, brand mentions, and chatbot appearances segmented by AI engine.

- Conductor — Win in AI Search — Enterprise AEO platform tracking AI search performance across ChatGPT, Gemini, Copilot, Claude, and traditional search with citation and sentiment metrics.

- Writesonic — AI Search Visibility Tracking & Optimization Platform — AI search optimization platform with per-platform visibility scoring across Claude, Perplexity, Gemini, and a Track → Action → Results workflow.
