Generative Engine Optimization: Is It Safe and How to Do It the Right Way

Brands racing to appear in ChatGPT and Perplexity answers are increasingly turning to Generative Engine Optimization — but many of the tactics being sold are actively destroying the organic foundations that make GEO possible in the first place. In this practitioner interview, SEO specialist Harpre Singh breaks down how AI retrieval actually works, why the most popular GEO playbooks are high-risk, and what a sustainable approach looks like. After working through these steps, you’ll be able to audit your own GEO exposure and build a citation strategy that doesn’t trade short-term AI visibility for long-term search penalties.

- Understand how query fan-out works. When a user types a query into ChatGPT, Perplexity, or any AI tool, the system silently expands that single query into multiple sub-queries before fetching results. A search for “best running shoes” might spawn “best running shoes 2026” in the background. The dominant GEO advice, which is to produce content for every keyword variation the AI might generate, treats this as a content scaling opportunity. It is not. Chasing fan-out at scale produces low-commercial-intent content that doesn’t generate revenue, and it creates the conditions for the rank-and-tank pattern described in the next step.

- Recognize the rank-and-tank pattern. Sites that use GEO content tools to scale from roughly 100 to 1,000+ blog posts in a matter of weeks typically see an initial traffic spike. GEO vendors frame that spike as proof of increased AI visibility. What follows is a Google algorithm penalty that can require two to three years to recover from, a timeline Singh calls “not an understatement.” The core problem: inflated citation counts from informational keywords don’t represent real commercial visibility, and the underlying SEO damage is severe.

- Distinguish between web retrieval and LLM training data. Most commercially relevant queries trigger a live web search inside the AI tool, not a lookup against the model’s training data. When ChatGPT or Claude searches the web, it uses a search engine API (Bing, Google, or similar) to retrieve URLs. Studies show a strong correlation between traditional search rankings and the probability of a page being retrieved by those AI web searches. This means traditional on-page SEO is the actual foundation of GEO for most brands, not a parallel track.

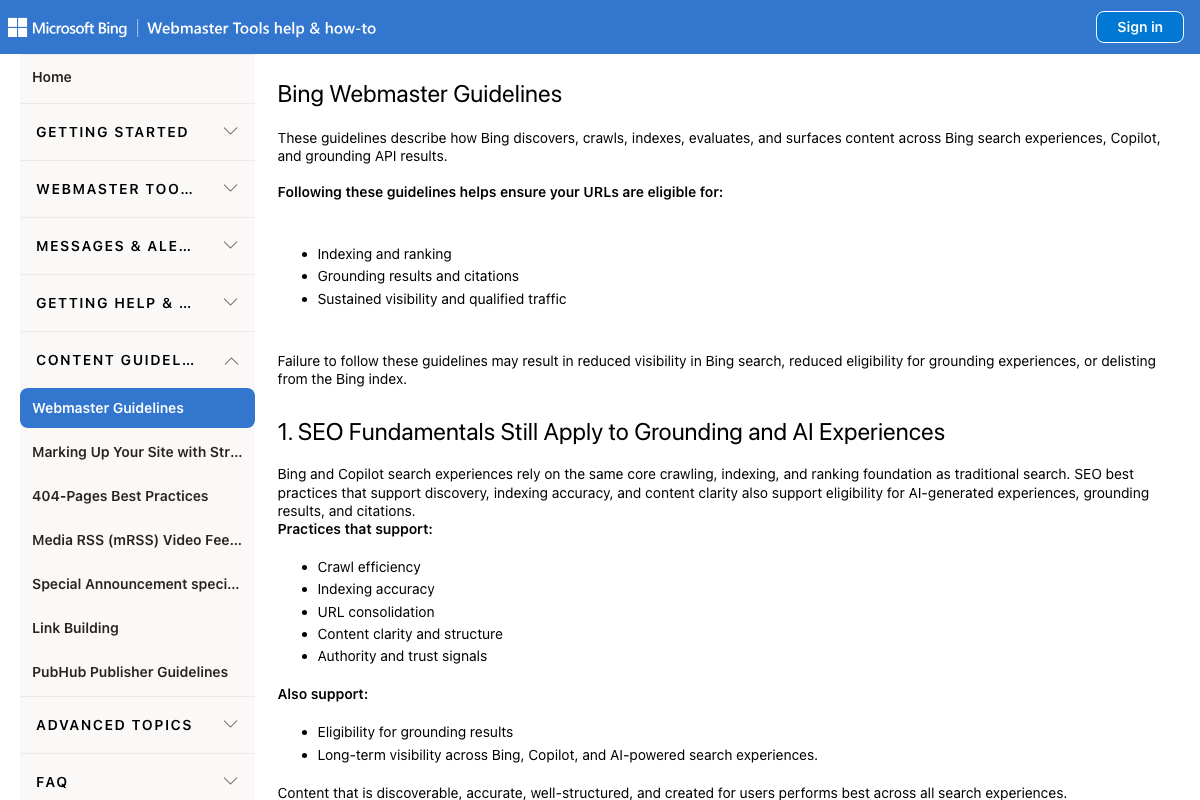

- Run the free-vs-paid ChatGPT experiment. Open an incognito browser tab with the free version of ChatGPT. In a separate tab, log into a paid account. Submit the same query in both, then repeat it several times with slight phrasing variations. Compare citation presence, answer accuracy, and whether the tool searched the web at all. The free version frequently answers from training data without a web search; the paid version more often retrieves live sources. That difference tells you exactly which optimization lever applies to your use case.

- Use the experiment to decide your optimization focus. If the paid version consistently cites live web results, double down on traditional on-page SEO: metadata, authority, link equity. If answers appear to come from training data without a web search, the priority shifts to updating third-party profiles and sources the LLM can reference without fetching the web.

- Audit and optimize your Trustpilot profile. Trustpilot is heavily cited in ChatGPT responses to brand-plus-reviews queries. Respond to negative reviews. Build a systematic process for encouraging satisfied customers to leave reviews. Improving your Trustpilot presence directly improves the content the AI is likely to surface when someone searches your brand.

- Update your presence on LLM training data sources. Reddit threads, G2 listings, local board of trade directories, and similar platforms can be referenced by an LLM without a live web search. Singh cites an anecdotal case where negative G2 and Reddit content about implementation timelines was reflected in model outputs, and those outputs updated over time as the sources changed.

Warning: this step may differ from current official documentation — see the verified version below.

- Build comparison pages only where real search demand exists. Brand A vs. Brand B pages have legitimate GEO value, but only when users are genuinely searching that comparison. Manufacturing comparison pages for obscure competitors at scale is another version of the rank-and-tank trap: it generates low-value content, dilutes topical authority, and doesn’t drive conversions.

- Track brand queries using paid ChatGPT to identify cited sources. Prompt-tracking tools capture screenshots of AI-generated answers. Run your tracked queries through a paid ChatGPT account, not the free version, so the tool performs a live web search. Examine which specific sources are cited, then optimize those sources directly rather than adding net-new content.
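The source review in the last step can be sketched as a simple tally. This is a minimal Python example, assuming you have copied the cited URLs out of each answer by hand; `cited_domains` is a hypothetical helper, and the queries and URLs below are made-up placeholders rather than real tracking data.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domains(citations_by_query: dict[str, list[str]]) -> Counter:
    """Count how many tracked queries cite each domain.

    A domain is counted once per query, so a single answer that links
    to the same site several times does not inflate the total.
    """
    counts: Counter = Counter()
    for urls in citations_by_query.values():
        domains = {urlparse(u).netloc.lower().removeprefix("www.") for u in urls}
        counts.update(domains)
    return counts


# Placeholder data: brand names, queries, and URLs are illustrative only.
tracked = {
    "acme reviews": [
        "https://www.trustpilot.com/review/acme.example",
        "https://www.reddit.com/r/example/comments/1",
    ],
    "acme vs globex": [
        "https://www.g2.com/compare/acme-vs-globex",
        "https://www.trustpilot.com/review/acme.example",
    ],
}

counts = cited_domains(tracked)
for domain, n in counts.most_common():
    print(f"{domain}: cited in {n} tracked queries")
```

Domains that show up across many tracked queries are the candidates to optimize first, which matches the advice to improve existing cited sources before writing anything new.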

How does this compare to the official docs?

The tactics Singh describes are grounded in practitioner observation rather than published platform guidelines — which raises important questions about what guidance actually exists from OpenAI, Google, and Bing on how their AI search systems evaluate and retrieve brand content.

Here’s What the Official Docs Show

The practitioner take above holds up well where platform documentation exists. What follows layers in the official evidence, flags what couldn’t be verified, and surfaces one platform development the tutorial didn’t cover.

Step 1: Query fan-out mechanics

No official documentation was found for this step — proceed using the video’s approach and verify independently.
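To make the fan-out idea concrete, here is a minimal Python sketch of how one seed query can multiply into variants. The `fan_out` function and the modifier lists are hypothetical illustrations only; they are not how ChatGPT or Perplexity actually expand queries, which could not be verified against official documentation.

```python
from itertools import product


def fan_out(seed: str, modifier_groups: list[list[str]]) -> list[str]:
    """Combine the seed with one choice from each modifier group.

    An empty string in a group means "no modifier from this group".
    """
    variants = []
    for combo in product(*modifier_groups):
        parts = [seed] + [m for m in combo if m]
        variants.append(" ".join(parts))
    return variants


# Assumed modifier groups, chosen only to illustrate the growth in variants.
groups = [
    ["", "2026"],
    ["", "for beginners", "for flat feet"],
]

queries = fan_out("best running shoes", groups)
print(len(queries))
for q in queries:
    print(q)
```

Two small modifier groups already turn one query into six, which is why writing a page for every variant scales content volume much faster than it scales commercial intent.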

Step 2: The rank-and-tank pattern

No official documentation was found for this step — proceed using the video’s approach and verify independently.

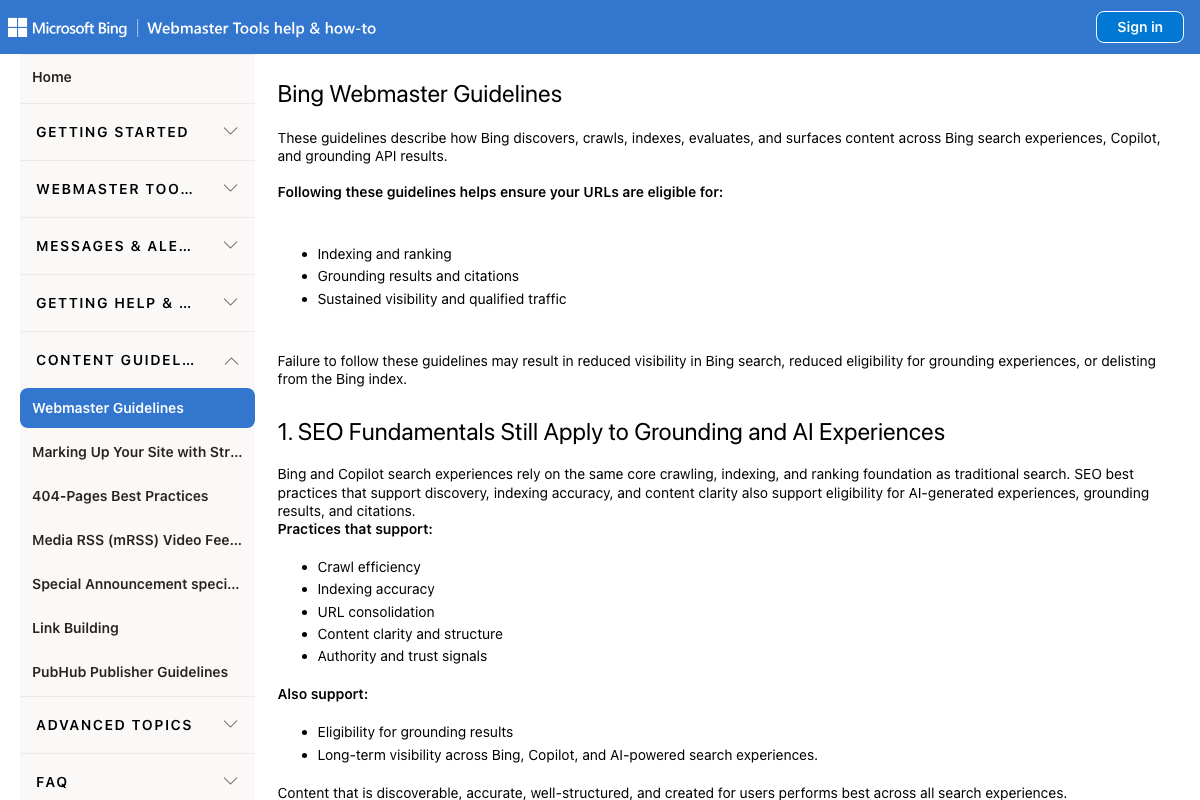

Step 3: Web retrieval vs. training data

The video’s approach here matches the current docs exactly. Bing’s official Webmaster Guidelines state directly: “Bing and Copilot search experiences rely on the same core crawling, indexing, and ranking foundation as traditional search” and list crawl efficiency, indexing accuracy, content clarity, and authority signals as the specific factors that determine grounding eligibility. One scope note: this guidance covers Bing and Copilot specifically. Equivalent published documentation from OpenAI on ChatGPT’s retrieval ranking signals was not captured in these screenshots.

Step 4: Free-vs-paid ChatGPT experiment

The video’s approach here matches the current docs exactly. The free-tier interface is accessible without login at chatgpt.com, consistent with the incognito-tab instruction. One clarification: “Deep research,” “Images,” and “Apps” appear in the sidebar for unauthenticated users — these are paid-feature teasers, not functional capabilities at the free tier.
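One way to keep the experiment honest is to log each run and compare tiers numerically. The sketch below is a minimal Python example under stated assumptions: the `Run` record, the 0.5 threshold in `suggested_focus`, and the sample observations are all hypothetical, and you would fill in `searched_web` by hand from what each answer actually showed.

```python
from dataclasses import dataclass


@dataclass
class Run:
    tier: str           # "free" or "paid"
    searched_web: bool  # True if the answer showed a live web search or citations


def web_search_rate(runs: list[Run], tier: str) -> float:
    """Share of runs on the given tier where the tool searched the web."""
    tier_runs = [r for r in runs if r.tier == tier]
    if not tier_runs:
        return 0.0
    return sum(r.searched_web for r in tier_runs) / len(tier_runs)


def suggested_focus(rate: float, threshold: float = 0.5) -> str:
    """Map a web-search rate to an optimization lever (threshold is an assumption)."""
    return "on-page SEO" if rate >= threshold else "third-party profiles"


# Hypothetical observations: one query, four phrasings per tier.
runs = [
    Run("free", False), Run("free", False), Run("free", True), Run("free", False),
    Run("paid", True), Run("paid", True), Run("paid", False), Run("paid", True),
]

for tier in ("free", "paid"):
    rate = web_search_rate(runs, tier)
    print(tier, rate, suggested_focus(rate))
```

A handful of runs per tier is a small sample, so treat the rates as a rough signal for which lever to investigate rather than a measurement.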

Step 5: Choosing your optimization focus

No official documentation was found for this step — proceed using the video’s approach and verify independently.

One additive data point from the screenshots: Google’s homepage now displays an “AI Mode” button directly in the search bar. If your audience is running queries in Google AI Mode rather than ChatGPT, the step 4 experiment would need to be run separately against that interface.

Step 6: Trustpilot profile optimization

The video’s approach here matches the current docs exactly. Trustpilot confirms the public review platform, the “For businesses” management portal, and the platform’s explicit positioning of review volume as a growth lever. One clarification: the claim that Trustpilot is “heavily cited in ChatGPT results for brand-plus-reviews queries” is not verifiable from available screenshots — treat it as practitioner observation rather than documented behavior.

Step 7: LLM training data source updates

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Relevant context: G2.com returned a bot-detection block across all three capture attempts. If automated indexing is similarly restricted at the network level, G2’s availability as a real-time LLM retrieval source may be more constrained than the tutorial implies.
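One partial check you can run yourself is whether a site's robots.txt disallows a given crawler. The sketch below uses Python's standard `urllib.robotparser` on a made-up robots.txt; the rules and URL are hypothetical and are not G2's actual file. Note the limit of this check: robots.txt is only one layer, and bot-detection blocks like the one described above would not show up in it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


url = "https://example.com/products/acme"  # placeholder URL
for agent in ("GPTBot", "Googlebot"):
    print(agent, allowed(SAMPLE_ROBOTS_TXT, agent, url))
```

To test a real site, you would paste in the text of its live robots.txt in place of `SAMPLE_ROBOTS_TXT` and use the user-agent names each AI vendor publishes for its crawlers.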

Step 8: Comparison pages and real demand

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Step 9: Tracking brand queries via paid ChatGPT

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Only the logged-out free-tier ChatGPT interface was captured; the paid interface described in this step was not verified against current UI.

Useful Links

- Webmaster Guidelines – Bing Webmaster Tools — Official Bing guidance explicitly confirming that SEO fundamentals determine eligibility for Copilot grounding results and AI citations.

- ChatGPT — OpenAI’s consumer AI interface, accessible at the free tier without login and the starting point for the tutorial’s free-vs-paid comparison experiment.

- Trustpilot Reviews: Experience the power of customer reviews — Public review platform with a business management portal and structured category rankings relevant to AI citation eligibility.

- G2 — B2B software review platform; bot-detection restricted all capture attempts, limiting verification of the tutorial’s training-data source claims.

- Google — Google Search homepage; now features an “AI Mode” button in the search bar, a Google-native AI surface the tutorial’s methodology does not address.

- Sign in – Claude — Anthropic’s consumer AI assistant; a separate platform from ChatGPT with an analogous free, Pro, and Max tier structure.
