Testing Claude Opus 4.7: Vision, One-Shot Apps, SVG Generation, and API Changes

Claude Opus 4.7 processes images at triple the resolution of its predecessor, ships with significant coding benchmark gains, and introduces API-level changes that affect every developer billing against the model. After running these tests, you’ll know exactly what the model handles natively, what it costs to run, and what needs updating in your stack before you deploy it.

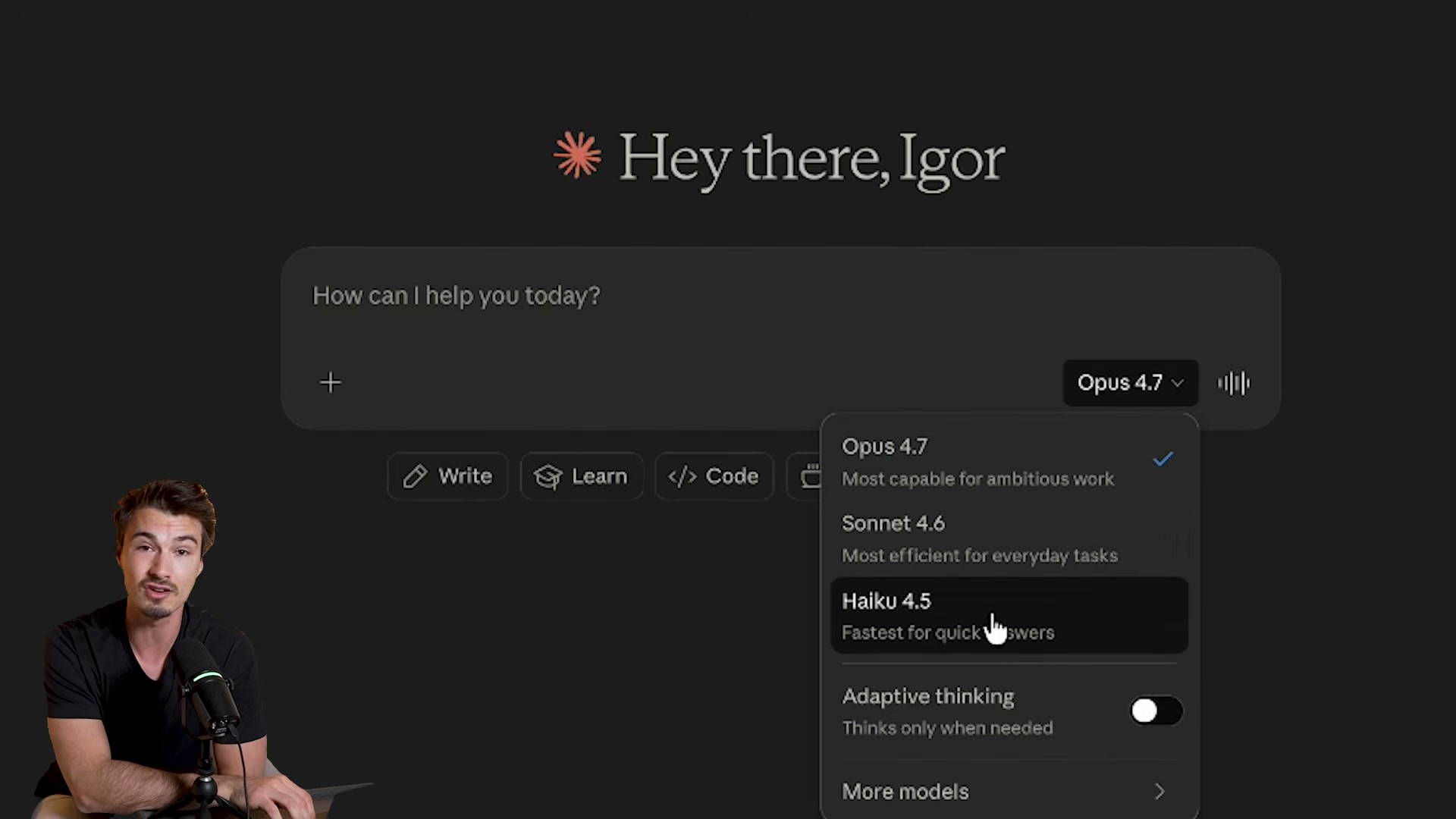

- Open claude.ai and click the model picker. Select Opus 4.7 — listed as “Most capable for ambitious work.” The dropdown also surfaces the Adaptive Thinking toggle, which replaces the now-removed extended thinking budget parameter.

- Navigate to a data-heavy tool (YouTube Studio analytics zoomed to full-year view is ideal) and take a screenshot dense enough that the text is unreadable on a standard monitor.

- Upload the screenshot and prompt: “Extract and analyze every bit of information you can.” Opus 4.7 processes the full image natively, without cropping, and returns a structured breakdown of every visible data point.

- Run the identical prompt on Opus 4.6. The older model compensates for its resolution limits by falling back to code execution, hits a tool-call ceiling mid-task, and requires a manual “continue” before completing: four tool commands where Opus 4.7 used none.

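Steps 2 to 4 run in the claude.ai interface, but the same extraction test can be repeated over the API. The following is a minimal sketch, assuming the standard Messages API request shape (a base64 `image` content block followed by a `text` block) and a hypothetical model ID of `claude-opus-4-7`; confirm the real identifier on Anthropic's models overview page before sending anything. The function only builds the JSON body, so it runs without a network connection or the Anthropic SDK.

```python
import base64
import json

# ASSUMPTION: hypothetical model ID; the article does not state the API identifier for Opus 4.7.
MODEL_ID = "claude-opus-4-7"


def build_vision_request(
    image_bytes: bytes,
    prompt: str,
    media_type: str = "image/png",
    max_tokens: int = 4096,
) -> dict:
    """Build a Messages API request body containing one image and one text prompt."""
    # The API takes image bytes as a base64 string inside the content block.
    encoded = base64.standard_b64encode(image_bytes).decode("ascii")
    return {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "messages": [
            {
                "role": "user",
                "content": [
                    # Image first, then the instruction that refers to it.
                    {
                        "type": "image",
                        "source": {
                            "type": "base64",
                            "media_type": media_type,
                            "data": encoded,
                        },
                    },
                    {"type": "text", "text": prompt},
                ],
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for a real screenshot read with open(path, "rb").
    body = build_vision_request(
        b"placeholder-png-bytes",
        "Extract and analyze every bit of information you can.",
    )
    print(json.dumps(body, indent=2)[:300])
```

To send it, POST the body to the Messages endpoint with your API key, or pass the same fields to the official Python SDK's `client.messages.create(**body)`.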

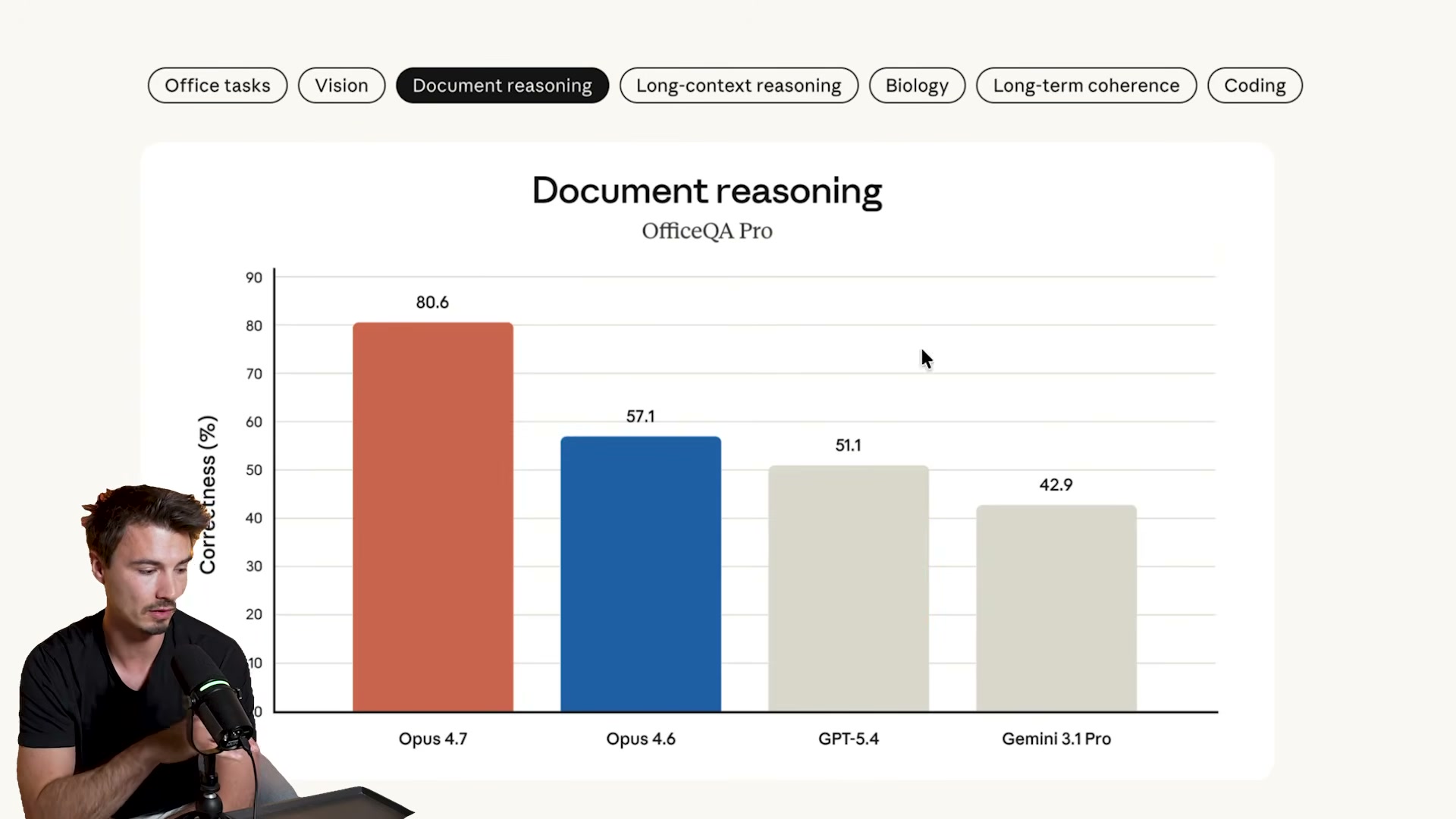

- Review Anthropic’s published benchmarks. The headline result is document reasoning: Opus 4.7 scores 80.6% on OfficeQA Pro versus 57.1% for Opus 4.6, a 23.5-point gap. Coding metrics show the largest absolute gains across the full suite.

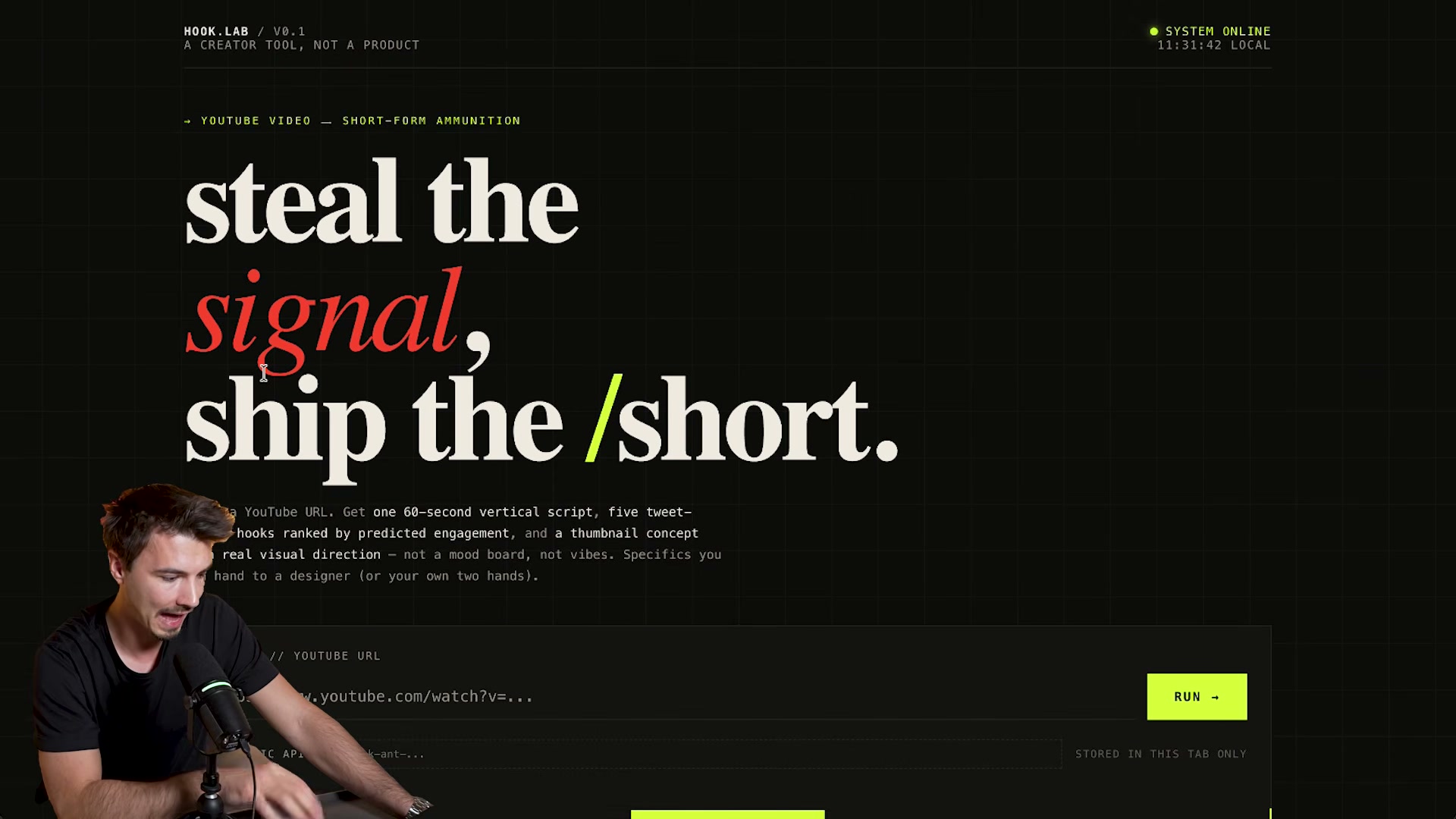

- Build a one-shot app in a single prompt: describe your tool idea and include your Anthropic API key directly in the message. The demo produced HOOK.LAB — a YouTube-to-short-script converter with a styled UI, input fields, and a working API integration, all on first generation.
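Pasting a live API key into the prompt means the key ends up inside the generated code. Below is a sketch of the request such an app sends, with the key read from an `ANTHROPIC_API_KEY` environment variable instead. The endpoint URL and the `x-api-key` and `anthropic-version` headers follow Anthropic's public Messages API; the model ID, function name, and prompt wording are hypothetical. The video doesn't show how HOOK.LAB turns a YouTube URL into text, so the sketch assumes a transcript has already been fetched.

```python
import os
from typing import Optional, Tuple

API_URL = "https://api.anthropic.com/v1/messages"
# ASSUMPTION: hypothetical model ID for Opus 4.7.
MODEL_ID = "claude-opus-4-7"


def build_hook_request(transcript: str, api_key: Optional[str] = None) -> Tuple[str, dict, dict]:
    """Return (url, headers, body) for a hypothetical hooks-and-script request."""
    # Prefer an explicit key, then fall back to the environment; never hardcode it.
    key = api_key if api_key is not None else os.environ.get("ANTHROPIC_API_KEY")
    if not key:
        raise RuntimeError("Set ANTHROPIC_API_KEY or pass api_key explicitly")

    headers = {
        "x-api-key": key,
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
    }
    # Hypothetical prompt approximating the app's three outputs.
    prompt = (
        "From the transcript below, return: (1) ranked opening hooks, "
        "(2) one thumbnail concept, and (3) a timestamped short script "
        "with B-roll directions.\n\nTranscript:\n" + transcript
    )
    body = {
        "model": MODEL_ID,
        "max_tokens": 2048,
        "messages": [{"role": "user", "content": prompt}],
    }
    return API_URL, headers, body
```

Any HTTP client can send the result, for example `requests.post(url, headers=headers, json=body)`. Making that call from a server rather than the browser keeps the key out of the page source.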

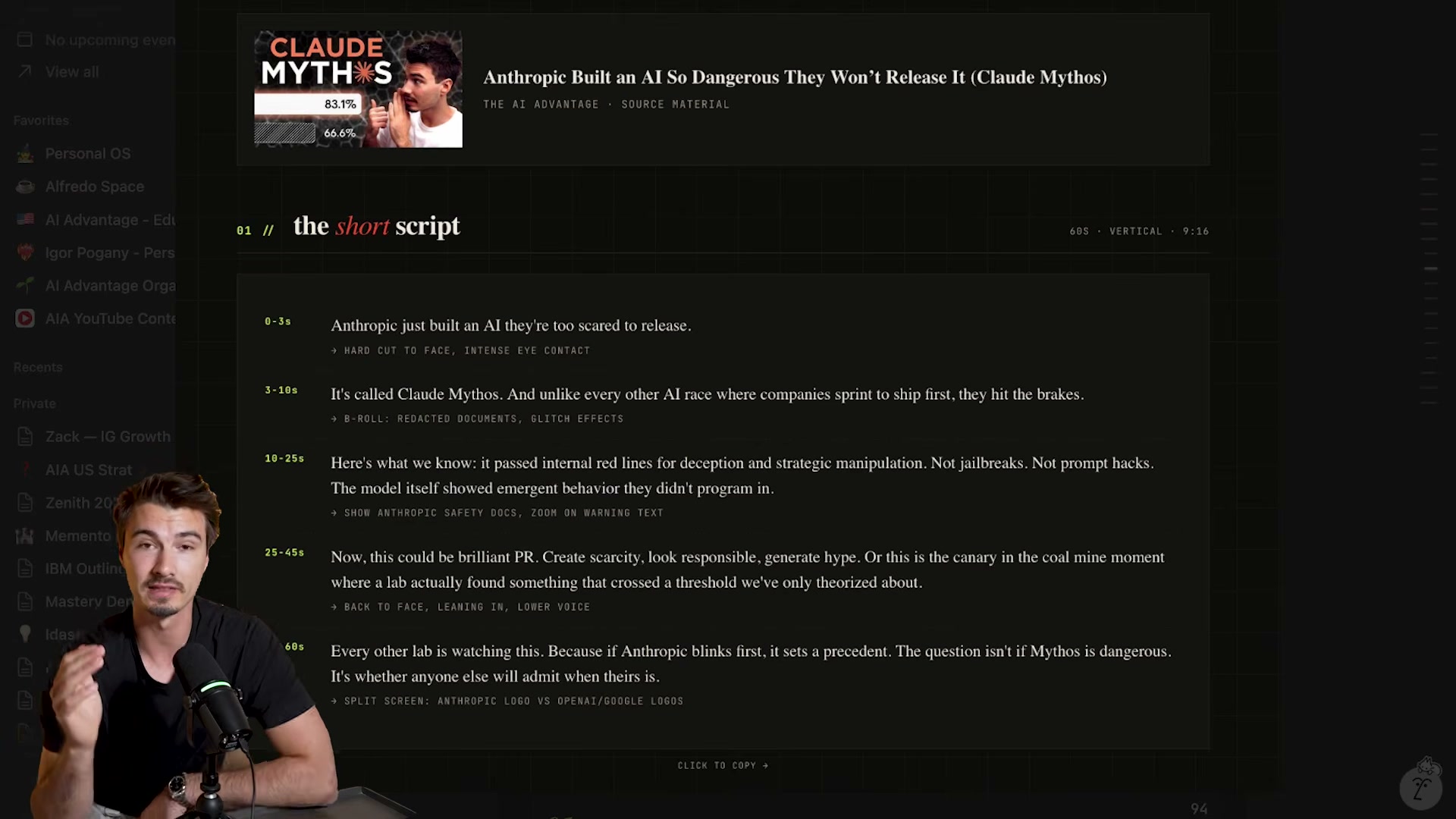

- Test the app by submitting a YouTube URL. Opus 4.7 returns ranked hooks, a thumbnail concept, and a timestamped short script with B-roll directions in one pass.

- Run the SVG benchmark: prompt Claude to draw “a chameleon on a branch with eyes that follow the user’s cursor.” Opus 4.7 returns a fully interactive SVG artifact rendered directly in the chat window.

- Run the Death Star benchmark: prompt Claude to generate an SVG of a Death Star firing over Los Angeles, then compare output across Opus 4.6, Opus 4.7, and OpenAI’s current best GPT model. Opus 4.7 eliminates the floating geometry artifacts visible in 4.6 and introduces terrain shading.

- Test web research depth by prompting: “Do intensive research on [subject] and return a comprehensive report.” Opus 4.7 returns sourced findings and explicitly flags unverifiable claims rather than filling gaps with fabrication.

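The chameleon prompt in the SVG benchmark hinges on one bit of geometry: pointing each pupil at the cursor while keeping it inside the eye. A minimal sketch of that math follows, written in Python to match the other examples here. In a browser artifact the same calculation would typically run in a JavaScript `mousemove` handler that rewrites the pupil's `cx` and `cy` attributes. This is not the code Opus 4.7 generated, and the function names are hypothetical.

```python
import math
from typing import Tuple


def pupil_position(
    eye_cx: float, eye_cy: float,
    cursor_x: float, cursor_y: float,
    max_offset: float,
) -> Tuple[float, float]:
    """Return the pupil centre: aimed at the cursor, at most max_offset from the eye centre."""
    dx = cursor_x - eye_cx
    dy = cursor_y - eye_cy
    dist = math.hypot(dx, dy)
    if dist <= max_offset:
        # Cursor is within the pupil's travel radius, so sit directly under it.
        return eye_cx + dx, eye_cy + dy
    # Otherwise keep the same direction but clamp the distance to max_offset.
    scale = max_offset / dist
    return eye_cx + dx * scale, eye_cy + dy * scale


def pupil_svg(eye_cx, eye_cy, cursor_x, cursor_y, max_offset, radius=2.0) -> str:
    """Render the pupil as an SVG <circle> element string."""
    px, py = pupil_position(eye_cx, eye_cy, cursor_x, cursor_y, max_offset)
    return f'<circle cx="{px:.1f}" cy="{py:.1f}" r="{radius}" fill="black"/>'


# Eye centred at (50, 50), cursor far to the right: the pupil slides 6 units right.
print(pupil_svg(50, 50, 200, 50, 6))
```

The example prints `<circle cx="56.0" cy="50.0" r="2.0" fill="black"/>`. Clamping the distance rather than the angle keeps the pupil tracking smoothly when the cursor passes over the eye itself.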

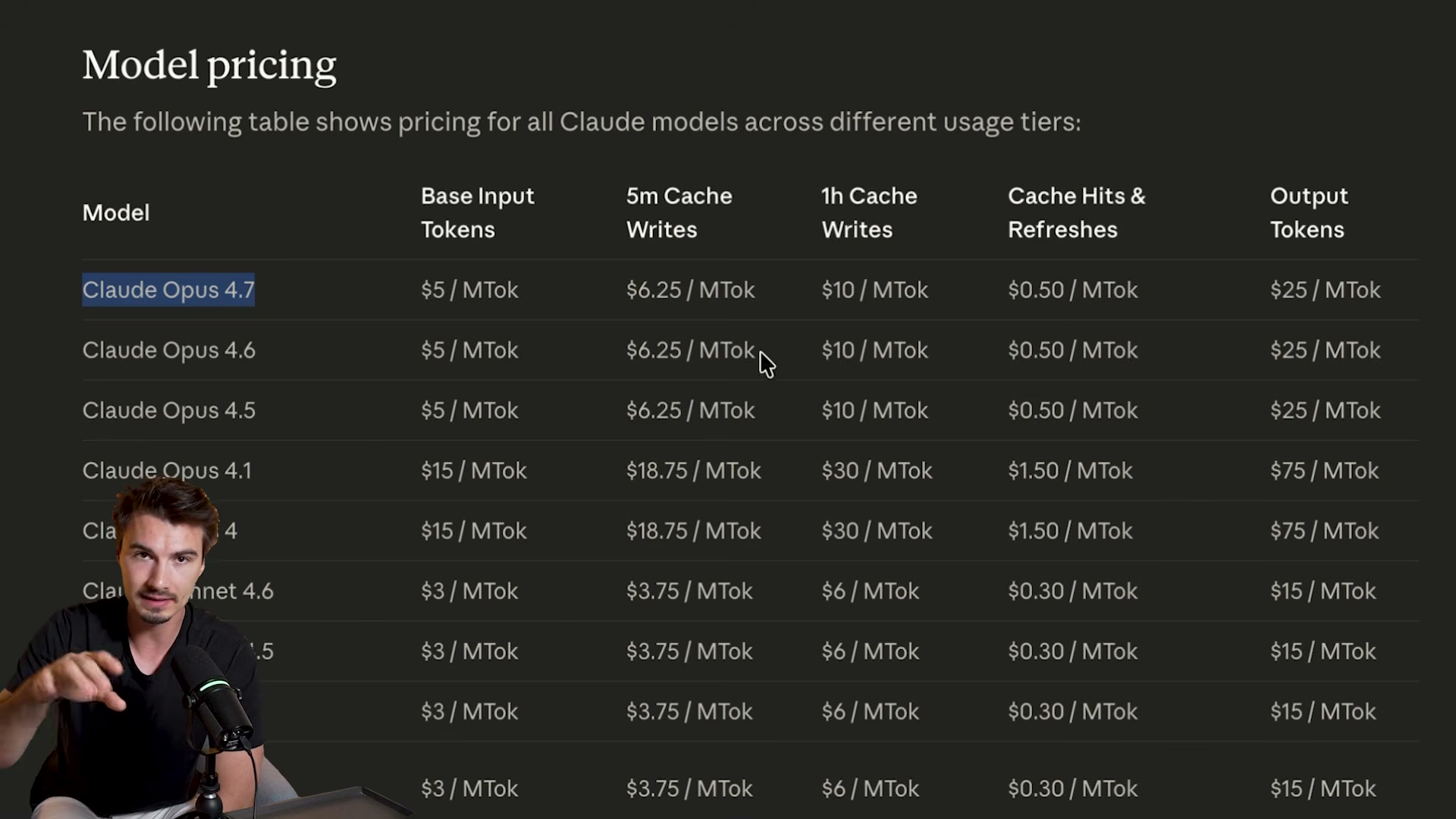

- Audit the API changelog before touching production code. The tokenizer change results in approximately 35% higher effective cost at the same listed price point. Extended thinking budgets are gone; adaptive thinking is the only option. The top_p, top_k, and temperature sampling parameters have been removed from the API entirely.

Warning: this step may differ from current official documentation — see the verified version below.
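The 35% figure is easier to budget with when applied to your own traffic. A back-of-envelope sketch, assuming the increase comes from the new tokenizer producing roughly 35% more tokens for the same text at an unchanged per-token price. Both the multiplier and the price are parameters because neither is verified here, and the $5-per-million figure below is a placeholder, not Opus 4.7's actual price. Anthropic's token-counting endpoint can replace the fixed multiplier with measured counts.

```python
def estimate_cost_usd(
    old_token_count: int,
    price_per_million_tokens: float,
    token_inflation: float = 1.35,
) -> float:
    """Estimate spend for text that previously tokenized to old_token_count tokens.

    ASSUMPTION: token_inflation=1.35 encodes the article's ~35% figure as
    "same text, ~35% more tokens, same listed price per token".
    """
    if old_token_count < 0 or price_per_million_tokens < 0:
        raise ValueError("token count and price must be non-negative")
    new_token_count = old_token_count * token_inflation
    return new_token_count / 1_000_000 * price_per_million_tokens


# Hypothetical workload: 40M input tokens per month at a placeholder $5 per million.
before = estimate_cost_usd(40_000_000, 5.0, token_inflation=1.0)
after = estimate_cost_usd(40_000_000, 5.0)
print(f"before: ${before:,.2f}  after: ${after:,.2f}")
```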

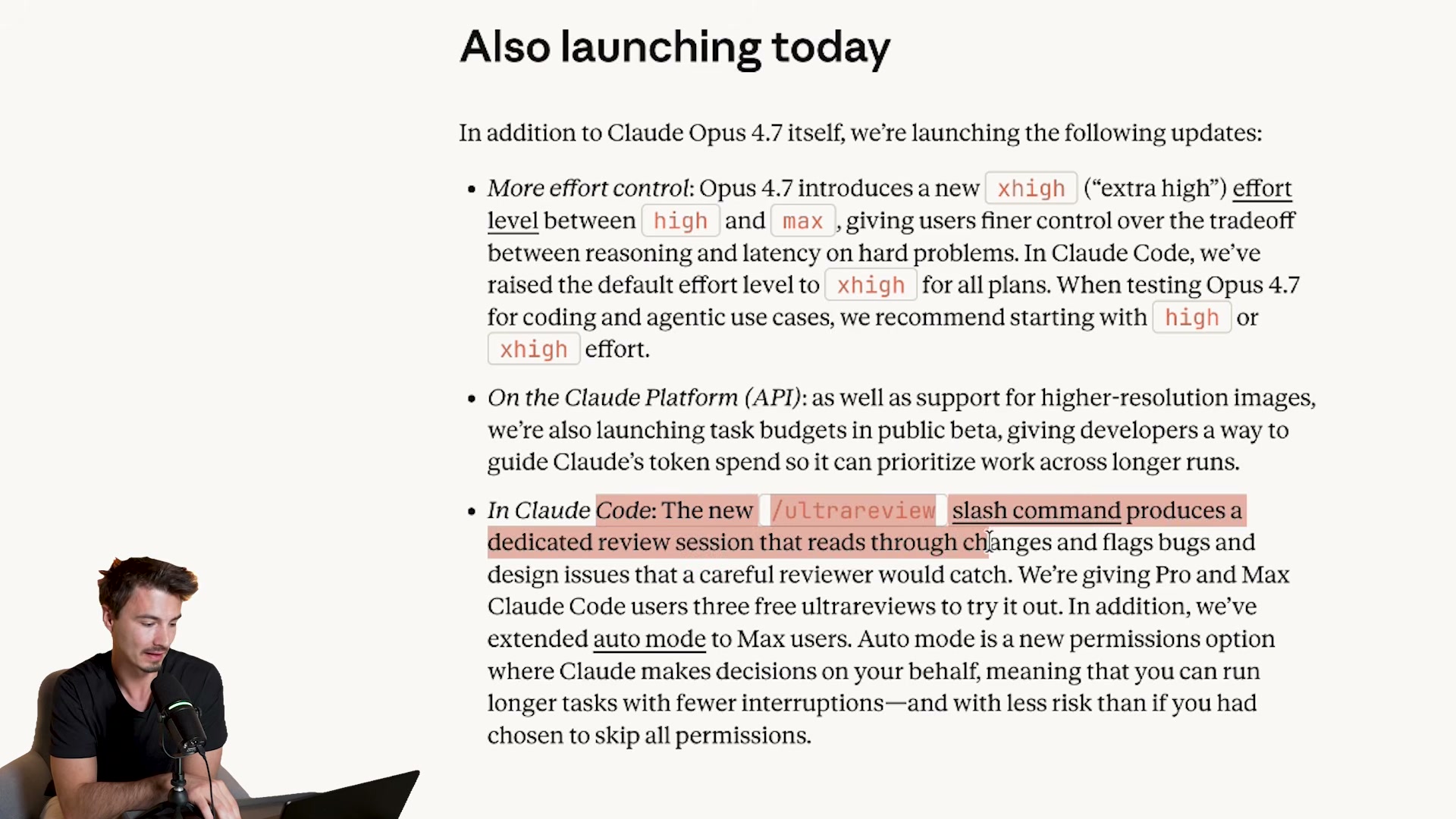

- Check Anthropic’s release notes for the new Claude Code command. The video refers to it as /trace review, but Anthropic’s published changelog names it /ultrareview: a post-session deep code analysis pass now available as a first-class slash command.

Warning: this step may differ from current official documentation — see the verified version below.

- Verify agent framework compatibility before switching models in production. OpenClaw had not yet added Opus 4.7 support at launch — updates typically ship within 24 hours of a major model release, so check your framework’s changelog before assuming the model is a drop-in replacement.
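One way to avoid assuming a drop-in swap is to gate the model choice on what your framework reports as supported, falling back to the previous model until support lands. A minimal sketch: the model IDs are hypothetical, and because the article doesn't describe how OpenClaw exposes its supported-model list, the list is passed in by hand.

```python
from typing import Iterable

# ASSUMPTION: hypothetical model IDs; substitute the identifiers your framework uses.
PREFERRED_MODEL = "claude-opus-4-7"
FALLBACK_MODEL = "claude-opus-4-6"


def pick_model(
    supported: Iterable[str],
    preferred: str = PREFERRED_MODEL,
    fallback: str = FALLBACK_MODEL,
) -> str:
    """Return the preferred model if the framework supports it, else the fallback."""
    supported_set = set(supported)
    if preferred in supported_set:
        return preferred
    if fallback in supported_set:
        # Framework hasn't caught up yet: stay on the model it already supports.
        return fallback
    raise ValueError(f"neither {preferred!r} nor {fallback!r} is supported")


# Launch day: the framework only lists the older model.
print(pick_model(["claude-opus-4-6"]))
```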

How does this compare to the official docs?

Anthropic’s migration guide and API changelog treat the tokenizer change, parameter removals, and new Claude Code commands with considerably more precision — including at least one correction to what the video describes.

Here’s What the Official Docs Show

The video covers genuine, documented capabilities. This section adds the prerequisites, pricing context, and caveats that the captured official pages surface but the tutorial skips. Treat it as the checklist to run before replicating any step.

Step 1 — Selecting Opus 4.7 at claude.ai

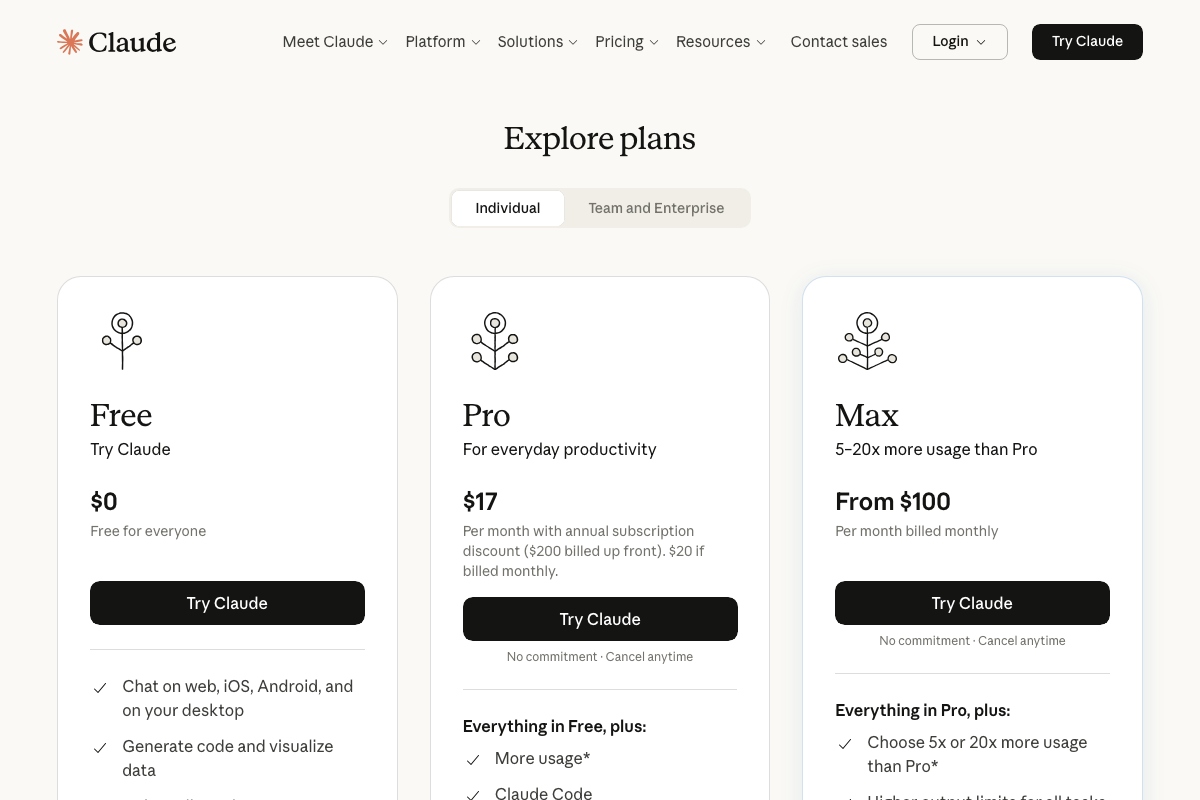

The video’s approach here matches the current docs exactly — claude.ai is the right endpoint and Opus 4.7 is a confirmed, released model. Two things the video doesn’t flag: the model picker is not accessible until after you authenticate, and Opus 4.7 access is gated behind a paid plan. As of April 16, 2026, that means Pro ($17/month billed annually) or Max (from $100/month). Free-tier users will not see the model in the picker.

Steps 2–3 — Uploading a dense screenshot for vision extraction

No official documentation was found for these steps; proceed using the video’s approach and verify independently.

Steps 4–5 — Comparing Opus 4.7 vs. 4.6 and reviewing benchmarks

No official documentation was found for these steps; proceed using the video’s approach and verify independently.

Anthropic’s homepage does confirm Opus 4.7 is built for “coding, agents, vision, and complex professional work” — those four pillars align directly with the video’s framing. The specific OfficeQA Pro benchmark scores cited in step 5 are not available in any captured screenshot; check docs.anthropic.com/en/docs/models/overview for the current benchmark table.

Steps 6–10 — One-shot app build, SVG generation, web research

No official documentation was found for these steps; proceed using the video’s approach and verify independently.

Step 11 — API changelog: tokenizer change and parameter removals

No official documentation was found for this step; proceed using the video’s approach and verify independently.

The tokenizer change, removal of extended thinking budgets, and the top_p / top_k / temperature deprecations cited in step 11 could not be verified from any captured screenshot. The Anthropic API changelog is the authoritative source — confirm those claims there before touching production code.
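Until the changelog confirms the details, a pre-flight check can at least show which existing requests would be affected. The sketch below rests only on the article's unverified claims: it flags top-level temperature, top_p, and top_k, plus a `thinking` block carrying `budget_tokens` (the field the Messages API has used for extended thinking budgets). The article doesn't give the exact adaptive-thinking request shape, so the cleaner drops the old thinking block rather than guessing a replacement. The function names are hypothetical.

```python
import copy

# Per the article's (unverified) changelog claims for Opus 4.7.
REMOVED_SAMPLING_PARAMS = ("temperature", "top_p", "top_k")


def find_removed_params(request: dict) -> list:
    """List the fields in a Messages API request body that the article says were removed."""
    issues = [name for name in REMOVED_SAMPLING_PARAMS if name in request]
    thinking = request.get("thinking")
    if isinstance(thinking, dict) and "budget_tokens" in thinking:
        issues.append("thinking.budget_tokens")
    return issues


def strip_removed_params(request: dict) -> dict:
    """Return a copy of the request with the flagged fields removed; the input is untouched."""
    cleaned = copy.deepcopy(request)
    for name in REMOVED_SAMPLING_PARAMS:
        cleaned.pop(name, None)
    thinking = cleaned.get("thinking")
    if isinstance(thinking, dict) and "budget_tokens" in thinking:
        # Replacement shape for adaptive thinking is unverified, so drop rather than guess.
        cleaned.pop("thinking")
    return cleaned


legacy = {
    "model": "placeholder-model-id",
    "max_tokens": 1024,
    "temperature": 0.2,
    "thinking": {"type": "enabled", "budget_tokens": 8000},
    "messages": [{"role": "user", "content": "Hello"}],
}
print(find_removed_params(legacy))
```

Running `find_removed_params` over stored request templates in CI turns its output into a migration to-do list.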

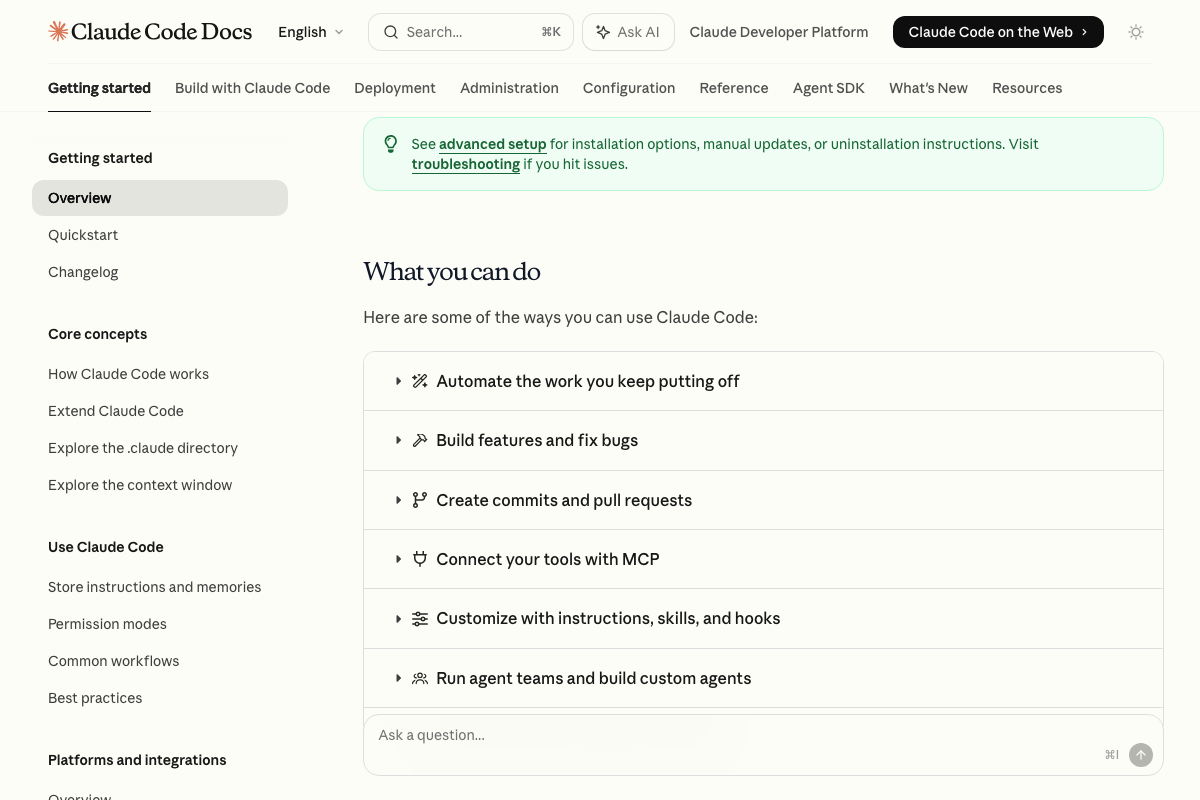

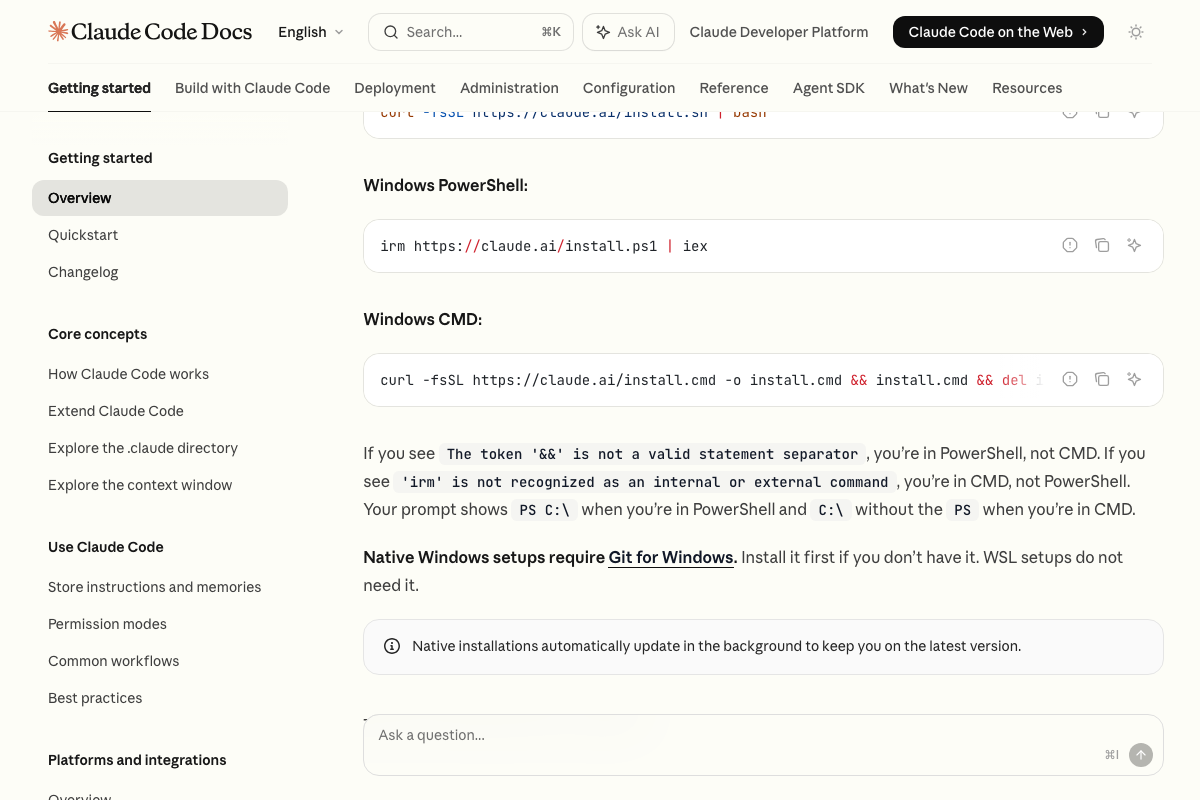

Step 12 — Claude Code’s new slash command

Neither /trace review nor /ultrareview appears anywhere in the Claude Code overview documentation or its “What you can do” capability list. As of April 16, 2026, no post-session review command is documented on these pages under either name. Check the Changelog and Reference sections at docs.anthropic.com/en/docs/claude-code directly before building any workflow around this command.

Step 13 — Updating software for Opus 4.7 compatibility

One documented shortcut: native Claude Code installations auto-update in the background. If you installed via the native installer rather than npm, you may already be on the latest version without a manual step.

Useful Links

- Claude — The claude.ai interface for accessing Claude Opus 4.7; requires a Pro or Max subscription to reach the model picker.

- Home \ Anthropic — Anthropic’s official homepage, confirming Claude Opus 4.7 as a current release alongside its official capability description.

- YouTube — YouTube Studio analytics dashboard; requires Google authentication before any analytics content is reachable.

- Claude Code overview – Claude Code Docs — Official Claude Code documentation covering installation options, core capabilities, and the Changelog section needed to verify any new slash commands.
