Programmatic advertising’s core infrastructure — the 100-millisecond real-time auction — is breaking down. Years of privacy regulation, third-party cookie deprecation, and identity fragmentation have eroded the signal quality that the entire ecosystem depends on, leaving publishers underpaid and advertisers frustrated with results. Agentic advertising — the use of autonomous AI agents to bridge buyer intent with publisher data — is emerging as the architectural replacement, and if you run media campaigns or manage publisher inventory, you need to understand how to deploy it now.

What This Is

Agentic advertising is the convergence of autonomous AI agents with the programmatic supply chain. Rather than relying on impression-by-impression bidding powered by decaying third-party identifiers, agentic systems let buyer-side and seller-side agents negotiate, evaluate inventory, and execute campaigns through natural language interfaces and structured protocols — all without requiring a human to manually mediate every transaction.

According to Vlad Stesin, CEO of Optable, writing on Digiday, the core problem is structural: “The structure of a real-time auction forces each party to use only a small amount of signal.” This constraint isn’t a bug that can be patched — it’s the foundational architecture of RTB. Buyers see a limited bid request; sellers see only the winning bid. Neither side can share the full picture of intent, audience quality, or contextual relevance within the 100ms window.

The current industry workarounds (deal-based packaging, programmatic guarantees, and audience curation) treat symptoms rather than causes, as Stesin notes. They patch over the signal gap manually, and at scale they remain slow, opaque, and labor-intensive.

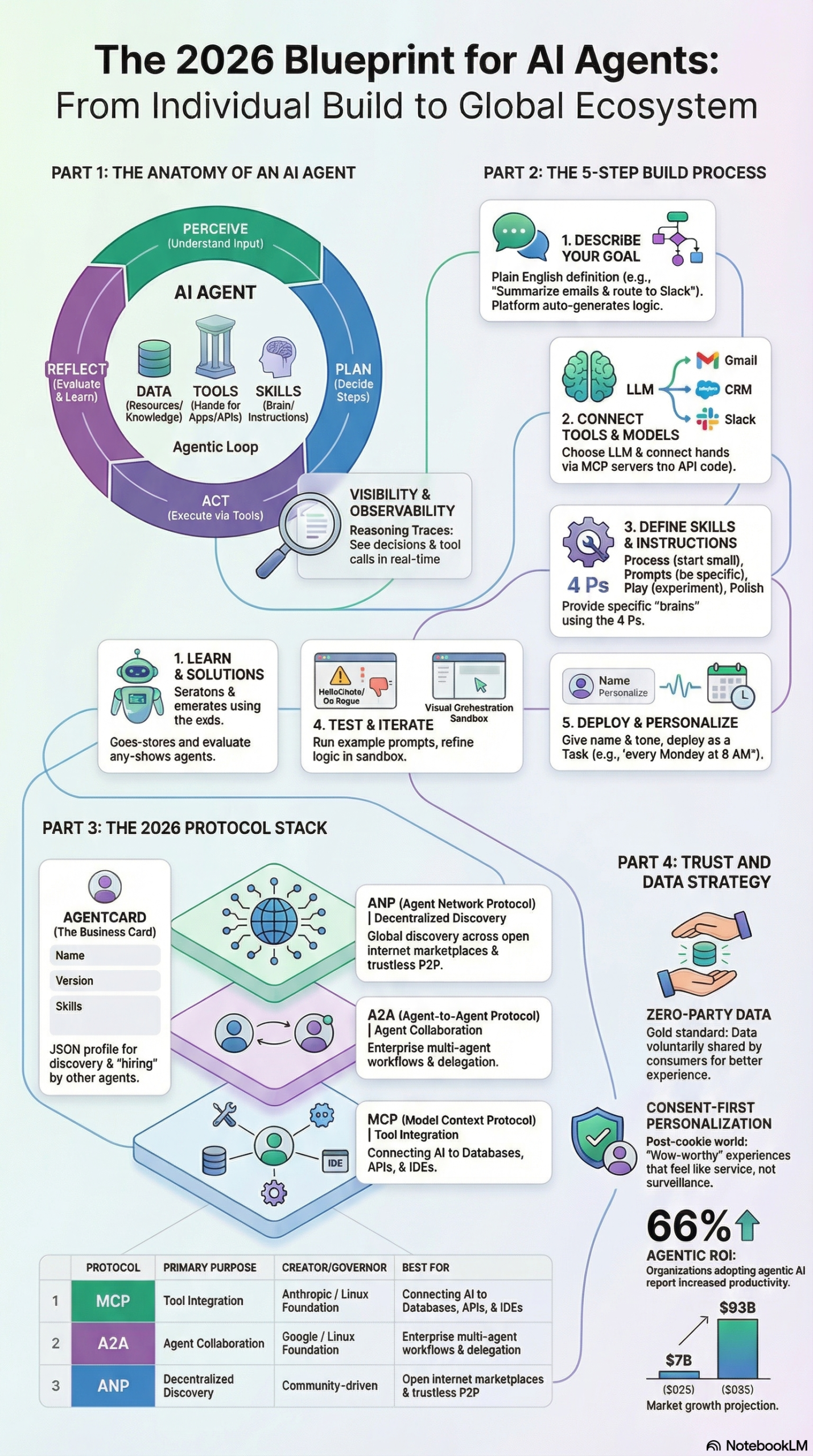

The agentic alternative works differently. As documented in the MarketingAgent research report on agentic AI ecosystems, AI agents operate within an “agentic loop” of four phases: Perceive (ingest data from ad inventory, CRM, or campaign signals), Plan (determine which publisher segments align with campaign objectives), Act (execute buys or RFP responses via connected APIs), and Reflect/Repeat (evaluate performance and optimize future cycles). Applied to advertising, this means a buyer-side agent can autonomously evaluate a publisher’s audience data, match it against campaign KPIs, and negotiate a deal — all without a human sending an email or dialing into a call.
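To make the four phases concrete, here is a minimal Python sketch of one pass through the loop. Everything in it is hypothetical: the segment records, the `score` and `uid2_resolved` fields, and the `min_score` threshold stand in for real inventory data and campaign KPIs, and the "Act" step only records a decision instead of calling a buying API.

```python
from dataclasses import dataclass, field


@dataclass
class LoopState:
    # Hypothetical tunable: the minimum score a segment needs before the agent acts on it.
    min_score: float = 0.5
    history: list = field(default_factory=list)


def perceive(inventory: list) -> list:
    # Perceive: ingest raw signals; here, keep only identity-resolved segments.
    return [s for s in inventory if s.get("uid2_resolved")]


def plan(segments: list, state: LoopState) -> list:
    # Plan: keep segments that clear the current threshold, best score first.
    candidates = [s for s in segments if s["score"] >= state.min_score]
    return sorted(candidates, key=lambda s: s["score"], reverse=True)


def act(ranked: list, max_buys: int = 3) -> list:
    # Act: a real agent would call a buying or RFP API here; this only records the picks.
    return [s["segment_id"] for s in ranked[:max_buys]]


def reflect(bought: list, outcomes: dict, state: LoopState) -> None:
    # Reflect: if bought segments underperformed, raise the bar for the next cycle.
    if bought:
        hit_rate = sum(outcomes.get(sid, 0) for sid in bought) / len(bought)
        if hit_rate < 0.5:
            state.min_score = min(state.min_score + 0.1, 1.0)
    state.history.append(bought)


def run_cycle(inventory: list, outcomes: dict, state: LoopState) -> list:
    # One Perceive -> Plan -> Act -> Reflect pass; call it repeatedly for the Repeat phase.
    bought = act(plan(perceive(inventory), state))
    reflect(bought, outcomes, state)
    return bought


state = LoopState()
inventory = [
    {"segment_id": "sports-25-44", "score": 0.8, "uid2_resolved": True},
    {"segment_id": "news-general", "score": 0.6, "uid2_resolved": False},
    {"segment_id": "outdoor-fans", "score": 0.55, "uid2_resolved": True},
]
# Made-up outcomes: 1 = the segment met its KPI last cycle, 0 = it did not.
print(run_cycle(inventory, {"sports-25-44": 0, "outdoor-fans": 0}, state))
print(state.min_score)
```

Because both picks missed their KPI in this made-up run, the threshold rises to 0.6, so the next call to `run_cycle` drops `outdoor-fans`. That feedback from Reflect into Plan is what distinguishes a loop from a one-shot automation script.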

The key technical enabler is the Ad Context Protocol (AdCP), a specialized protocol designed for agent-to-agent communication within the programmatic supply chain. The research report identifies AdCP alongside the Model Context Protocol (MCP) and Agent-to-Agent Protocol (A2A) as part of the emerging “Protocol Era” — a standardized layer that ends the N×M integration nightmare where every buyer needed custom code to connect with every publisher.

On the sell side, tools like Optable’s Audience Agent are already translating this concept into deployed infrastructure. RFP responses that previously required days of manual audience analysis can now be turned around in a fraction of the time, according to Optable. Publishers expose their audience data in a machine-readable format; buyer agents query it autonomously; deal terms are returned at programmatic speed without sacrificing the signal depth of a direct deal.

For practitioners, the simplest way to think about agentic advertising: it combines the speed of programmatic with the data richness of a direct insertion order. The 100ms auction is replaced by agent negotiation that can take seconds or minutes rather than milliseconds — but it can carry orders of magnitude more signal in both directions.

Why It Matters

Signal degradation is not a future risk; it is the current state of the programmatic ecosystem. Default restrictions on third-party cookies in browsers such as Safari and Firefox, combined with growing compliance obligations under GDPR and India's Digital Personal Data Protection Act (DPDPA), have removed much of the identity spine that RTB was built on. What remains is a system running on probabilistic guesses, contextual proxies, and increasingly unreliable device graphs.

Agentic advertising matters for three distinct practitioner groups:

For media buyers and agencies, it means reclaiming audience precision without reverting to slow, manual direct buying. A buyer agent can be configured with campaign goals, KPIs, and audience profiles, then deployed to evaluate thousands of publisher segments autonomously. The research report notes that PubMatic’s AgenticOS — an operating system for orchestrating autonomous media execution — has demonstrated campaign setup time reductions of up to 87% and issue resolution time reductions of 70%. Those are not incremental improvements; they are structural changes to how a media team operates.

For publishers, agentic advertising solves the monetization gap created by signal loss. When buyers can’t read your audience accurately, CPMs fall. Publishers who expose clean, machine-readable, identity-resolved data — hashed emails, UID2 identifiers, structured audience taxonomies — become legible to buyer agents and command premium pricing. Those who don’t will continue to be commoditized in the open exchange.

For martech and adtech developers, the emergence of standardized protocols (MCP, A2A, AdCP) means you no longer need to custom-build every buyer-seller integration. The research report describes MCP as a “universal adapter” or “USB port” for AI — connect your tool once, and any agent with MCP support can use it. This is the infrastructure shift that makes agentic advertising scalable beyond a handful of large walled gardens.

One additional dimension that separates agentic advertising from previous automation waves: the role of zero-party data. As the research report documents, zero-party data — information a consumer intentionally and proactively shares with a brand — is emerging as the primary signal layer in a post-cookie world. Unlike first-party data that a brand infers from behavioral observation, zero-party data is explicitly declared: purchase intentions, style preferences, dietary requirements. It is consent-first by design, higher quality than probabilistic data, and — critically — it is the kind of structured, machine-readable signal that agentic buyer systems can actually use. The brands and publishers building zero-party data infrastructure now are positioning themselves for agentic advertising execution at scale.

The Data

The market context behind this shift is significant. The agentic AI market is projected to grow from USD 7.06 billion in 2025 to USD 93.20 billion by 2032, registering a CAGR of 44.6%, according to market research cited in the MarketingAgent research report. Programmatic advertising is one of the largest immediate deployment surfaces for this technology.
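The cited figures are internally consistent. Compound annual growth rate is (end value / start value)^(1 / years) - 1, and 2025 to 2032 spans seven compounding periods; a quick check in Python:

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in USD billions: 7.06 in 2025, 93.20 projected for 2032.
rate = cagr(7.06, 93.20, 2032 - 2025)
print(f"{rate:.1%}")  # 44.6%
```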

Below is a feature comparison of traditional programmatic vs. agentic advertising approaches:

| Dimension | Traditional Programmatic (RTB) | Agentic Advertising |
|---|---|---|
| Transaction Speed | 100 milliseconds | Seconds to minutes (with richer signal) |
| Signal Depth | Limited to bid request fields | Full audience profiles, intent signals, zero-party data |
| Identity Dependency | Third-party cookies, device IDs | Hashed emails, UID2, zero-party data |
| Buyer-Seller Communication | Blind auction | Agent-to-agent protocols (AdCP, A2A) |
| Campaign Setup Time | Hours to days (manual) | Up to 87% faster with AgenticOS (per the research report) |
| Issue Resolution | Manual escalation | Up to 70% faster with autonomous agents (per the research report) |
| Publisher Data Legibility | Opaque to buyers | Machine-readable, structured for agent queries |
| Compliance Architecture | Retroactive consent management | Zero-party data, consent-first by design |
| Scale Requirement | Accelerated compute (NVIDIA-class) for real-time bidding | Accelerated compute for millisecond inference at millions of transactions/sec (per the research report) |
| Human Role | Hands-on campaign execution | Strategic oversight; agents handle execution |

Step-by-Step Tutorial: Deploying Your First Agentic Advertising Workflow

This tutorial walks through the practical architecture of an agentic advertising workflow — from publisher data preparation through buyer agent deployment. It’s designed for practitioners at agencies, DSPs, SSPs, and publisher ad operations teams.

Prerequisites

Before you start, you need:

– A publisher-side audience data asset (first-party or zero-party) in a structured format (CSV, clean room output, or API-accessible)

– Identity resolution in place: hashed emails or UID2 tokens associated with audience segments

– Access to an agent-capable platform (e.g., PubMatic AgenticOS for publishers, or a no-code builder like Gumloop or Vellum for custom agent workflows per the research report)

– Basic familiarity with JSON and API concepts (no coding required for no-code paths)

Phase 1: Prepare Your Data Layer (Publisher Side)

The single most important thing a publisher can do to participate in agentic advertising is make their audience data machine-readable. Per Optable’s framework, three requirements apply:

1. High-quality data. Audit your first-party segments for completeness and recency. Segments built on 90-day-old behavioral data without identity resolution will score poorly when buyer agents evaluate them. Clean your taxonomy — vague labels like “engaged user” need to be replaced with structured descriptors (age range, content affinity categories, purchase stage).

2. Resolved identity. At minimum, your audience segments need to be keyed to hashed emails (SHA-256) or UID2 tokens. UID2 is an open-source, privacy-preserving identifier standard that buyer-side agents can match against without exposing raw PII. If you’re not running UID2 yet, start with your login-authenticated users — even a small identity-resolved segment is more valuable in an agentic auction than a large anonymous pool.

3. Interoperability. Your data must speak a shared language. This means using IAB content taxonomy categories, standard audience segment descriptors, and — ideally — an AdCP-compatible exposure format so buyer agents can query your inventory with a standardized request structure rather than a custom one-off integration.
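As an illustration of requirement 2, here is a minimal sketch of producing a SHA-256 hashed email key with Python's standard library. It applies only basic normalization (trim and lowercase). UID2 defines its own normalization and encoding rules in its documentation, which this sketch does not implement in full, so treat it as a generic hashed-email example rather than a UID2 client.

```python
import hashlib


def normalize_email(raw: str) -> str:
    # Basic normalization only: strip surrounding whitespace and lowercase.
    # UID2 specifies additional rules; check its documentation before production use.
    return raw.strip().lower()


def hashed_email(raw: str) -> str:
    # SHA-256 over the UTF-8 bytes of the normalized address, returned as hex.
    return hashlib.sha256(normalize_email(raw).encode("utf-8")).hexdigest()


a = hashed_email("  Jane.Doe@Example.com ")
b = hashed_email("jane.doe@example.com")
print(a == b, len(a))  # True 64
```

Normalizing before hashing matters: without it, the same person typed two slightly different ways produces two unrelated hashes, and the buyer-side match silently fails.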

Practical step: Export a test segment of 50,000+ users in a format that includes: segment_id, iab_category, uid2_token, recency_days, frequency, cpm_floor. This is the minimum viable data object a buyer agent needs to evaluate a publisher programmatically.
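The export described above can be sketched with Python's `csv` module. The column names come from the practical step; the `SegmentRow` class, the sample values, and the in-memory buffer are illustrative placeholders, and the `uid2_token` values are dummy strings rather than real tokens. `IAB17` is the Sports category in the IAB Content Taxonomy 1.0.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class SegmentRow:
    segment_id: str
    iab_category: str
    uid2_token: str
    recency_days: int
    frequency: int
    cpm_floor: float


def export_segment(rows: list, stream) -> None:
    # One header line, then one line per user-segment membership.
    writer = csv.DictWriter(stream, fieldnames=[f.name for f in fields(SegmentRow)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))


rows = [
    SegmentRow("seg-sports-001", "IAB17", "dummy-token-1", 7, 4, 8.0),
    SegmentRow("seg-sports-001", "IAB17", "dummy-token-2", 12, 2, 8.0),
]
buffer = io.StringIO()
export_segment(rows, buffer)
print(buffer.getvalue().splitlines()[0])
# segment_id,iab_category,uid2_token,recency_days,frequency,cpm_floor
```

In practice you would pass an open file instead of `io.StringIO`; deriving the header from the dataclass keeps the schema and the export from drifting apart.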

Phase 2: Configure Your Publisher-Side Agent (Sell-Side Automation)

If you’re using a platform like PubMatic’s AgenticOS, you’ll configure your sell-side agent through a dashboard interface. For custom deployments, the research report describes a no-code path via platforms like Gumloop or Vellum that use “prompt-to-build” interfaces — you describe the agent’s goal in plain English and the platform handles API wiring.

Step 1 — Define the agent’s goal. Be specific. Example: “When a buyer agent submits an RFP for a campaign targeting 25-44 year old male sports enthusiasts with a CPM floor of $8, respond with the top 3 matching audience segments from our inventory, including reach, index score, and sample creative alignment.” Vague goals produce vague agents.

Step 2 — Connect your data source. Use MCP (Model Context Protocol) to connect the agent to your audience management platform or clean room output. The research report describes MCP as the “universal adapter” layer — most major audience platforms are adding MCP support in 2026. If yours doesn’t have it yet, a simple REST API wrapper will work as an interim bridge.

Step 3 — Define the agent’s output format. The agent’s response to a buyer RFP should return structured JSON, not a human-readable email. Specify fields: segment_name, estimated_reach, cpm_floor, iab_taxonomy, identity_resolution_type, data_recency, match_score. This ensures buyer-side agents can parse and compare your inventory automatically.
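One way to pin down that output contract is a typed record serialized to JSON. The field names are the ones listed in Step 3; the `SegmentOffer` class, the example `identity_resolution_type` values, and the sample numbers are hypothetical.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class SegmentOffer:
    segment_name: str
    estimated_reach: int
    cpm_floor: float
    iab_taxonomy: str
    identity_resolution_type: str  # illustrative values: "uid2" or "hashed_email"
    data_recency: int  # days since the segment was last refreshed
    match_score: float  # 0..1 fit against the buyer's brief


def to_rfp_response(offers: list) -> str:
    # Sort best match first so a buyer agent can simply take the head of the list.
    ranked = sorted(offers, key=lambda o: o.match_score, reverse=True)
    return json.dumps({"offers": [asdict(o) for o in ranked]}, indent=2)


offers = [
    SegmentOffer("Weekend Sports Fans", 420_000, 8.5, "IAB17", "uid2", 7, 0.82),
    SegmentOffer("Fantasy League Players", 150_000, 9.0, "IAB17", "hashed_email", 14, 0.91),
]
print(to_rfp_response(offers))
```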

Step 4 — Set guardrails. Define what the agent cannot do without human approval. Recommended: require human sign-off on any deal above $50K, any exclusivity arrangement, or any data-sharing request that falls outside your standard DPA terms. The research report is clear that humans move upstream in agentic systems — they define goals, set constraints, and review high-stakes decisions. They don’t disappear.
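The guardrails in Step 4 can be expressed as a pre-execution check. The $50K threshold and the three conditions come from the recommendation; the `Deal` fields and the set of approved DPA uses are hypothetical stand-ins for however your DPA terms are actually recorded.

```python
from dataclasses import dataclass

APPROVAL_THRESHOLD_USD = 50_000


@dataclass
class Deal:
    value_usd: float
    exclusive: bool
    data_uses: frozenset  # data-sharing purposes the buyer is requesting


def approval_reasons(deal: Deal, approved_dpa_uses: frozenset) -> list:
    """Return why a human must sign off; an empty list means the agent may proceed."""
    reasons = []
    if deal.value_usd > APPROVAL_THRESHOLD_USD:
        reasons.append("deal value above threshold")
    if deal.exclusive:
        reasons.append("exclusivity requested")
    outside_dpa = deal.data_uses - approved_dpa_uses
    if outside_dpa:
        reasons.append(f"data use outside DPA: {sorted(outside_dpa)}")
    return reasons


standard_uses = frozenset({"activation", "measurement"})
print(approval_reasons(Deal(12_000, False, frozenset({"activation"})), standard_uses))  # []
print(approval_reasons(Deal(75_000, True, frozenset({"modeling"})), standard_uses))
```

Returning reasons rather than a bare boolean gives the human reviewer the context for why the agent paused.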

Phase 3: Deploy the Buyer-Side Agent

On the buy side, an agentic workflow begins with translating campaign briefs into structured queries that can be dispatched to multiple publisher agents simultaneously.

Step 1 — Encode your campaign brief as structured data. Take your media plan and extract the machine-readable parameters: target_audience, iab_category_list, geo, flight_dates, budget, kpi_primary (e.g., CPA, ROAS, viewability), cpm_ceiling. This becomes the input to your buyer agent.
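A sketch of that encoding as a Python dataclass with basic validation. The field names mirror the parameters in Step 1; splitting `flight_dates` into start and end dates, the allowed KPI list (taken from the examples given), and the sample values are assumptions.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass
class CampaignBrief:
    target_audience: str
    iab_category_list: list
    geo: list
    flight_start: date
    flight_end: date
    budget: float
    kpi_primary: str  # assumed to be one of "CPA", "ROAS", "viewability"
    cpm_ceiling: float

    def validate(self) -> None:
        # Reject briefs an agent could not act on sensibly.
        if self.flight_end < self.flight_start:
            raise ValueError("flight_end is before flight_start")
        if self.budget <= 0 or self.cpm_ceiling <= 0:
            raise ValueError("budget and cpm_ceiling must be positive")
        if self.kpi_primary not in {"CPA", "ROAS", "viewability"}:
            raise ValueError(f"unsupported KPI: {self.kpi_primary}")

    def to_json(self) -> str:
        # Serialize with ISO dates so any agent can parse the flight window.
        payload = asdict(self)
        payload["flight_dates"] = [self.flight_start.isoformat(), self.flight_end.isoformat()]
        del payload["flight_start"], payload["flight_end"]
        return json.dumps(payload)


brief = CampaignBrief(
    "males 25-44, sports enthusiasts", ["IAB17"], ["US"],
    date(2026, 3, 1), date(2026, 3, 31), 100_000.0, "CPA", 12.0,
)
brief.validate()
print(brief.to_json())
```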

Step 2 — Configure the buyer agent’s discovery scope. Using A2A (Agent-to-Agent Protocol), your buyer agent can discover publisher agents that have registered AgentCards — the machine-readable JSON “business cards” described in the research report that declare each agent’s identity, capabilities, and skills. Query the AgentCard registry for publishers with sports content affinity, mid-tier CPM ranges, and UID2 support.
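A minimal sketch of the discovery filter, assuming the AgentCards have already been fetched into a list. The card shape is a trimmed version of an A2A AgentCard (name, url, version, skills with tags); the tag vocabulary (`iab:IAB17`, `identity:uid2`), the `x-cpm-floor` field, the URLs, and the registry contents are all hypothetical.

```python
import json

REGISTRY_JSON = """
[
  {
    "name": "Sports Daily Publisher Agent",
    "url": "https://agents.example.com/sports-daily",
    "version": "1.0.0",
    "skills": [{"id": "rfp-response", "name": "RFP response", "tags": ["iab:IAB17", "identity:uid2"]}],
    "x-cpm-floor": 8.0
  },
  {
    "name": "Lifestyle Weekly Agent",
    "url": "https://agents.example.com/lifestyle-weekly",
    "version": "1.0.0",
    "skills": [{"id": "rfp-response", "name": "RFP response", "tags": ["iab:IAB18", "identity:hashed_email"]}],
    "x-cpm-floor": 6.0
  }
]
"""


def skill_tags(card: dict) -> set:
    # Collect every tag declared across the card's skills.
    return {tag for skill in card.get("skills", []) for tag in skill.get("tags", [])}


def discover(cards: list, required_tags: set, cpm_ceiling: float) -> list:
    # Keep cards that declare all required tags and whose CPM floor fits under our ceiling.
    # "x-cpm-floor" is a hypothetical extension field, not part of the A2A AgentCard.
    return [
        card for card in cards
        if required_tags <= skill_tags(card) and card.get("x-cpm-floor", 0.0) <= cpm_ceiling
    ]


cards = json.loads(REGISTRY_JSON)
print([c["name"] for c in discover(cards, {"iab:IAB17", "identity:uid2"}, cpm_ceiling=12.0)])
# ['Sports Daily Publisher Agent']
```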

Step 3 — Issue the RFP autonomously. The buyer agent dispatches structured RFP requests to matching publisher agents via AdCP. Each publisher agent returns a structured response (as configured in Phase 2). The buyer agent ranks responses against campaign KPIs and surfaces the top three recommendations for human review.
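The fan-out and ranking in Step 3 can be sketched with `asyncio`. The publisher agents here are simulated coroutines rather than real AdCP or A2A calls, and the scoring weights (70% audience match, 30% price headroom under the CPM ceiling) are a hypothetical stand-in for your actual KPI model.

```python
import asyncio


async def fake_publisher_agent(name: str, cpm: float, reach: int, match: float, delay: float) -> dict:
    # Stand-in for a network call to a publisher agent.
    await asyncio.sleep(delay)
    return {"publisher": name, "cpm": cpm, "reach": reach, "match_score": match}


def score(response: dict, cpm_ceiling: float) -> float:
    # Hypothetical KPI score for a response already under the ceiling:
    # reward audience match, plus a smaller reward for price headroom.
    price_headroom = 1 - response["cpm"] / cpm_ceiling
    return 0.7 * response["match_score"] + 0.3 * price_headroom


async def run_rfp(pending_responses: list, cpm_ceiling: float, top_n: int = 3) -> list:
    # Query every publisher agent concurrently; drop any that raised an error.
    results = await asyncio.gather(*pending_responses, return_exceptions=True)
    responses = [r for r in results if isinstance(r, dict)]
    eligible = [r for r in responses if r["cpm"] <= cpm_ceiling]
    ranked = sorted(eligible, key=lambda r: score(r, cpm_ceiling), reverse=True)
    return ranked[:top_n]  # shortlist surfaced for human review


pending = [
    fake_publisher_agent("A", 9.0, 300_000, 0.80, 0.01),
    fake_publisher_agent("B", 14.0, 900_000, 0.95, 0.02),  # over the ceiling
    fake_publisher_agent("C", 6.0, 200_000, 0.70, 0.01),
    fake_publisher_agent("D", 11.0, 500_000, 0.90, 0.03),
    fake_publisher_agent("E", 7.5, 100_000, 0.60, 0.01),
]
shortlist = asyncio.run(run_rfp(pending, cpm_ceiling=12.0))
print([r["publisher"] for r in shortlist])  # ['D', 'C', 'A']
```

Because the requests run concurrently, the total wait is roughly the slowest agent's response time rather than the sum of all of them, which is what makes querying dozens of publishers per RFP practical.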

Step 4 — Human review and activation. This is where the practitioner re-enters the loop. Review the agent’s ranked recommendations: check reach vs. budget efficiency, verify the identity resolution type (UID2 vs. hashed email), and confirm that the publisher’s content environment aligns with brand safety requirements. Approve the deal; the agent handles the IO execution.

Step 5 — Monitor and reflect. Configure the agent to report on campaign delivery daily: spend pace, CPM actuals vs. floor, KPI performance, and segment match score. The agentic loop’s “Reflect” phase means the agent uses this feedback to adjust future RFP parameters — over time, it learns which publisher data signals actually correlate with your KPI outcomes.

Expected Outcomes

A properly deployed agentic advertising workflow should deliver:

– RFP response cycles reduced from days to hours or minutes

– Improved audience signal quality by transacting on identity-resolved, zero-party data rather than probabilistic cookie-derived segments

– Campaign setup time reduction of up to 87% at scale (per PubMatic AgenticOS data cited in the research report)

– A direct feedback loop between campaign performance and future media buying parameters — eliminating the manual reporting-to-optimization cycle

Real-World Use Cases

Use Case 1: Independent Publisher Competing Against Walled Gardens

Scenario: A mid-size lifestyle publisher with 4 million monthly unique visitors struggles to compete with Facebook and Google in direct deals because buyers can’t easily evaluate their audience quality.

Implementation: The publisher implements UID2 for their authenticated newsletter subscribers (240K identified users), structures segments by content affinity category (home/garden, wellness, sustainable living), and deploys a sell-side Audience Agent via Optable that can respond to buyer RFPs autonomously. They register an AgentCard declaring their segment capabilities.

Expected Outcome: Buyer agents evaluating sustainable living campaigns can now query this publisher’s inventory alongside platform inventory, compare CPM efficiency and identity resolution quality, and execute a deal without a human sales call. The publisher’s legible, identity-resolved inventory commands a CPM premium over anonymous open-exchange inventory.

Use Case 2: Performance Advertiser Scaling CTV Campaigns

Scenario: A direct-to-consumer brand wants to scale into Connected TV as the channel overtakes search for performance. Per the research report, CTV is expected to become the dominant performance channel, with AI agents enabling millions of small businesses to produce and optimize TV ads at low cost.

Implementation: The brand deploys a buyer agent configured with their customer lookalike profile (based on zero-party data from a product quiz — purchase intent, household income proxy, content consumption preferences). The agent continuously queries CTV publisher inventory, matches against lookalike parameters, and optimizes bid levels based on post-view conversion data fed back into the agentic loop.

Expected Outcome: The brand reaches audiences on premium CTV inventory with the targeting precision previously only available on walled garden platforms, without requiring a dedicated programmatic trader managing the campaign daily.

Use Case 3: Agency Automating Multi-Publisher Deal Negotiations

Scenario: A mid-size media agency manages 40 direct publisher relationships. Each quarterly deal cycle requires analysts to manually compile RFPs, follow up on inventory proposals, and reconcile CPM offers — a process that consumes 3-4 weeks per buyer per cycle.

Implementation: The agency deploys a buyer-side agent connected to their DSP and DMP via MCP. The agent is trained on historical deal terms and KPI benchmarks. Each quarter, it autonomously dispatches RFPs to all 40 publishers, collects structured responses, scores them against current campaign KPIs, and presents a ranked shortlist to the human buyer for approval.

Expected Outcome: The 3-4 week deal cycle collapses to 2-3 days. Buyers shift time from administrative negotiation to strategic planning and creative oversight — exactly the “humans move upstream” model described in the research report.

Use Case 4: Data Clean Room Operator Enabling Agentic Matching

Scenario: A retail media network runs a data clean room where CPG brands can match their customer data against the retailer’s purchase data. Currently, every match requires manual configuration by a data engineer.

Implementation: The clean room operator exposes a sell-side agent via AdCP that accepts structured audience match requests. A CPG brand’s buyer agent submits a match request specifying: target segment (cereal buyers, 25-54), desired reach, and identity key (UID2). The clean room agent executes the match, returns reach estimates and a segment activation package — all without manual intervention.

Expected Outcome: Match time drops from days to minutes. The clean room becomes a real-time programmatic data layer rather than a batch analytics environment, dramatically increasing its utility for performance advertisers.
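The match step in this use case boils down to an identifier overlap. A minimal sketch, assuming both sides' identifiers are already tokens of the same kind: the `MatchRequest` fields mirror the request described above, while the minimum-audience threshold, function names, and dummy tokens are hypothetical. A production clean room computes the overlap inside a privacy-controlled environment so neither party sees the other's raw identifiers; this sketch simply uses Python sets in one process and stops at the reach estimate, leaving the activation package out.

```python
from dataclasses import dataclass


@dataclass
class MatchRequest:
    target_segment: str  # e.g. "cereal_buyers_25_54"
    desired_reach: int
    identity_key: str = "uid2"


MIN_AUDIENCE_SIZE = 3  # hypothetical privacy threshold; real clean rooms set their own


def execute_match(request: MatchRequest, brand_ids: set, retailer_segments: dict) -> dict:
    # Overlap between the brand's identifiers and the retailer's segment members.
    segment_ids = retailer_segments.get(request.target_segment, set())
    overlap = brand_ids & segment_ids
    if len(overlap) < MIN_AUDIENCE_SIZE:
        # Below the threshold, report no reach rather than a small, re-identifiable count.
        return {"status": "below_threshold", "matched_reach": 0}
    return {
        "status": "ok",
        "matched_reach": len(overlap),
        "meets_desired_reach": len(overlap) >= request.desired_reach,
        "identity_key": request.identity_key,
    }


brand_ids = {"t1", "t2", "t3", "t4", "t9"}  # dummy tokens from the CPG brand
retailer_segments = {"cereal_buyers_25_54": {"t1", "t2", "t3", "t4", "t5", "t6"}}
request = MatchRequest("cereal_buyers_25_54", desired_reach=10)
print(execute_match(request, brand_ids, retailer_segments))
```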

Common Pitfalls

1. Deploying agents before data is machine-readable. The most common failure mode is launching an agentic workflow on top of messy, inconsistently labeled audience data. Per Optable’s framework, publishers must ensure audience data is clean, accessible, and machine-readable before agents can transact on it. If your segment taxonomy is a mix of internal jargon and legacy labels, an agent cannot evaluate it — and neither can a buyer’s matching algorithm. Fix the data layer first.

2. Over-scoping the first agent deployment. The research report explicitly warns against this: “Avoid the pitfall of ‘over-scoping.’ Build a single agent for a repetitive, well-defined task before graduating to complex multi-agent systems.” Trying to build a fully autonomous, end-to-end media buying system on day one is a reliable way to produce an agent that does nothing well. Start with one step: automate RFP response, or automate segment evaluation, not both simultaneously.

3. Removing humans from high-stakes decisions. Agentic systems are not “human-out-of-the-loop.” The research report is direct: “People remain essential for defining goals, shaping strategy, exercising judgment.” Agents that are allowed to execute six-figure buys without human review will make costly mistakes. Define clear escalation thresholds from day one.

4. Neglecting protocol compatibility. If your publisher data platform doesn’t support MCP or your DSP doesn’t support A2A, your agents can’t communicate with the broader ecosystem. Audit your vendor stack for protocol support before building custom integrations that become technical debt the moment the ecosystem standardizes.

5. Confusing zero-party data with first-party data. Zero-party data is explicitly declared by the consumer and requires an active value exchange (a quiz, a preference center, a conversational interface). First-party data is inferred from behavior. The distinction matters for both signal quality and compliance. Agents trained on first-party behavioral data will perform differently — and carry different regulatory risk — than agents trained on zero-party declared preferences.
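Keeping the zero-party/first-party distinction in the data model, rather than in people's heads, is one way to stop the two from blurring. In this minimal sketch, each signal carries a provenance tag and the purposes its consent covers, so an agent can be fed only declared data for a given use. The class names, fields, and purpose strings are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Provenance(Enum):
    ZERO_PARTY = "zero_party"    # explicitly declared by the user (quiz, preference center)
    FIRST_PARTY = "first_party"  # inferred from observed behavior on owned properties


@dataclass(frozen=True)
class Signal:
    user_key: str
    attribute: str
    value: str
    provenance: Provenance
    consent_purposes: frozenset


def usable_signals(signals: list, purpose: str, provenance: Provenance) -> list:
    # Keep only signals of the requested provenance whose consent covers this purpose.
    return [s for s in signals if s.provenance is provenance and purpose in s.consent_purposes]


signals = [
    Signal("u1", "diet", "vegetarian", Provenance.ZERO_PARTY, frozenset({"personalization", "ad_targeting"})),
    Signal("u2", "sports_affinity", "high", Provenance.FIRST_PARTY, frozenset({"ad_targeting"})),
    Signal("u3", "style", "minimalist", Provenance.ZERO_PARTY, frozenset({"personalization"})),
]
declared = usable_signals(signals, "ad_targeting", Provenance.ZERO_PARTY)
print([s.user_key for s in declared])  # ['u1']
```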

Expert Tips

1. Implement layered protocols, not a single stack. The research report recommends using MCP for tool integration, A2A for enterprise agent collaboration, and ANP (Agent Network Protocol) for decentralized discovery at internet scale. Most production deployments will need all three, and they serve different functions. Don’t try to solve everything with one protocol.

2. Use AgentCards as your interoperability foundation. Publisher and buyer agents should register AgentCards — machine-readable JSON files that declare capabilities, skills, and supported protocols. This is how agents discover each other without manual directory lookups. Build your AgentCard template before your first external agent deployment.

3. Prioritize “skill abstraction” over tool-by-tool instruction. Per the research report, rather than instructing your agent on how to execute each API call, provide it with high-level “skills” and goals. A well-designed buyer agent told to “find three audience segments matching this campaign brief and return a ranked shortlist” will outperform one given a step-by-step API script, because it can adapt when data formats vary.

4. Build zero-party data collection into your owned channels now. If you don’t already have an interactive quiz, preference center, or conversational commerce interface collecting declared user intent, start building one. Per the research report, this is the primary driver of signal quality in agentic advertising — buyers running agents will prioritize publishers and brands with declared-intent audience data over those with only behavioral inference.

5. Demand accelerated compute infrastructure. The research report notes that accelerated computing (NVIDIA-class GPU infrastructure) is required to handle the millisecond-level inference needed for millions of advertising transactions per second. If your ad stack is running on standard CPU-based cloud instances, you will hit latency ceilings at scale. Evaluate whether your infrastructure partner has GPU-accelerated inference capacity before scaling agentic workflows into production.
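Tip 2 suggests starting from an AgentCard template. Below is a sketch of a publisher card built as a Python dict and serialized to JSON. The top-level fields loosely follow the A2A AgentCard format (name, description, url, version, capabilities, default input/output modes, skills), but check the current A2A specification for the exact required fields; the URL, skill ID, and `iab:`/`identity:` tag vocabulary are hypothetical. `IAB7` and `IAB10` are Health & Fitness and Home & Garden in the IAB Content Taxonomy 1.0.

```python
import json


def publisher_agent_card(name: str, base_url: str, iab_categories: list, identity_types: list) -> dict:
    # Encode content categories and identity support as skill tags buyers can filter on.
    tags = [f"iab:{c}" for c in iab_categories] + [f"identity:{t}" for t in identity_types]
    return {
        "name": name,
        "description": "Sell-side agent that answers structured audience RFPs.",
        "url": base_url,
        "version": "1.0.0",
        "capabilities": {"streaming": False},
        "defaultInputModes": ["application/json"],
        "defaultOutputModes": ["application/json"],
        "skills": [
            {
                "id": "rfp-response",
                "name": "Audience RFP response",
                "description": "Returns ranked audience segments with reach, CPM floor and identity type.",
                "tags": tags,
            }
        ],
    }


card = publisher_agent_card(
    "Green Living Publisher Agent",
    "https://agents.example.com/green-living",
    ["IAB7", "IAB10"],
    ["uid2", "hashed_email"],
)
print(json.dumps(card, indent=2))
```

A shared, documented tag vocabulary is what lets buyer agents filter cards without custom parsing, so settle it before publishing the first card.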

FAQ

Q: Is agentic advertising only viable for large publishers and enterprise advertisers?

No — and this is one of the more significant aspects of the shift. The research report specifically notes that AI-driven agents will allow “millions of small businesses to produce and optimize TV ads at low cost,” particularly in the CTV channel. No-code platforms like Gumloop and Vellum make it possible for non-technical teams to deploy agents without engineering resources. The barrier to entry for agentic workflows is lower today than the barrier was to self-serve programmatic buying a decade ago.

Q: How does agentic advertising handle brand safety?

Brand safety in agentic workflows is enforced through guardrails defined at the agent configuration stage — not through post-hoc content filtering after a bid is won. Publishers declare content classifications in their AgentCard and audience segment metadata; buyer agents filter against brand safety parameters before issuing an RFP. The Optable framework emphasizes that buyer agents should be able to evaluate publisher inventory without a manual sales call — which means brand safety metadata must be embedded in the data layer, not managed through a separate phone conversation.

Q: What happens to the open exchange in an agentic advertising world?

The open exchange doesn’t disappear, but its role diminishes for premium inventory. Agentic systems will handle the premium, signal-rich direct deal layer more efficiently than the RTB auction can. The open exchange will continue to serve as the clearance layer for remnant inventory. The implication for publishers: more of their revenue will shift toward agent-negotiated deals if they invest in the data infrastructure to support it.

Q: How do I evaluate whether my current audience data is “agent-ready”?

Apply three tests based on the Optable criteria: (1) Can a buyer agent query my segments using structured parameters without a human explanation? (2) Are my segments keyed to a privacy-preserving identity signal (hashed email, UID2) rather than third-party cookies? (3) Do my segment labels correspond to standard IAB taxonomy categories? If you fail any of these tests, your data is not yet agent-ready.
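The three tests can be automated as a per-segment check. In this minimal sketch, the required field names, the accepted identity types, and the small IAB code subset are assumptions; a real check would load the full IAB Content Taxonomy.

```python
REQUIRED_FIELDS = {"segment_id", "iab_category", "identity_type", "recency_days", "cpm_floor"}
PRIVACY_PRESERVING_IDS = {"uid2", "hashed_email"}


def readiness_report(segment: dict, iab_codes: set) -> dict:
    return {
        # Test 1: queryable with structured parameters (all expected fields present).
        "structured": REQUIRED_FIELDS.issubset(segment),
        # Test 2: keyed to a privacy-preserving identity signal, not third-party cookies.
        "privacy_preserving_id": segment.get("identity_type") in PRIVACY_PRESERVING_IDS,
        # Test 3: label maps to a standard IAB taxonomy category.
        "iab_mapped": segment.get("iab_category") in iab_codes,
    }


def is_agent_ready(segment: dict, iab_codes: set) -> bool:
    return all(readiness_report(segment, iab_codes).values())


IAB_SUBSET = {"IAB7", "IAB10", "IAB17"}  # stand-in for the full taxonomy
good = {"segment_id": "s1", "iab_category": "IAB17", "identity_type": "uid2", "recency_days": 7, "cpm_floor": 8.0}
legacy = {"segment_id": "s2", "iab_category": "engaged users", "identity_type": "third_party_cookie"}
print(is_agent_ready(good, IAB_SUBSET))  # True
print(readiness_report(legacy, IAB_SUBSET))
```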

Q: What is the Ad Context Protocol (AdCP) and do I need it?

AdCP is a specialized protocol for agent-to-agent communication within the programmatic supply chain, as documented in the research report. Think of it as the advertising-specific version of A2A — a shared language that buyer and seller agents use to exchange structured RFPs, inventory responses, and deal terms. If you’re building a publisher-side or buyer-side agent that needs to communicate with external counterparts in the ad ecosystem, AdCP support is becoming table stakes. Check whether your SSP, DSP, or audience management platform has announced AdCP compatibility.

Bottom Line

Programmatic signal degradation is not a temporary setback — it is the predictable outcome of building an identity layer on top of third-party cookies that were never designed for this purpose. Agentic advertising addresses the structural problem: it replaces the signal-constrained, 100ms blind auction with agent-mediated negotiation that can carry full audience context, declared intent data, and privacy-preserving identity signals. The tools to deploy this are available today — from AgentCards and AdCP to no-code agent builders and zero-party data collection interfaces. Publishers who make their audience data machine-readable and buyers who configure agents to evaluate inventory autonomously will transact at a level of precision and efficiency that the legacy RTB stack cannot match. The agentic AI market is projected to reach $93.20 billion by 2032 according to the research report — the trajectory is clear, and the practitioners who build this infrastructure in 2026 will not be catching up to it later.
