These brands didn’t guess which game mechanics worked. They tested them.

In gamification, context beats cleverness—and experiments reveal context.

Gamification fails most often not because mechanics are bad, but because assumptions go untested. What motivates runners may bore coffee buyers. What excites first-time users may frustrate loyalists. In 2026, the brands that win with gamification are relentless about experimentation—testing reward timing, difficulty, visuals, social layers, and pacing until the system fits their audience.

This guide lays out a practical A/B testing framework for gamification, grounded in lessons from world-class brands, with concrete test designs you can run immediately.

Executive Summary: Why Testing Is Non-Negotiable

Gamification multiplies outcomes—but also magnifies mistakes. Small design choices can swing results dramatically:

- Reward timing can double completion—or halve it

- Difficulty curves can accelerate onboarding—or spike churn

- Visual framing can lift participation without changing incentives

A/B testing turns gamification from art into applied science. In 2026, mature teams test continuously because no mechanic is universally motivating.

1) The Core Problem: Copy-Paste Gamification Doesn’t Transfer

Teams often borrow mechanics that worked elsewhere:

- Badges from fitness apps

- Leaderboards from games

- Streaks from education tools

But motivation is situational. The same mechanic can perform wildly differently depending on:

- User intent

- Lifecycle stage

- Cultural norms

- Mobile vs desktop context

Testing is how you find fit, not just features.

2) What to A/B Test in Gamification (and What Not To)

High-Impact Variables (Test These)

- Reward timing: immediate vs delayed

- Difficulty: fixed vs adaptive

- Social layer: solo vs team vs leaderboard

- Progress framing: bars vs milestones vs tiers

- Visual language: playful vs premium

Low-Signal Variables (Deprioritize)

- Copy tweaks without behavior change

- Cosmetic animations with no feedback impact

- Rare edge-case rewards

Test what changes behavior, not what just looks different.

3) Designing Gamification Experiments That Actually Work

The Golden Rule

Test one mechanic at a time with a clear behavioral outcome.
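One way to keep each test isolated to a single mechanic is to assign users to variants deterministically, keyed on the experiment. The sketch below is an illustration under stated assumptions, not a prescribed implementation: the function name `assign_variant`, the experiment key, and the variant labels are all hypothetical.

```python
import hashlib


def assign_variant(
    user_id: str,
    experiment: str,
    variants: tuple[str, ...] = ("control", "treatment"),
) -> str:
    """Map a user to a variant, stable across sessions for the same experiment."""
    # Hashing the experiment name together with the user id means the same
    # user can land in different buckets for different experiments, so one
    # test's split does not leak into the next.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# Hypothetical experiment key; the same user always gets the same answer.
variant = assign_variant("user-1042", "reward_timing_v1")
```

Because the assignment is a pure function of the two strings, no lookup table is needed and the split can be recomputed at analysis time.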

A Simple Gamification Test Template

- Hypothesis: What behavior should change and why

- Mechanic: The single element being varied

- Population: Who is included (new users? loyalists?)

- Primary KPI: The one metric that decides the winner

- Guardrails: Churn, complaints, or cost thresholds

If you can’t state the hypothesis in one sentence, don’t run the test.
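The template above can be captured as a small record so that every test is written down the same way before it runs. This is a minimal sketch: the class name, field names, metric names, and threshold values are assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class GamificationTest:
    """One experiment: a single mechanic, one deciding KPI, explicit guardrails."""

    hypothesis: str   # one sentence: what behavior should change and why
    mechanic: str     # the single element being varied
    population: str   # who is included
    primary_kpi: str  # the one metric that decides the winner
    # Guardrail metric name -> maximum acceptable value (illustrative thresholds).
    guardrails: dict[str, float] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Refuse to create a test with any part of the template left blank.
        for name in ("hypothesis", "mechanic", "population", "primary_kpi"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must not be empty")

    def breached_guardrails(self, observed: dict[str, float]) -> list[str]:
        """Return guardrail metrics whose observed value exceeds its limit.

        Metrics missing from `observed` are treated as 0.0, i.e. not breached.
        """
        return [
            metric
            for metric, limit in self.guardrails.items()
            if observed.get(metric, 0.0) > limit
        ]


spec = GamificationTest(
    hypothesis="Immediate rewards raise first-week completion for new users.",
    mechanic="reward timing",
    population="new users",
    primary_kpi="completion_rate",
    guardrails={"churn_rate": 0.04, "reward_cost_per_user": 0.50},
)
breaches = spec.breached_guardrails({"churn_rate": 0.05, "reward_cost_per_user": 0.30})
```

A variant that wins on the primary KPI but shows up in `breaches` would not ship under this scheme.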

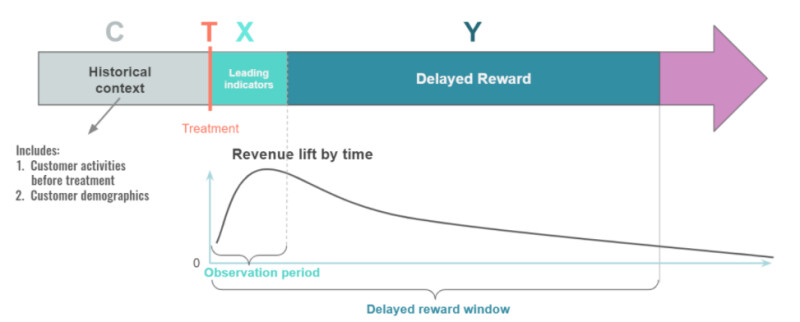

4) Reward Timing: The Highest-Leverage Test

Of the variables above, reward timing tends to produce the largest swings in activation and completion.

| Variant | Expected Impact |
|---|---|
| Immediate reward | Faster activation |
| Delayed reward | Stronger anticipation |
| Milestone reward | Higher completion |
| Surprise reward | Better recall |

Insight: Immediate rewards win early; milestone rewards win long-term.
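Deciding whether a reward-timing variant actually won usually comes down to comparing two rates. Here is a minimal sketch of a two-proportion z-test, assuming completion is a yes/no outcome per user and the samples are large enough for a normal approximation. The function name and the counts are illustrative, not results from any brand discussed here.

```python
from math import erf, sqrt


def two_proportion_z_test(
    done_a: int, n_a: int, done_b: int, n_b: int
) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in completion rates."""
    rate_a = done_a / n_a
    rate_b = done_b / n_b
    # Pooled rate under the null hypothesis that both variants perform the same.
    pooled = (done_a + done_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (rate_b - rate_a) / std_err
    # Standard normal CDF expressed through the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)


# Illustrative counts: immediate reward (A) vs milestone reward (B).
z, p_value = two_proportion_z_test(done_a=200, n_a=1000, done_b=250, n_b=1000)
```

A positive `z` means variant B completed at a higher rate; whether the p-value clears the bar depends on the threshold fixed before the test started.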

5) Difficulty Curves: Where Most Programs Break

Too easy → boredom.

Too hard → frustration.

What to Test

- Fixed difficulty vs adaptive difficulty

- Early friction vs early wins

- Optional challenges vs mandatory tasks

Finding: Adaptive difficulty tends to improve completion, but only when users are told how and why the difficulty changes.

6) Social Mechanics: When Competition Helps (and Hurts)

Social layers amplify engagement—until they alienate.

Smart Tests

- Global leaderboard vs tiered leaderboards

- Solo challenges vs team challenges

- Public ranking vs relative ranking (“You’re #4 of 18”)

Rule: Test social mechanics by segment, not globally.
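To see why a global readout can mislead, break the primary KPI down by segment before comparing variants. The sketch below uses made-up records and hypothetical segment names ("competitive", "casual") purely to illustrate the mechanics.

```python
from collections import defaultdict


def completion_by_segment(records):
    """records: iterable of (segment, variant, completed) tuples.

    Returns {(segment, variant): completion rate}.
    """
    counts = defaultdict(lambda: [0, 0])  # key -> [completed, total]
    for segment, variant, completed in records:
        cell = counts[(segment, variant)]
        cell[0] += int(completed)
        cell[1] += 1
    return {key: done / total for key, (done, total) in counts.items()}


def make(segment, variant, wins, losses):
    """Build illustrative records: `wins` completions and `losses` drop-offs."""
    return [(segment, variant, True)] * wins + [(segment, variant, False)] * losses


# Made-up data, not a real result.
records = (
    make("competitive", "leaderboard", 8, 2)
    + make("competitive", "solo", 5, 5)
    + make("casual", "leaderboard", 3, 7)
    + make("casual", "solo", 6, 4)
)
rates = completion_by_segment(records)

# The same records pooled across segments, ignoring who the users are.
pooled = completion_by_segment(("all", variant, done) for _, variant, done in records)
```

In this toy data the pooled rates for the two variants are identical, while the per-segment rates point in opposite directions: exactly the pattern a global test would miss.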

7) Case Studies: What the Best Brands Tested (and Learned)

Nike

Nike tested:

- Badge-based motivation vs social competition

- Personal goals vs public rankings

Result:

Personal progress framing outperformed leaderboards for most users; competitive layers worked best for opt-in segments.

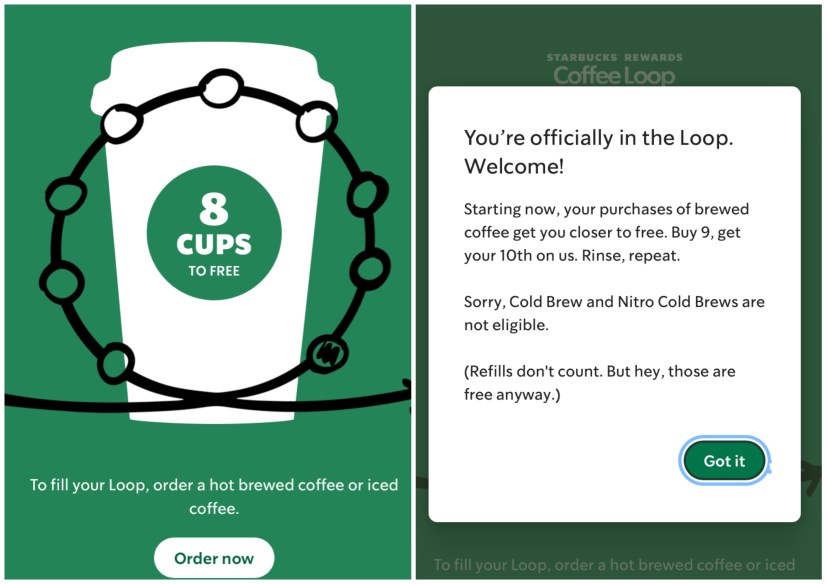

Starbucks

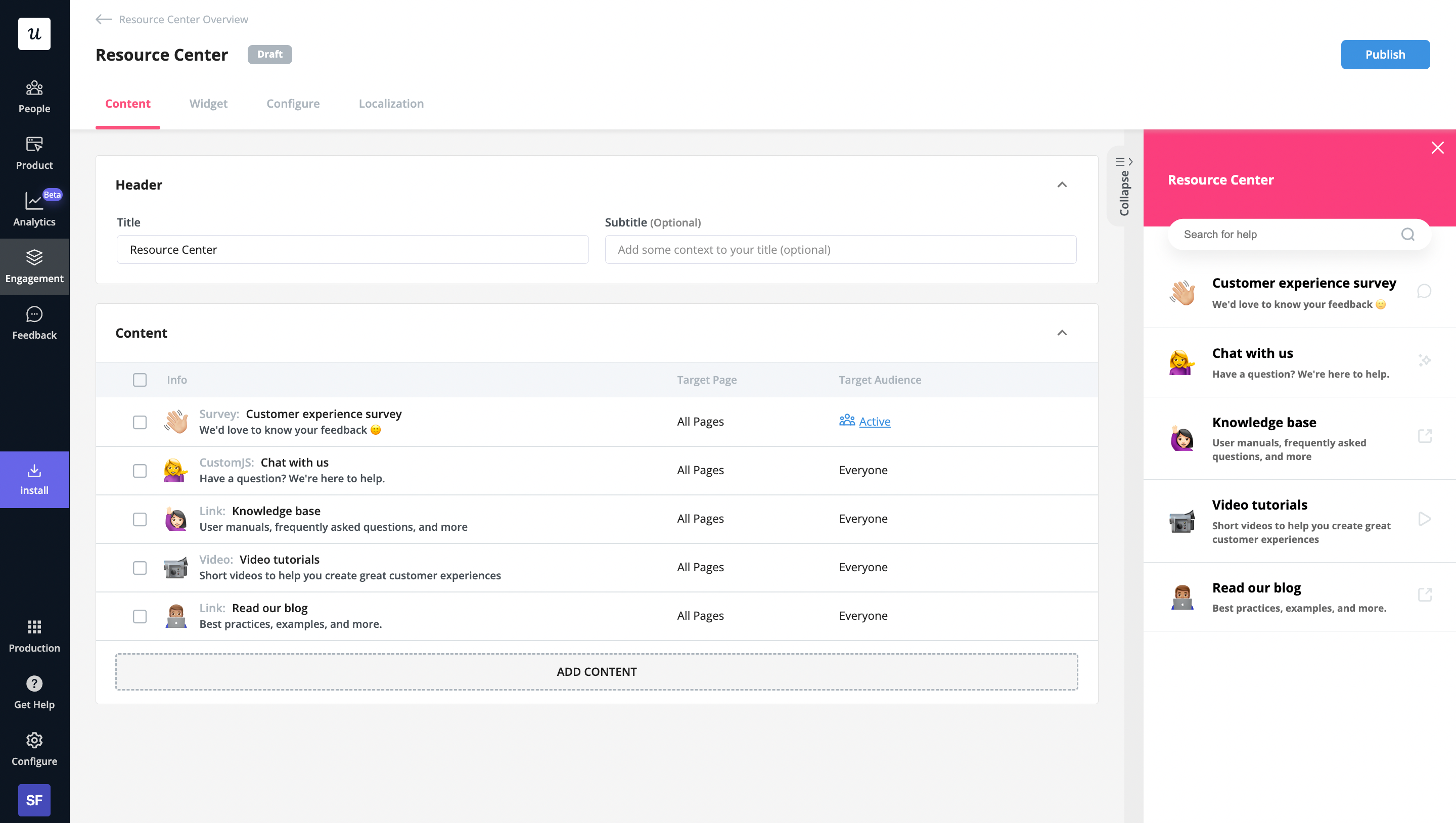

Starbucks tested:

- Star accumulation rates

- Reward thresholds

- Visual progress cues

Result:

Small changes in star pacing significantly altered visit frequency—without changing rewards.

McDonald’s Monopoly

McDonald’s tested:

- Prize frequency

- Instant wins vs delayed reveals

Result:

Frequent low-value wins sustained participation better than rare high-value prizes.

8) Speed Matters: Iteration Beats Perfection

Gamification experiments don’t need long runways.

Best Practices

- Short test windows (1–2 weeks)

- Clear stop conditions

- Rapid iteration cycles

One study found gamified tutorials made users 135% faster—but only after multiple iterations refined friction points.
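Whether a one-to-two-week window is enough depends on traffic and on the size of the effect worth detecting. A rough sketch of the standard sample-size approximation for comparing two rates follows; the function names, the default significance level and power, and the example traffic figure are assumptions for illustration.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(
    baseline: float, min_lift: float, alpha: float = 0.05, power: float = 0.8
) -> int:
    """Approximate users needed per variant to detect an absolute lift.

    baseline: current rate (e.g. 0.20); min_lift: absolute change to detect
    (e.g. 0.05 for 20% -> 25%). Two-sided test, normal approximation.
    """
    p1, p2 = baseline, baseline + min_lift
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / min_lift**2)


def days_needed(per_variant: int, variants: int, eligible_users_per_day: int) -> int:
    """Days of traffic required to fill every variant."""
    return ceil(per_variant * variants / eligible_users_per_day)


n = sample_size_per_variant(baseline=0.20, min_lift=0.05)
# Hypothetical traffic: 400 eligible users per day, two variants.
days = days_needed(n, variants=2, eligible_users_per_day=400)
```

If `days` comes out far beyond the planned window, the options are a larger minimum lift, fewer variants, or a broader population, all decided before the test starts rather than after.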

9) Measuring the Right Outcomes

Each test should ladder to business impact.

| Test Type | Primary KPI |
|---|---|
| Onboarding game | Time-to-value |
| Loyalty mechanic | Return frequency |
| Reward test | Conversion lift |
| Social layer | Participation depth |
| Difficulty tuning | Completion rate |

If a test doesn’t move a core KPI, it’s noise.

10) Avoiding Common Testing Pitfalls

- Over-testing: too many variants split traffic and dilute statistical power

- Under-segmenting: averages hide winners

- Ignoring cost: rewards affect margin

- Stopping early: novelty spikes fade, so let a test run its planned window before calling a winner

Gamification requires patience plus rigor.
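The cost pitfall is easy to check with simple arithmetic: a variant can lift conversion and still lose money once rewards are paid out. A small sketch with made-up numbers follows; the function name and all figures are hypothetical.

```python
def net_value_per_user(
    conversion_rate: float,
    margin_per_conversion: float,
    reward_cost_per_user: float,
) -> float:
    """Expected margin per exposed user after paying for rewards."""
    return conversion_rate * margin_per_conversion - reward_cost_per_user


# Illustrative figures only: the treatment converts better but hands out rewards.
control = net_value_per_user(0.10, margin_per_conversion=20.0, reward_cost_per_user=0.0)
treatment = net_value_per_user(0.12, margin_per_conversion=20.0, reward_cost_per_user=0.50)
treatment_wins_on_margin = treatment > control
```

With these inputs the treatment has the higher conversion rate but the lower net value per user, which is why reward cost belongs among the guardrails.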

11) Building a Continuous Testing Culture

The strongest programs treat gamification as a living system:

- Monthly experiment backlog

- Clear owner for insights

- Shared learnings across teams

Testing isn’t a phase—it’s the operating model.

Final Takeaway

You can’t intuit motivation.

In 2026, the brands that win won’t ask:

“Which mechanic should we use?”

They’ll ask:

“What did our last test teach us?”

Because in gamification, assumptions are expensive—and experiments are profitable.
