This Week in AI: Google’s Product Blitz, Anthropic’s Leak, and the Open-Source Memory Race

A single week produced more deployable AI news than most months: Anthropic shook up its pricing model and accidentally revealed how its agents remember, Google bundled video and email AI into tools you already pay for, and a viral tweet from Andrej Karpathy reframed how you should think about knowledge retrieval entirely. Work through this roundup and you’ll know which releases deserve immediate attention, which to watch, and how one leaked source file is already reshaping open-source tooling.

-

Anthropic announced Claude Mythos, a flagship model withheld from public release on safety grounds. Strong benchmarks are expected given Claude’s track record, but the more consequential question is what a substantially more capable agentic model does to every workflow currently built on top of Claude.

-

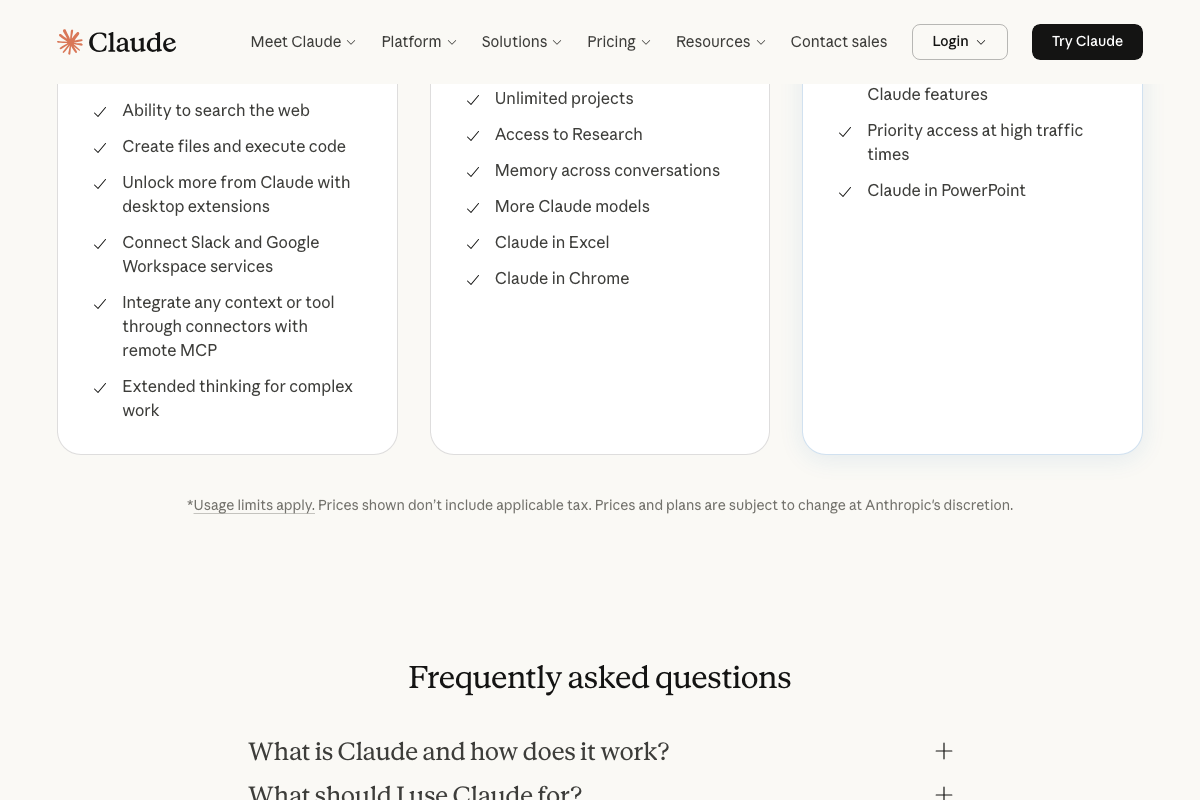

Anthropic cut off third-party agent access to standard Claude subscriptions last Sunday. Developers running workflows through OpenClaw or similar platforms on a $200/month plan now face API costs of $1,000–$3,000/month depending on usage.
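The size of that jump is easier to see as a multiplier. The short calculation below uses only the two figures quoted above; the function name is made up for illustration.

```python
# Figures quoted in the paragraph above.
SUBSCRIPTION_USD = 200          # flat monthly plan price
API_RANGE_USD = (1_000, 3_000)  # reported monthly API cost range


def cost_multiplier(api_cost: float, subscription: float = SUBSCRIPTION_USD) -> float:
    """Return how many times more the API route costs than the flat plan."""
    return api_cost / subscription


low, high = (cost_multiplier(cost) for cost in API_RANGE_USD)
print(f"API billing is {low:.0f}x to {high:.0f}x the subscription price")
```

On those numbers, the same workload costs roughly 5 to 15 times more once it moves to metered API billing.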

-

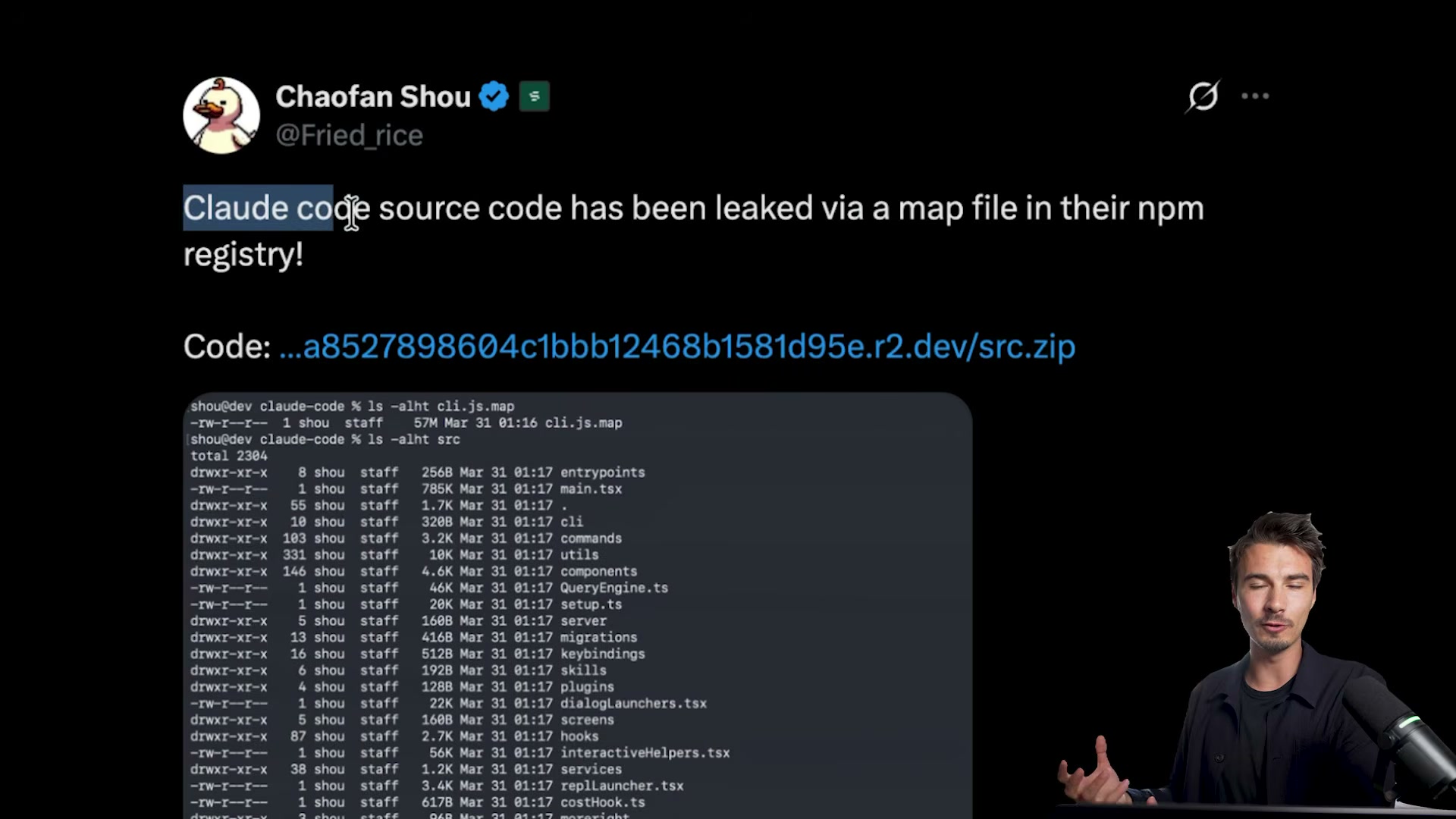

The Claude Code source code leak was real — not an April Fool’s joke, as initially misreported. A map file in Claude Code’s npm package exposed the full internal directory structure, including modules for keybindings, skills, plugins, and hooks.
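To see why a single map file can reveal this much: a version 3 source map is a JSON document whose `sources` array lists the original file paths. The sketch below reads that array and extracts the top-level module directories. The sample map content is hand-written for illustration and is not taken from any real package; the file names in it are hypothetical.

```python
import json
from pathlib import PurePosixPath

# Hypothetical, hand-written map content for illustration only.
SAMPLE_MAP = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": [
        "../src/keybindings/index.ts",
        "../src/skills/loader.ts",
        "../src/plugins/registry.ts",
        "../src/hooks/run.ts",
    ],
    "mappings": "",
})


def top_level_modules(map_text: str, root: str = "src") -> list[str]:
    """List the directory names directly under `root` in a map's sources."""
    sources = json.loads(map_text).get("sources", [])
    modules = set()
    for source in sources:
        parts = PurePosixPath(source).parts
        if root in parts:
            idx = parts.index(root)
            # Require a directory between the root and the file itself.
            if idx + 2 < len(parts):
                modules.add(parts[idx + 1])
    return sorted(modules)


print(top_level_modules(SAMPLE_MAP))
```

No decompilation is involved. The paths are stored in plain text, which is why publishing a map file alongside a bundle exposes the layout of the original source tree.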

-

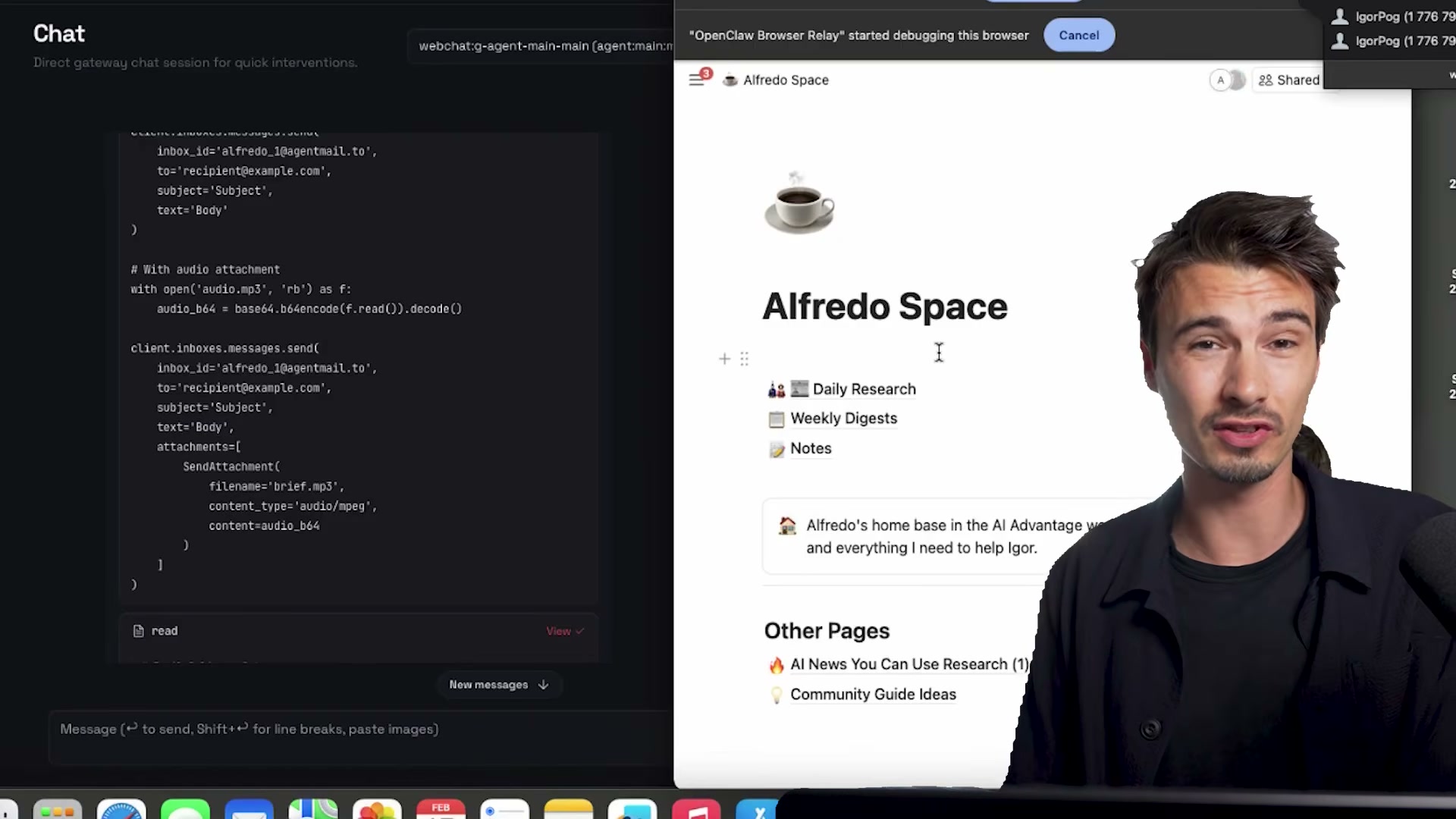

Inside that leaked source, developers found a dreaming function: a cross-session memory mechanism that writes working context to files at session end and reads them back at the start of the next — a deliberate parallel to human memory consolidation during sleep.
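The write-at-end, read-at-start pattern can be sketched in a few lines. This is a minimal illustration of the idea as described above, not the leaked implementation: the file name, the JSON format, and both function names are assumptions.

```python
import json
import tempfile
from pathlib import Path


def save_session(memory_file: Path, context: dict) -> None:
    """At session end, write the working context to disk."""
    memory_file.write_text(json.dumps(context, indent=2), encoding="utf-8")


def load_session(memory_file: Path) -> dict:
    """At session start, read the previous context back (empty if none exists)."""
    if not memory_file.exists():
        return {}
    return json.loads(memory_file.read_text(encoding="utf-8"))


with tempfile.TemporaryDirectory() as tmp:
    memory = Path(tmp) / "memory.json"  # hypothetical file name
    first = load_session(memory)         # no prior session yet
    save_session(memory, {"open_tasks": ["review draft"], "notes": "prefers short replies"})
    second = load_session(memory)        # the next session sees the saved context
```

A real consolidation step would presumably summarize or prune the context before writing it; this sketch only shows the persistence round trip.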

-

OpenClaw shipped a matching dreaming feature within days of the leak going public. Closed-source implementation leaks; open-source alternative absorbs it and ships within a news cycle. Expect this pattern to repeat.

-

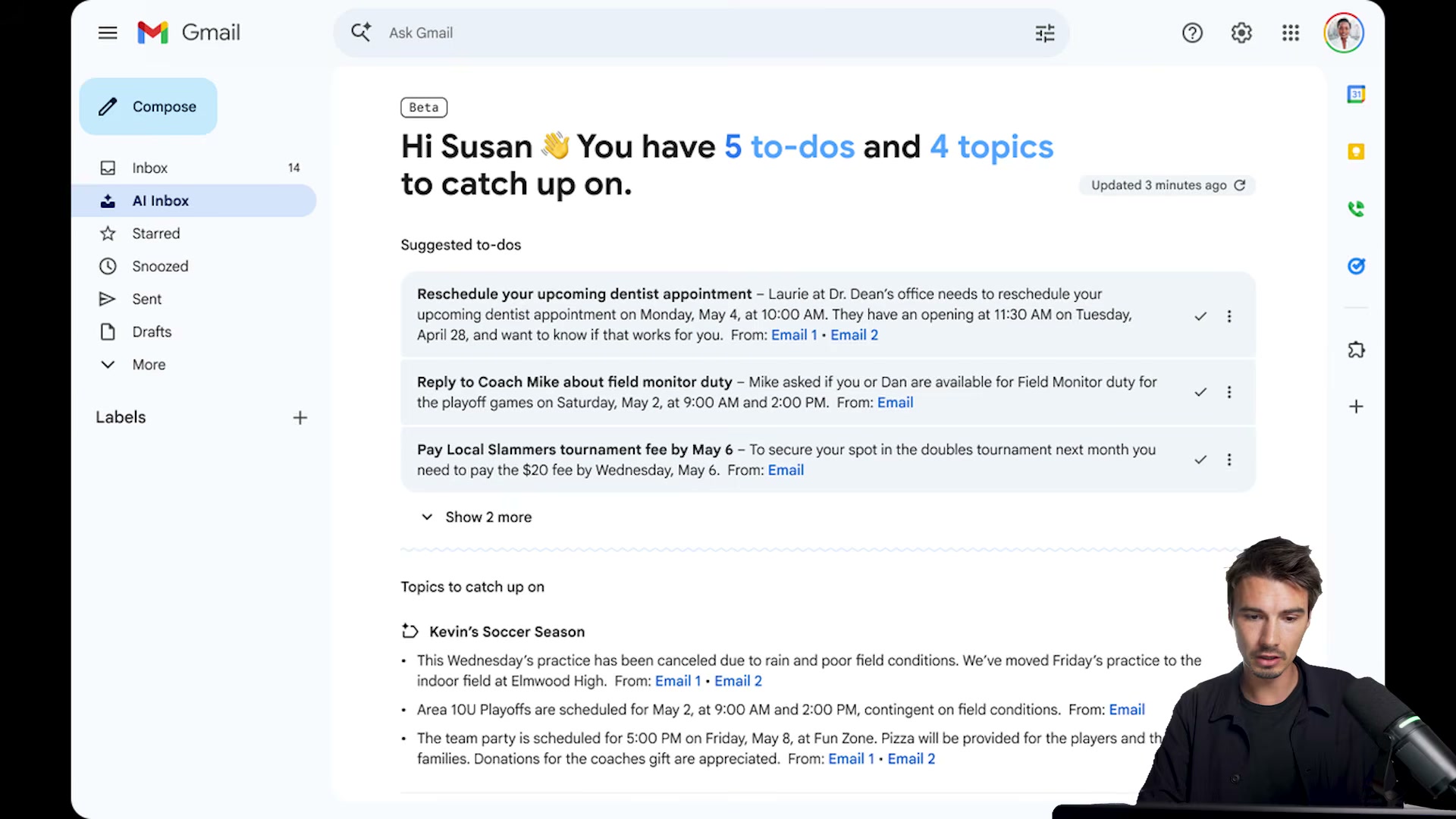

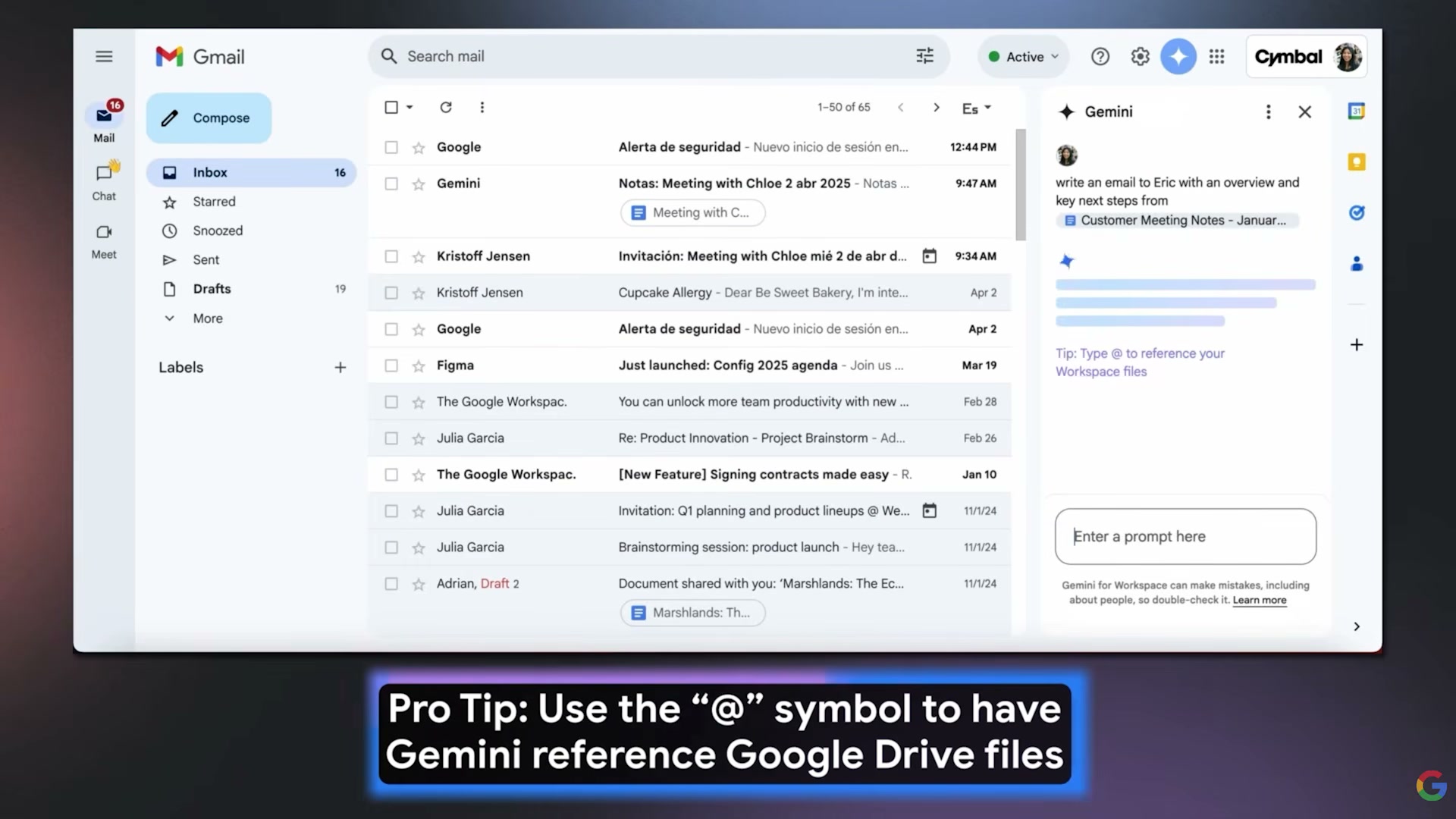

Gmail AI Inbox is rolling out to Google Ultra ($250/month) US subscribers. It prioritizes incoming messages and generates a daily personalized briefing: triage and context first, autonomous replies later. Inside the Gemini side panel, type @ to reference a Google Drive file as inline context.

-

Google Vids now packages AI video generation, avatar selection, custom voiceover, and AI music generation inside Google Workspace. For pro-account users, the entire production pipeline — avatar, narration, background music — runs without leaving the suite.

-

Pabs is an open-source beta that gives AI agents a rendered face for Zoom calls. It requires the PK API and is very early-stage — worth watching, not deploying.

-

Runway Characters is available on the free plan. A live demo produced a voice-driven, real-time-responsive AI character with noticeably better latency and expression fidelity than earlier iterations of this category.

-

Sea Dance 2.0 is now accessible on major platforms after a capacity-constrained launch. On two test prompts (a sloth on a float, a ghost in a corridor), its output ranked at the top of current video generation leaderboards for motion quality and prompt accuracy, with one minor hand-rendering artifact noted.

-

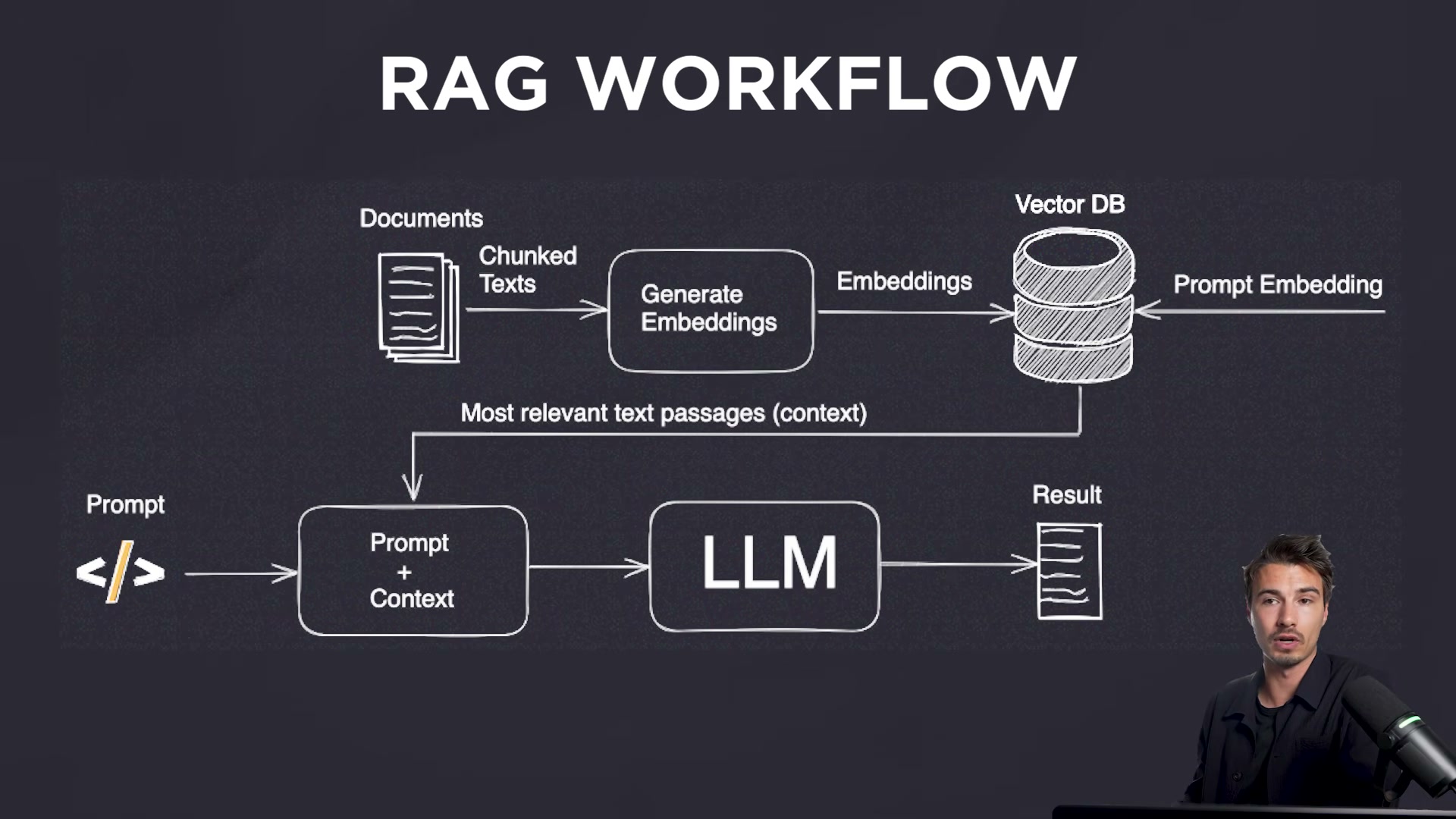

Andrej Karpathy’s LLM Wiki concept argues against embeddings and vector databases for personal knowledge retrieval. Instead, write plain text files organized by topic and let the agent read them all at inference time. Early informal comparisons suggest this approach can substantially outperform traditional RAG pipelines for structured personal knowledge bases.
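A minimal sketch of the read-everything approach, assuming one Markdown file per topic in a single folder. The function name, the `.md` extension, and the sample notes are all illustrative assumptions, not part of Karpathy’s description.

```python
import tempfile
from pathlib import Path


def build_context(wiki_dir: Path) -> str:
    """Concatenate every .md file in wiki_dir, labelled by file name."""
    sections = []
    for path in sorted(wiki_dir.glob("*.md")):
        body = path.read_text(encoding="utf-8").strip()
        sections.append(f"## {path.stem}\n{body}")
    return "\n\n".join(sections)


with tempfile.TemporaryDirectory() as tmp:
    wiki = Path(tmp)
    # Hypothetical sample notes, one file per topic.
    (wiki / "travel.md").write_text("Passport renewal is due in March.\n", encoding="utf-8")
    (wiki / "health.md").write_text("Allergic to penicillin.\n", encoding="utf-8")
    context = build_context(wiki)

print(context)
```

The resulting string is passed to the model as context in full, with no embedding or similarity search step. The trade-off is that the whole wiki has to fit in the model’s context window.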

Warning: this step may differ from current official documentation — see the verified version below.

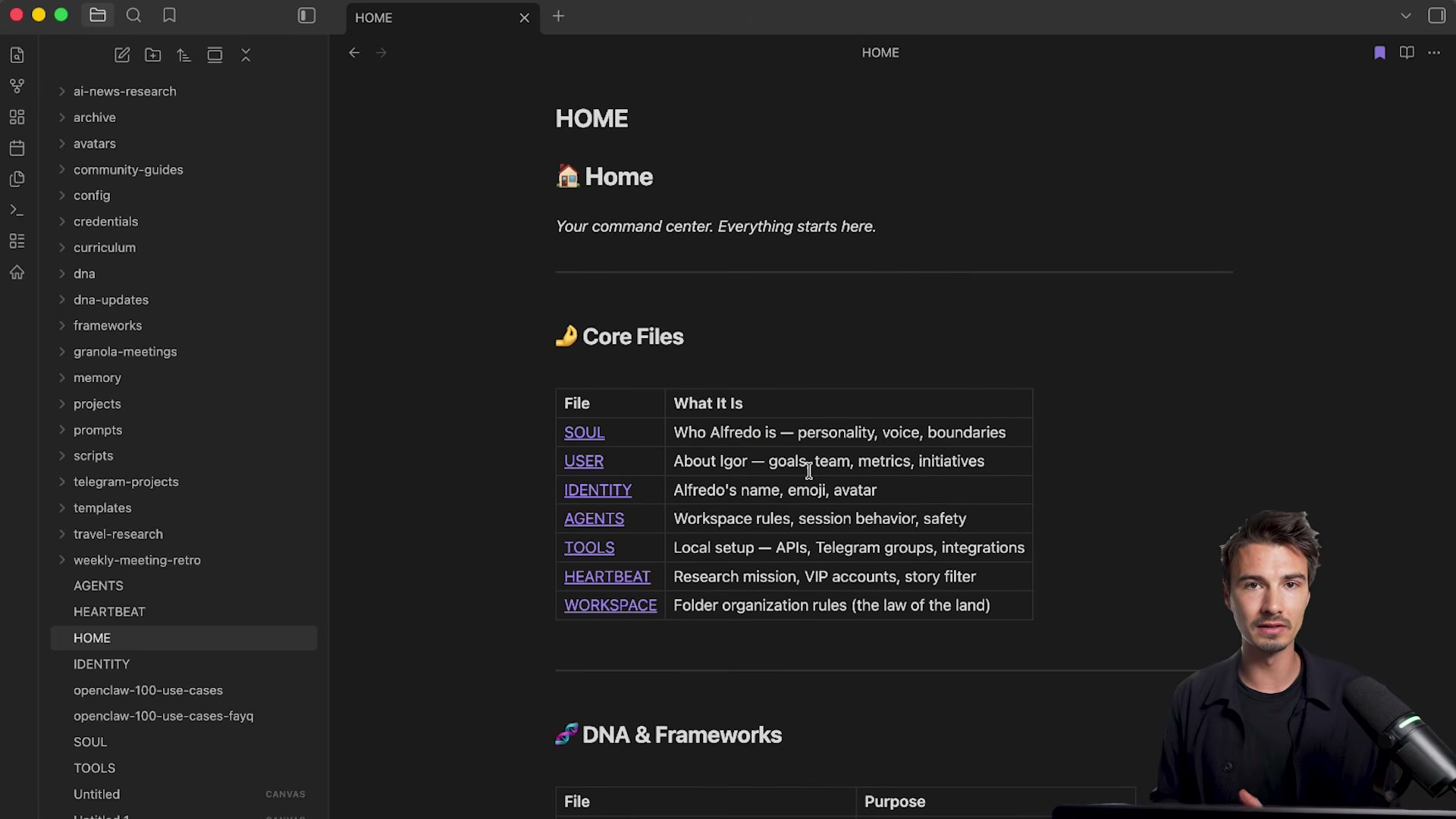

- The presenter’s Obsidian vault, wired to OpenClaw, applies this principle operationally. Named files handle discrete roles — SOUL sets voice and personality, HEARTBEAT defines the research mission, AGENTS governs session rules — and the agent reads all of them as persistent context before each session begins.
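The role-file layout can be sketched as a loader that reads each named file before a session starts. The three role names come from the description above; the `.md` extension, the function names, and the sample file contents are assumptions, and this does not represent OpenClaw’s actual loading logic.

```python
import tempfile
from pathlib import Path

# Role names come from the text; the .md extension is an assumption.
ROLE_FILES = ("SOUL", "HEARTBEAT", "AGENTS")


def load_roles(vault: Path) -> dict[str, str]:
    """Read each role file that exists; leave missing ones empty instead of failing."""
    roles = {}
    for name in ROLE_FILES:
        path = vault / f"{name}.md"
        roles[name] = path.read_text(encoding="utf-8").strip() if path.exists() else ""
    return roles


def missing_roles(roles: dict[str, str]) -> list[str]:
    """Return role names whose file was absent or empty."""
    return [name for name, text in roles.items() if not text]


with tempfile.TemporaryDirectory() as tmp:
    vault = Path(tmp)
    (vault / "SOUL.md").write_text("Plain, direct voice.", encoding="utf-8")
    (vault / "AGENTS.md").write_text("Ask before deleting files.", encoding="utf-8")
    roles = load_roles(vault)

print(missing_roles(roles))  # HEARTBEAT.md was never created in this demo
```

Checking for missing role files up front is a small design choice that avoids starting a session with, for example, no research mission defined.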

-

GLM 5.1 is a 754B-parameter fully open-source Chinese LLM benchmarked near the top frontier tier, with support for agentic tasks running up to eight continuous hours.

-

Gemma 4 is Google’s small open-source model designed to run on-device, including on phones, which puts it at the opposite end of the capability-portability spectrum from GLM 5.1.

-

Suno 5.5 raised AI music output quality enough that internal team testing produced jingles that pass casual listening without obvious machine artifacts.

-

Microsoft released MAI, a suite of transcription, voice, and image models positioned as a direct alternative to OpenAI’s equivalents.

-

New Microsoft 365 cloud connectors let Claude access Word documents stored in the cloud with no manual export or upload required.

How does this compare to the official docs?

The dreaming memory mechanism, the Karpathy wiki workflow, and GLM 5.1’s agentic task claims each deserve scrutiny against what Anthropic, the open-source community, and the respective teams have formally documented — and that’s precisely where Act 2 picks up.

Here’s What the Official Docs Show

The tutorial above covers a genuinely dense week of AI releases, and the official documentation confirms most of the broad strokes. What follows runs the same sequence, fills in where the docs add precision, and flags the handful of claims that don’t appear in current official sources.

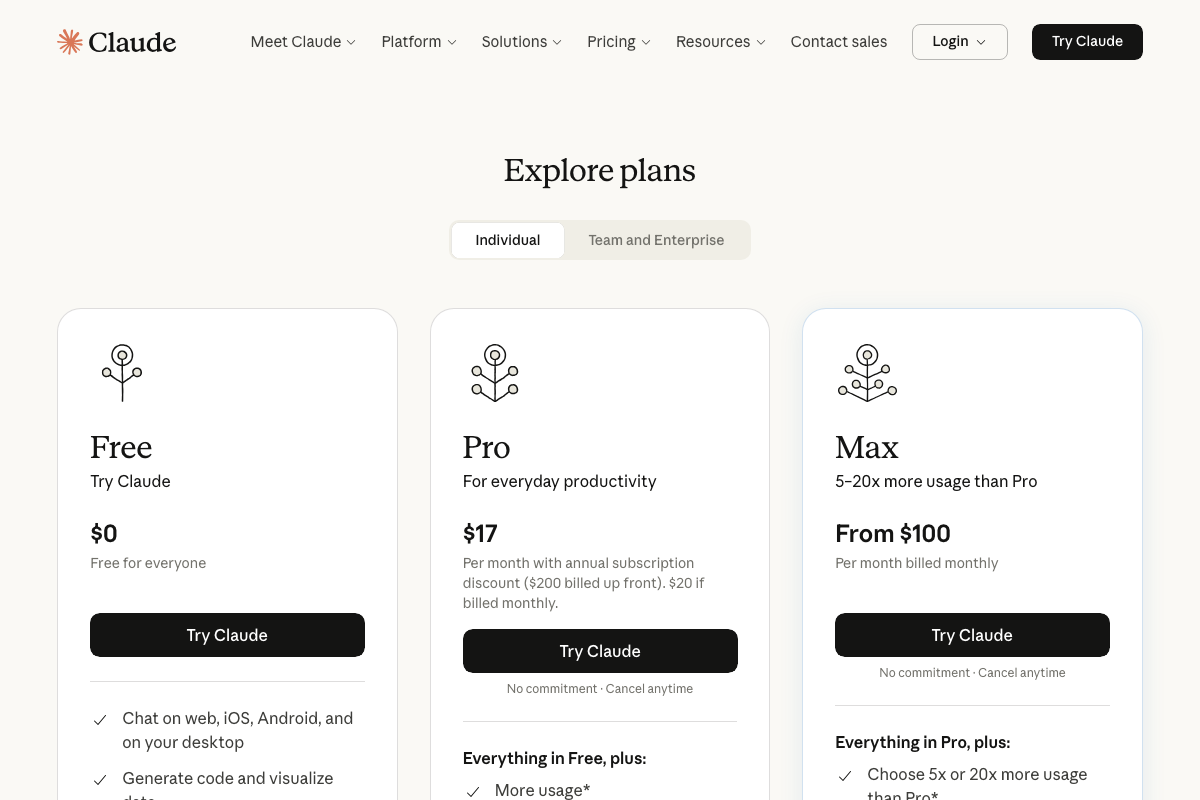

Step 1 — Claude Mythos announcement

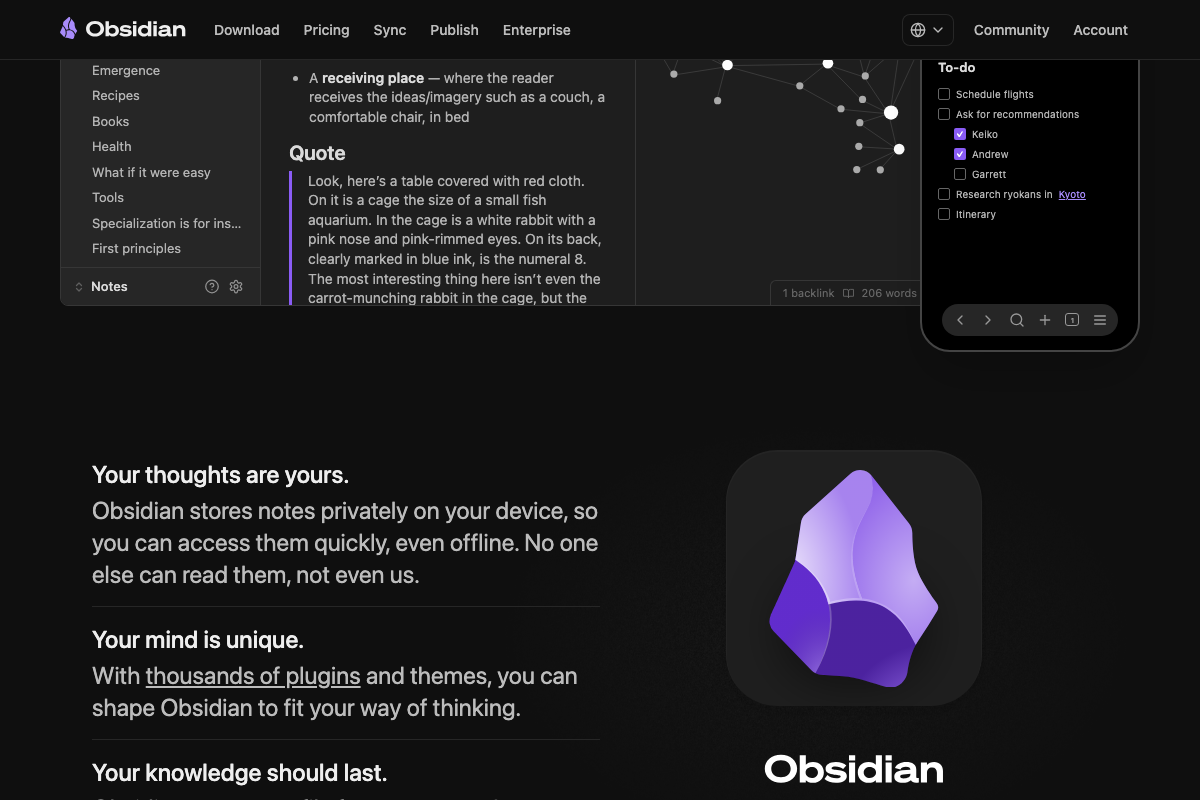

As of April 10, 2026, no model or plan named “Claude Mythos” appears on the official claude.ai pricing page. The three publicly listed individual tiers are Free ($0), Pro ($17/month, billed annually), and Max (from $100/month).

Step 2 — Third-party agent access restrictions

No policy restricting Claude subscription access through third-party agent platforms is visible on the official pricing page. The page presents standard tiers only.

Steps 3 & 4 — Claude Code source leak and dreaming function

No official documentation was found for these steps — proceed using the video’s approach and verify independently.

The Claude Code documentation overview was not accessible at time of capture. The pricing page does confirm “Memory across conversations” as a named Pro plan feature, which lends indirect support to the concept, but the underlying mechanism is not described.

Step 5 — OpenClaw dreaming feature

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Step 6 — Gmail AI Inbox

Two points from the official page. First, Gemini in Gmail is described as available “for everyone” — no Google Ultra plan restriction appears in the documentation. Second, the three documented Gemini features are writing assistance, thread summarization, and inbox search. “Email prioritization” and “daily briefings” as described in the video are not present in the captured pages.

Step 7 — Google Vids

Google Vids is confirmed as a Gemini-powered Google Workspace product. One scope clarification: background music is one element inside Gemini’s storyboard generation bundle — not a standalone AI music generation feature. AI avatar creation and custom voice-over as discrete named features do not appear in the captured documentation.

Step 8 — Pabs

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Step 9 — Runway Characters

The video’s approach here matches the current docs exactly. “Runway Characters: Real-time Video Agents” is confirmed as a named product on Runway’s official homepage.

Step 10 — Sea Dance 2.0

As of April 10, 2026, Sea Dance 2.0 does not appear in Runway’s homepage content or Research section. Runway’s named video generation model is Gen-4.5, described as “the world’s best video model.” Sea Dance 2.0 may be a third-party model hosted on the Runway platform rather than a Runway-developed product.

Step 11 — Karpathy LLM Wiki concept

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Step 12 — Obsidian vault as agent context

Obsidian is confirmed as local-first with a broad plugin ecosystem. Because notes are stored locally (“No one else can read them, not even us”), connecting to an external platform like OpenClaw requires an explicit plugin or API bridge — that specific integration is not documented in the captured pages.

Steps 13–16 — GLM 5.1, Gemma 4, Suno 5.5, Microsoft MAI

No official documentation was found for these steps — proceed using the video’s approach and verify independently.

Step 17 — Microsoft 365 cloud connectors

The video’s approach here matches the current docs exactly. Claude’s Free plan officially lists “Integrate any context or tool through connectors with remote MCP” as a named feature — this is the documented mechanism for the cloud document access described in the tutorial.

Useful Links

- Google Vids: AI-Powered Video Creator and Editor | Google Workspace — Official product page documenting Gemini-assisted storyboard generation, background music bundling, and the multi-track Google Vids editor interface.

- Runway | Building AI to Simulate the World — Runway’s homepage and Research section confirming Runway Characters: Real-time Video Agents and Gen-4.5 as the current named video generation model.

- Obsidian – Sharpen your thinking — Obsidian’s product site documenting its local-first private storage architecture, plugin ecosystem, and Links and Graph features relevant to the LLM Wiki workflow.

- Claude — Claude.ai pricing listing Free, Pro, and Max individual tiers with confirmed features including remote MCP connectors and Memory across conversations.

- Gmail: Secure, AI-Powered Email for Everyone | Google Workspace — Gmail’s official AI features page documenting the three confirmed Gemini in Gmail capabilities: writing assistance, thread summarization, and inbox search.
