AI agents are no longer a future concept — they are actively browsing, comparing, and shortlisting products on behalf of real users right now, and most brand websites are architecturally invisible to them. This tutorial breaks down exactly what the agentic web is, why it’s replacing traditional search-based discovery, and the concrete steps you need to take to ensure your brand makes the cut when an AI agent goes shopping on your customer’s behalf.

What This Is

The agentic web is internet infrastructure built for AI agents — software systems that use artificial intelligence to pursue goals and complete tasks autonomously on behalf of users. These aren’t simple chatbots or keyword matchers. According to Google Cloud, “AI agents are software systems that use AI to pursue goals and complete tasks on behalf of users. They show reasoning, planning, and memory and have a level of autonomy to make decisions, learn, and adapt.”

In practical terms: your prospective customer opens a conversation with an AI assistant and says, “Find me the best CRM for a 20-person sales team under $50 per user.” The agent doesn’t show the user a list of links. It browses, compares, filters, and returns a shortlist — or in increasingly common cases, initiates a trial signup or purchase directly. By the time the human ever sees the result, your brand has either made the shortlist or been eliminated entirely.

This shift was documented in depth by Semrush and represents a structural change in how the commercial web functions. Several enabling protocols have launched in rapid succession since late 2024:

- Model Context Protocol (MCP): launched by Anthropic in November 2024 and now the de facto standard for AI-to-system communication. It plays a role similar to the one HTTP played in standardizing web communication, letting AI agents interact with retail systems, inventory, pricing engines, and customer service APIs in a consistent, machine-readable way.

- Agentic Commerce Protocol (ACP), co-developed by OpenAI and Stripe, and Agent Payments Protocol (AP2), introduced by Google: these standardize how agents discover products and authorize autonomous transactions.

- WebMCP: allows websites to actively declare their capabilities to agents visiting their pages.

- Google’s Universal Commerce Protocol (UCP): further standardizes agent-merchant interactions.

The Agentic AI Foundation (AAIF) was formed specifically to build shared agent infrastructure across these competing standards. As of March 2026, OpenAI has shifted from native checkout to merchant site redirects, meaning agents are now sending users back to merchant sites after evaluation — making your site’s machine-readability more critical than ever.

The result of all this infrastructure is what researchers call the Delegate Economy: consumers are no longer primary researchers. They are approvers. Awareness, consideration, and purchase intent collapse into a single moment — the validation layer — where a human simply reviews and confirms what their AI agent has already decided.

Why It Matters

Traditional SEO was built on the assumption that a human would type a query, scan a results page, click through to your site, read your content, and decide to convert. That journey is now frequently bypassed entirely for transactional searches.

Crystal Carter, Head of SEO Insights at Wix, describes the emerging consumer experience as a “validation layer” — a moment distinct from anything brands have navigated before. As she put it, according to Semrush’s agentic web research: “Brands haven’t experienced this level of burden of proof before.” The brand must prove its case not to a curious human browser, but to a skeptical, efficiency-optimized AI system running structured matching logic.

For practitioners, this changes three core marketing workflows:

Discovery is now agent-mediated. Paid search ads, meta descriptions, and even well-ranked organic pages are frequently bypassed when an agent is operating in “task completion” mode. The agent isn’t reading your site the way a human does — it’s extracting structured signals and comparing them against the user’s declared requirements.

Consideration happens in milliseconds. Where a buyer journey once spanned days of research and comparison, an agent compresses that into a single API interaction. Your product’s attributes, pricing, certifications, and audience fit must be immediately machine-readable or the agent moves on.

Trust is validated externally, not from your own copy. An AI agent doesn’t trust your headline claiming you’re “the #1 rated solution.” It cross-references claims against third-party sources — Reddit threads, industry publications, verified badge networks, G2 reviews. Semrush’s AI Visibility Index tracks which brands consistently appear in AI-generated recommendations, and the brands winning are those with strong external validation, not strong internal marketing copy.

This affects every practitioner in the marketing stack: content strategists need to restructure how they write; developers need to build MCP endpoints; brand managers need to invest in third-party certifications; and analytics teams need new measurement tools because GA4 won’t tell you how often an AI agent evaluated and rejected your brand.

The Data

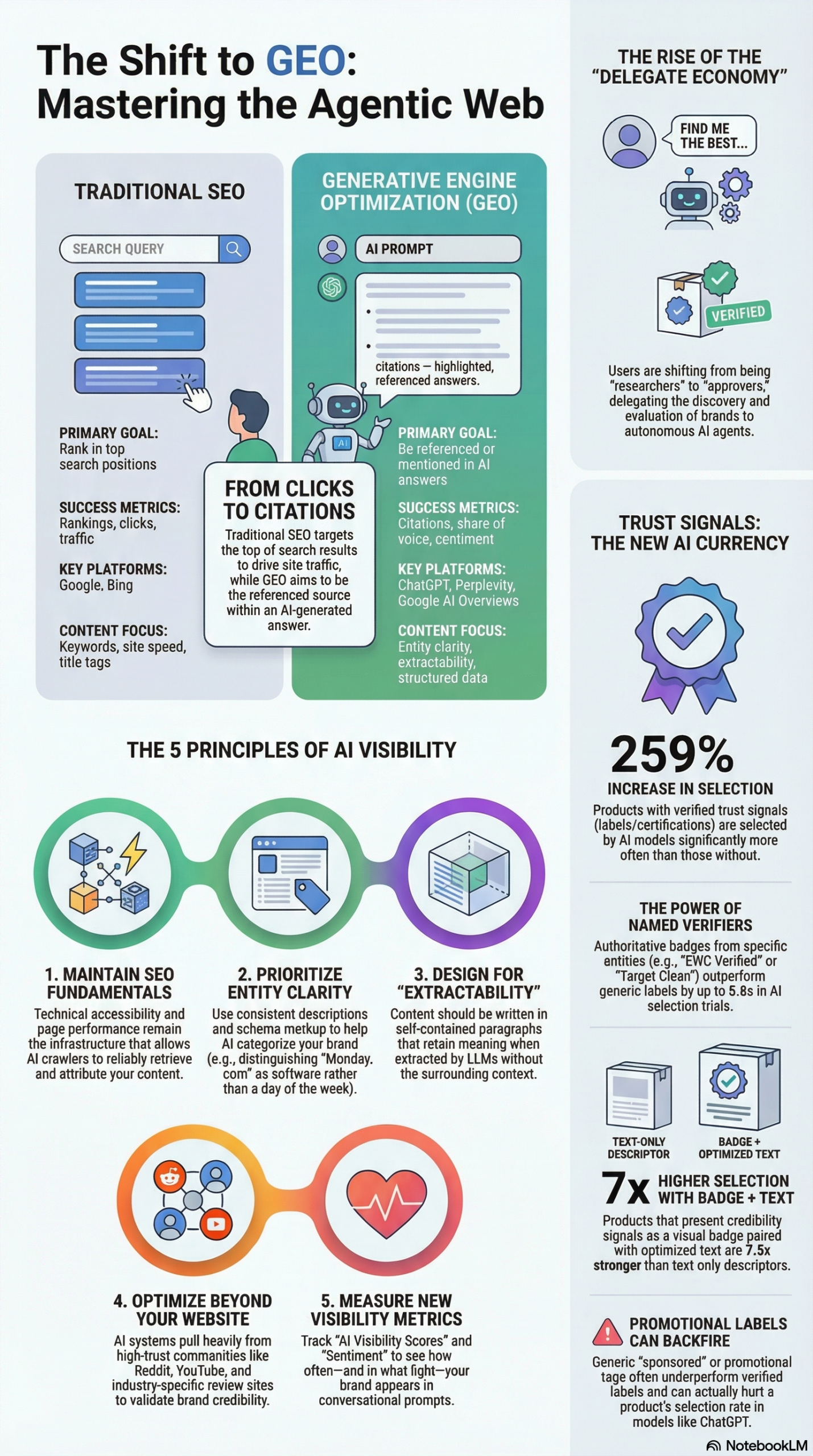

The research supporting the agentic web shift is concrete and quantified. Here’s how traditional SEO compares to the emerging Generative Engine Optimization (GEO) framework, along with documented trust signal impact data:

SEO vs. GEO: Strategic Comparison

| Dimension | Traditional SEO | Generative Engine Optimization (GEO) |
|---|---|---|
| Primary Goal | Rank in top search positions | Be cited and mentioned in AI-generated answers |
| Success Metrics | Rankings, click-through rate, traffic | AI citations, sentiment, share of voice in AI responses |
| User Path | Human clicks link, visits site | AI agent extracts data, presents summary or recommendation |
| Key Platforms | Google, Bing | ChatGPT, Google AI Overviews, Perplexity, Claude, Gemini |
| Optimization Focus | Title tags, meta descriptions, keywords | Self-contained paragraphs, structured data (JSON-LD), schema |
| Credibility Signals | Backlinks, domain authority | Third-party verified trust badges, mentions on Reddit/forums |
| Volatility | Rankings shift gradually | 40%–60% of cited sources change month-to-month |

Source: Semrush AI Visibility Index, NotebookLM Research Report

Trust Signal Impact on AI Selection Rates

| Trust Signal Type | AI Selection Impact |
|---|---|
| Verified third-party badge + optimized text | Up to 7.3x stronger than text-only descriptors |
| Specific named verifier (e.g., “EWG Verified”) | Up to 5.6x better than generic certification labels |
| Any verified trust signal vs. no signal | 2.3x–2.6x as likely to be selected (up to 259% of the no-signal selection rate) |
| “Sponsored” or promotional tags | Negative: hurts selection vs. having no label |
| Generic “Best Seller” / scarcity tags | Often worse than no label at all |
| Products with no trust signals | Selected 86–96% less often than random chance |

Source: Novi Research via NotebookLM Research Report
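The figures above mix multipliers with percentages, so a small arithmetic helper makes them easier to compare. This Python sketch is illustrative only and is not part of Novi’s methodology. It converts a selection-rate multiplier into two forms: a percentage of the baseline rate, and a percentage change. For example, 2.59x equals 259% of the baseline rate, which is a 159% improvement. “96% less often” corresponds to a 0.04x multiplier.

```python
def as_percent_of_baseline(multiplier: float) -> float:
    """Selection rate as a percentage of the baseline rate (1.0x == 100%)."""
    return multiplier * 100.0


def percent_change(multiplier: float) -> float:
    """Change versus baseline in percent: positive is an improvement, negative a decline."""
    return (multiplier - 1.0) * 100.0


def multiplier_from_reduction(percent_less: float) -> float:
    """Turn 'X% less often' into a multiplier, e.g. 96% less often -> 0.04x."""
    return 1.0 - percent_less / 100.0
```

Read this way, a 2.3x–2.6x multiplier means roughly 130%–160% more selections than having no signal at all.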

The citation volatility stat is particularly important for practitioners: the Semrush AI Visibility Index found that 40%–60% of sources cited by AI platforms change month-to-month. This means you can’t build a single optimized page and expect stable AI visibility. Consistent presence across multiple authoritative surfaces is the only durable strategy.

Step-by-Step Tutorial: Optimize Your Brand for AI Agent Discovery

This is a practical implementation guide for getting your brand to appear in AI agent shortlists. It covers five phases: infrastructure, content architecture, trust signal acquisition, off-site presence, and measurement.

Prerequisites

Before starting, you need:

- Access to your site’s CMS or codebase (for schema and structured data)

- Google Search Console and at least one AI visibility tracking tool (Semrush AI Toolkit, Profound, or similar)

- A list of your top 10 commercial pages (product pages, service pages, pricing pages)

- Access to your brand’s third-party review profiles (G2, Trustpilot, Google Reviews)

Estimated time investment: 4–6 hours for the initial implementation, followed by a recurring monthly audit.

Phase 1: Technical Foundation — Make Your Site Agent-Readable

Step 1: Audit for JavaScript rendering dependency.

AI crawlers, especially those powering agents, still struggle with JavaScript-heavy, client-side-rendered pages. Audit your top commercial pages by comparing two views: the raw HTML your server returns (for example, via view-source) and what a browser shows with JavaScript disabled. Google’s Rich Results Test is useful for validating schema, but it renders JavaScript, so it will not reveal this problem on its own. Any critical content (pricing, features, audience fit statements) that only appears after JS execution is invisible to many agent crawlers. Move that content to server-side rendering or static HTML.
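One way to run this audit at scale is to fetch each page without executing JavaScript, then check that the critical phrases appear in the raw HTML. The Python sketch below uses only the standard library. The function names, the user-agent string, and the example phrases are illustrative assumptions. A plain fetch only approximates what any given AI crawler sees.

```python
"""Check whether critical phrases appear in a page's raw (pre-JavaScript) HTML."""
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class _TextExtractor(HTMLParser):
    """Collects text nodes, skipping <script>, <style> and <template> contents."""

    _SKIP = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0  # > 0 while inside a skipped element
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self._SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self._SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def visible_text(html: str) -> str:
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    # Collapse whitespace so line wraps in the markup don't break phrase matching.
    return " ".join(" ".join(parser.chunks).split())


def missing_phrases(html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do NOT appear (case-insensitively) in the raw HTML text."""
    text = visible_text(html).lower()
    return [p for p in phrases if " ".join(p.lower().split()) not in text]


def fetch_raw_html(url: str, timeout: float = 10.0) -> str:
    # Plain HTTP fetch: no JavaScript runs, roughly what a non-rendering crawler receives.
    req = Request(url, headers={"User-Agent": "raw-html-audit/0.1"})
    with urlopen(req, timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")
```

For example, `missing_phrases(fetch_raw_html("https://yourbrand.com/pricing"), ["per user", "SOC 2"])` returns any phrases that are absent from the server-rendered HTML. Those phrases are candidates for moving out of client-side rendering.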

Step 2: Implement JSON-LD schema markup on every commercial page.

Schema markup is the single fastest win for agent-readability. AI systems use structured data to extract entity information without parsing prose. For product pages, implement Product schema. For service pages, use Service schema. For your organization, implement Organization schema at the domain root.

Example Organization schema for a B2B SaaS company:

```json
{
  "@context": "https://schema.org",
  "@type": "Organization",
  "name": "YourBrand",
  "url": "https://yourbrand.com",
  "description": "CRM software for automotive dealerships with 5–50 sales reps",
  "foundingDate": "2019",
  "areaServed": "US",
  "sameAs": [
    "https://www.linkedin.com/company/yourbrand",
    "https://twitter.com/yourbrand",
    "https://g2.com/products/yourbrand"
  ]
}
```

Note the specificity of the description field — this is where entity clarity lives. Generic descriptions like “leading CRM solution” are useless to agent matching logic. Specific declarations like “CRM software for automotive dealerships with 5–50 sales reps” enable an agent to confidently match your product to a user’s declared requirements. Semrush’s research on Salesforce’s 82/100 AI Visibility Score shows that dedicated industry-specific pages are a core driver of that performance.
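On the page itself, JSON-LD is typically embedded in a `<script type="application/ld+json">` element. As a minimal sketch, a server-side template could generate that element from the Organization data shown above. This keeps the markup in the initial HTML instead of injecting it with client-side JavaScript. The example uses Python with only the standard library, and the helper name is hypothetical.

```python
import json

# Same Organization data as the JSON-LD example above, as a Python dict.
ORGANIZATION = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "YourBrand",
    "url": "https://yourbrand.com",
    "description": "CRM software for automotive dealerships with 5–50 sales reps",
    "foundingDate": "2019",
    "areaServed": "US",
    "sameAs": [
        "https://www.linkedin.com/company/yourbrand",
        "https://twitter.com/yourbrand",
        "https://g2.com/products/yourbrand",
    ],
}


def jsonld_script(data: dict) -> str:
    """Serialize schema.org data as a JSON-LD <script> element for server-rendered HTML."""
    payload = json.dumps(data, ensure_ascii=False, indent=2)
    # Escape "</" so a string value such as "</script>" cannot end the element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    print(jsonld_script(ORGANIZATION))
```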

Step 3: Declare your audience explicitly on every page.

The research from Semrush is clear: agents perform matching, not searching. “Declaration over inference” is the operating principle. Every commercial page should explicitly state:

- Who this product/service is for (role, industry, company size)

- What specific problem it solves

- What it does NOT do (negative matching helps agents avoid false positives)
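The toy matcher below illustrates why explicit declarations, including negative ones, matter. It is a hypothetical model, not a description of how any particular agent works. A requirement that hits a declared exclusion is rejected confidently, and a fully declared fit counts as a match. Anything the page is silent about stays “unknown”; that is where an agent would have to fall back on inference. The field names and the example CRM are assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum


class Fit(Enum):
    MATCH = "match"        # every requirement is explicitly declared on the page
    NO_MATCH = "no_match"  # a requirement hits a declared exclusion or limit
    UNKNOWN = "unknown"    # the page is silent, so an agent would have to infer


@dataclass
class AudienceDeclaration:
    industries: set[str]
    team_size: range                                    # e.g. range(5, 51) for 5-50 reps
    solves: set[str]
    does_not_do: set[str] = field(default_factory=set)  # explicit negative declarations


@dataclass
class Requirements:
    industry: str
    team_size: int
    needs: set[str]


def evaluate(decl: AudienceDeclaration, req: Requirements) -> Fit:
    # Explicit exclusions and limits let the matcher reject confidently instead of guessing.
    if req.needs & decl.does_not_do or req.team_size not in decl.team_size:
        return Fit.NO_MATCH
    if req.industry in decl.industries and req.needs <= decl.solves:
        return Fit.MATCH
    return Fit.UNKNOWN


DEALER_CRM = AudienceDeclaration(
    industries={"automotive dealerships"},
    team_size=range(5, 51),
    solves={"lead tracking", "pipeline forecasting"},
    does_not_do={"marketing automation"},
)
```

With this declaration, the results are:

- A 20-person dealership that needs lead tracking evaluates to `Fit.MATCH`.
- A request for marketing automation evaluates to `Fit.NO_MATCH`.
- A real-estate team evaluates to `Fit.UNKNOWN`.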

Phase 2: Content Architecture — Write for Extraction, Not for Reading

Step 4: Audit and rewrite your top pages for “self-contained paragraphs.”

Many AI retrieval systems split your content into chunks, often roughly paragraph-sized, and convert each chunk into a vector embedding. Each chunk may therefore be retrieved and evaluated without its neighbors. A paragraph that says “As mentioned in the previous section, this integration is key” gives an AI agent nothing useful in isolation. Every paragraph on a high-value page should be independently meaningful.

Run this audit process:

1. Pull your top 10 commercial pages

2. Read each paragraph in isolation, stripped of surrounding context

3. Mark any paragraph that requires context from elsewhere to make sense

4. Rewrite those paragraphs to be standalone fact-statements
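Steps 2 and 3 of this audit can be partly automated with a heuristic. The Python sketch below splits page copy into paragraphs. It then flags paragraphs that open with a bare pronoun or contain back-reference phrases. The cue list is an assumption you should tune for your own copy, and a human still needs to review the flags and do the rewriting in step 4.

```python
import re

# Illustrative cue list (an assumption): phrases that usually point at other sections.
CONTEXT_CUES = re.compile(
    r"\bas (?:mentioned|noted|discussed) (?:above|earlier|before|in the previous section)\b"
    r"|\bprevious section\b"
    r"|\b(?:see|shown) (?:above|below)\b"
    r"|\bthe (?:former|latter)\b",
    re.IGNORECASE,
)
# A paragraph that opens with a bare pronoun usually borrows its subject from elsewhere.
OPENING_PRONOUN = re.compile(r"^(?:this|that|these|those|it|they)\b", re.IGNORECASE)


def split_paragraphs(text: str) -> list[str]:
    """Split on blank lines and drop empty fragments."""
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]


def flag_dependent_paragraphs(text: str) -> list[tuple[int, list[str]]]:
    """Return (1-based paragraph number, reasons) for paragraphs that may not stand alone."""
    flagged = []
    for number, para in enumerate(split_paragraphs(text), start=1):
        reasons = []
        if OPENING_PRONOUN.match(para):
            reasons.append("opens with a pronoun")
        cue = CONTEXT_CUES.search(para)
        if cue:
            reasons.append(f"context cue: {cue.group(0)!r}")
        if reasons:
            flagged.append((number, reasons))
    return flagged
```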

Step 5: Front-load every section with the critical claim.

AI models prioritize content that appears early in a section. Structure headings and opening sentences so the key assertion comes first, then support it. Follow the journalistic inverted pyramid: conclusion first, evidence second.

Instead of:

“When we think about how enterprises manage customer relationships across distributed sales teams, there are several factors to consider, including cost, ease of use, and integration capability. Our platform addresses all of these…”

Write:

“YourBrand reduces CRM implementation time by 60% for distributed sales teams by providing pre-built integrations with Salesforce, HubSpot, and Dynamics 365.”

Step 6: Add explicit comparison content.

Agents frequently run comparison tasks (“Find the best X vs. Y”). Publishing a comparison page, even one that openly acknowledges competitors, significantly improves your chance of appearing in these agent queries. Structure comparison pages with simple, well-formed tables (one row per attribute, one column per product) that an AI can extract reliably.
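The sketch below shows one way to generate such a table from structured product data. Each row is an attribute and each column is a product. It is written in Python using only the standard library. The product names, the attributes, and the “Not listed” placeholder are hypothetical. Scoped `<th>` headers and a `<caption>` keep the table’s meaning explicit to any parser.

```python
from html import escape


def comparison_table(caption: str, attributes: list[str], products: dict[str, dict[str, str]]) -> str:
    """Render one row per attribute and one column per product as a plain HTML table."""
    names = list(products)
    header = "".join(f'<th scope="col">{escape(name)}</th>' for name in names)
    lines = [
        "<table>",
        f"  <caption>{escape(caption)}</caption>",
        f'  <thead><tr><th scope="col">Attribute</th>{header}</tr></thead>',
        "  <tbody>",
    ]
    for attr in attributes:
        # State missing values explicitly rather than leaving an empty cell.
        cells = "".join(
            f"<td>{escape(products[name].get(attr, 'Not listed'))}</td>" for name in names
        )
        lines.append(f'    <tr><th scope="row">{escape(attr)}</th>{cells}</tr>')
    lines += ["  </tbody>", "</table>"]
    return "\n".join(lines)


TABLE = comparison_table(
    "YourBrand vs. Competitor A for dealership sales teams",
    ["Price per user/month", "Team size supported", "DMS integration"],
    {
        "YourBrand": {"Price per user/month": "$45", "Team size supported": "5-50 reps", "DMS integration": "Yes"},
        "Competitor A": {"Price per user/month": "$60", "Team size supported": "10-500 reps"},
    },
)
```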

Phase 3: Trust Signal Acquisition — Build the Verification Layer

Step 7: Identify and pursue the highest-impact third-party verifiers in your category.

Novi Research found that named, specific verifiers outperform generic certification labels by up to 5.6x in AI selection rates. Generic “Certified” or “Award Winner” labels often underperform having no label at all. Research which third-party certifiers AI models in your vertical consistently recognize:

- B2B SaaS: SOC 2 Type II, G2 Leader badges, Capterra Top Performer

- E-commerce/CPG: EWG Verified, MADE SAFE, B Corp certification

- Health/Wellness: NSF Certified, USP Verified

- Security products: ISO 27001, FedRAMP authorization

Once you have the certification, display it as a badge combined with descriptive text. The Novi Research data is unambiguous: a badge combined with an optimized text description is up to 7.3x more effective than text descriptors alone. The image itself carries signal, because AI vision systems can read and process badge imagery.
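Here is a minimal Python sketch of the badge + text pattern. It renders the verifier’s badge image and a specific descriptor together in one `<figure>`, naming the verifier in both the alt text and the caption. The markup structure, class name, and image path are assumptions; Novi’s data does not prescribe particular HTML.

```python
from html import escape


def trust_badge(verifier: str, badge_src: str, descriptor: str) -> str:
    """Pair a named verifier's badge image with a specific text descriptor."""
    return "\n".join([
        '<figure class="trust-badge">',
        # Naming the verifier in alt text keeps the signal even if the image isn't processed.
        f'  <img src="{escape(badge_src)}" alt="{escape(verifier)} badge" width="96" height="96">',
        f"  <figcaption>{escape(verifier)}: {escape(descriptor)}</figcaption>",
        "</figure>",
    ])
```

For example, `trust_badge("EWG Verified", "/badges/ewg-verified.png", "No parabens, sulfates, or synthetic fragrance")` produces a figure whose caption reads “EWG Verified: No parabens, sulfates, or synthetic fragrance”.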

Step 8: Eliminate “sponsored” and scarcity labels from AI-indexed surfaces.

This is counterintuitive but documented: “Sponsored” tags and promotional scarcity labels (“limited time offer,” “3 left in stock”) consistently hurt AI selection rates compared to having no label. Remove them from any page you are primarily optimizing for agent discovery. They signal commercial bias, which AI models appear to discount.

Phase 4: Off-Site Presence — Build the External Corroboration Layer

Step 9: Establish and maintain presence on the platforms AI models use as training and retrieval sources.

Semrush’s research finds that AI systems draw on YouTube, Reddit, and industry publications as “grounding” signals for brand legitimacy. A brand that exists only on its own domain is treated as unverified by most LLM systems. Build documented presence on:

- Reddit: Participate authentically in relevant subreddits. Answer questions where your product is genuinely applicable. Avoid promotional language — Reddit contributions function as third-party social proof.

- YouTube: Publish tutorial and product demo content. AI systems increasingly source video content for recommendations.

- Industry review platforms: G2, Capterra, Trustpilot. These are primary data sources for commercial AI queries.

- Industry publications and niche blogs: Earned mentions and guest posts in category-specific publications carry more weight than self-published content.

Step 10: Pursue the “mentioned often” strategy over the “rank first” strategy.

A key principle from the Semrush AI Visibility Index research: “In AI, being mentioned often beats ranking first.” Unlike Google’s winner-takes-all ranking system, AI models summarize and synthesize. A brand mentioned consistently across five moderately authoritative sources outperforms a brand with a single highly-ranked page. Diversify your mention footprint.

Phase 5: Measurement — Instrument Your AI Visibility

Step 11: Set up AI visibility tracking alongside traditional analytics.

Standard analytics tools (GA4, Search Console) have a measurement blind spot: they cannot capture traffic that was evaluated and rejected by an agent, or conversions that happened through an agent redirect. You need dedicated AI visibility measurement:

- Semrush AI Toolkit: Tracks your brand’s appearance in AI Overviews and Perplexity citations

- Profound: Monitors brand mentions across generative AI platforms

- Manual prompt testing: Regularly query ChatGPT, Perplexity, and Claude with the exact prompts your target customers would use. Track whether your brand appears, how it’s described, and what sources are cited.
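Manual prompt testing is easier to compare month to month if you record each answer in the same shape. This Python sketch assumes you paste or export each AI answer as text; it does not call any AI platform’s API. It records whether the brand (or an alias) appears, where it first appears, and which domains are cited. The function and field names are hypothetical.

```python
import re
from dataclasses import dataclass, field
from typing import Optional
from urllib.parse import urlparse

URL_RE = re.compile(r"https?://[^\s)\]>\"']+")


@dataclass
class PromptResult:
    platform: str
    prompt: str
    brand_mentioned: bool
    first_mention_at: Optional[int]  # character offset of the first mention, if any
    cited_domains: list[str] = field(default_factory=list)


def analyze_response(platform: str, prompt: str, answer: str, brand: str,
                     aliases: tuple[str, ...] = ()) -> PromptResult:
    names = "|".join(re.escape(n) for n in (brand, *aliases))
    mention = re.search(rf"\b(?:{names})\b", answer, re.IGNORECASE)
    domains: list[str] = []
    for url in URL_RE.findall(answer):
        host = (urlparse(url.rstrip(".,;:")).hostname or "").removeprefix("www.")
        if host and host not in domains:  # keep first-seen order, no duplicates
            domains.append(host)
    return PromptResult(platform, prompt, mention is not None,
                        mention.start() if mention else None, domains)
```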

Step 12: Establish a monthly citation audit.

Given that 40%–60% of AI citations change month-to-month, set a recurring monthly task:

1. Run 10–15 target queries across ChatGPT, Perplexity, and Google AI Overviews

2. Document whether your brand appears, what position, and what is said about it

3. Identify which external sources AI models are citing about your brand

4. Prioritize content or relationship-building on those cited sources
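You can summarize the audit results with a few simple metrics. This Python sketch assumes you recorded, for each query, whether the brand appeared and which source domains were cited. “Citation churn” here is one possible definition: the share of this month’s cited sources that were not cited last month. It may not match how the Semrush AI Visibility Index measures volatility.

```python
from collections import Counter


def appearance_rate(appeared_by_query: dict[str, bool]) -> float:
    """Share of tracked queries in which the brand appeared at all."""
    if not appeared_by_query:
        return 0.0
    return sum(appeared_by_query.values()) / len(appeared_by_query)


def citation_churn(last_month: set[str], this_month: set[str]) -> float:
    """Share of this month's cited sources that were not cited last month."""
    if not this_month:
        return 0.0
    return len(this_month - last_month) / len(this_month)


def sources_to_prioritize(citations_by_query: dict[str, set[str]], top_n: int = 5) -> list[tuple[str, int]]:
    """Most frequently cited external sources across all tracked queries (step 4)."""
    counts = Counter(src for sources in citations_by_query.values() for src in sources)
    return counts.most_common(top_n)
```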

Expected Outcomes After 90 Days

Brands that implement all five phases systematically can expect:

- Measurable improvement in AI citation frequency on tracked queries

- Cleaner entity recognition in AI-generated brand descriptions

- Increased referral traffic from AI-assisted discovery (trackable via UTM parameters on agent redirect links)

- Better alignment between external reviews and AI-generated brand sentiment
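To track AI referral traffic, one approach is to tag sessions by AI platform. The tag comes from the landing page’s `utm_source` parameter, falling back to the referrer host. ChatGPT, for example, has been observed appending `utm_source=chatgpt.com` to outbound links. However, not every assistant adds UTM parameters or sends a referrer. The host list in this Python sketch is an illustrative, incomplete assumption; check it against your own referral reports.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

# Illustrative, incomplete mapping of AI assistant hosts to platform labels.
AI_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}


def _platform_for(host: str) -> Optional[str]:
    return AI_HOSTS.get(host.strip().lower().removeprefix("www."))


def classify_ai_session(landing_url: str, referrer: str = "") -> Optional[str]:
    """Return an AI platform label for a session, or None if it doesn't look AI-referred."""
    # 1) Prefer an explicit utm_source on the landing URL, e.g. ?utm_source=chatgpt.com
    utm = parse_qs(urlparse(landing_url).query).get("utm_source", [""])[0]
    platform = _platform_for(utm)
    if platform:
        return platform
    # 2) Fall back to the referrer's host name, when the browser sent one.
    return _platform_for(urlparse(referrer).hostname or "")
```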

Real-World Use Cases

Use Case 1: B2B SaaS Company Entering Agent-Driven Procurement

Scenario: A project management software company notices their pipeline includes more deals where the prospect “had an AI recommend a shortlist.” They want to ensure they appear on those shortlists.

Implementation: They implement SoftwareApplication schema across all product pages, add dedicated pages for each industry vertical they serve (construction, healthcare, professional services), build out G2 reviews to 150+ with responses, and publish a monthly comparison post pitting themselves against top competitors with structured feature tables.

Expected Outcome: When an AI agent processes a query like “best project management software for construction companies under 100 employees,” the brand’s explicit audience declaration and structured comparison content give it a strong matching signal. Their G2 rating and volume provide external corroboration.
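The SoftwareApplication schema and per-vertical pages in this scenario could be generated from one function. In this Python sketch, each vertical gets its own JSON-LD with a schema.org `BusinessAudience`. That audience states the industry (`audienceType`) and the company size (`numberOfEmployees`). The product name, the verticals, and the employee limits are hypothetical.

```python
import json


def vertical_app_schema(vertical: str, max_employees: int) -> dict:
    """SoftwareApplication JSON-LD for one industry-vertical landing page."""
    return {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": "YourBrand Projects",
        "applicationCategory": "BusinessApplication",
        "operatingSystem": "Web",
        "description": f"Project management software for {vertical.lower()} companies "
                       f"with up to {max_employees} employees",
        "audience": {
            "@type": "BusinessAudience",
            "audienceType": f"{vertical} companies",
            "numberOfEmployees": {"@type": "QuantitativeValue", "maxValue": max_employees},
        },
    }


# One JSON-LD document per vertical page (hypothetical size limits).
VERTICALS = {"Construction": 99, "Healthcare": 250, "Professional services": 50}
PAGES = {v: json.dumps(vertical_app_schema(v, n), indent=2) for v, n in VERTICALS.items()}
```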

Use Case 2: E-Commerce Brand Optimizing for AI Shopping Agents

Scenario: A CPG brand selling premium skincare products wants to appear when AI shopping agents handle health-conscious beauty searches. They currently have no third-party certifications.

Implementation: Following the Novi Research trust signal framework, they pursue EWG Verification for their flagship product line. Once certified, they display the EWG badge alongside a specific text descriptor: “EWG Verified: No harmful chemicals, formulated without parabens, sulfates, or synthetic fragrance.” They remove “Best Seller” and “Limited Time” tags from product pages. They also build a Reddit presence by contributing to r/SkincareAddiction.

Expected Outcome: Per Novi’s documented data, the specific named verifier (EWG) combined with the badge + text format should dramatically outperform their previous unverified state. Verified products can be selected by AI agents at up to 2.6x the rate of unverified alternatives (259% of the unverified rate).

Use Case 3: Marketing Agency Positioning for AI-Driven Client Acquisition

Scenario: A mid-size digital marketing agency wants AI agents to recommend them when business owners query “best marketing agency for e-commerce brands doing $5M–$20M revenue.”

Implementation: They restructure their homepage to explicitly declare their ICP (ideal client profile) in the first paragraph. They add ProfessionalService schema (a schema.org subtype of LocalBusiness). They publish detailed case studies with specific, extractable metrics for e-commerce clients. They build presence on Clutch.co (a primary source for B2B service recommendations in AI queries) and achieve a Top Agency badge in their category.

Expected Outcome: The explicit ICP declaration allows AI agents to confidently match them to specific user queries rather than inferring fit. Clutch verification provides the external trust signal. Case studies with concrete numbers give AI systems extractable evidence to cite when recommending the agency.

Use Case 4: Enterprise Software Company Implementing MCP Endpoints

Scenario: A large CRM vendor wants to go beyond passive optimization and actively participate in the agentic commerce ecosystem by allowing AI agents to directly query their product catalog, pricing tiers, and integration compatibility.

Implementation: Their engineering team builds an MCP server hosted on AWS that exposes three tools: product tier specifications, an integration library (compatible apps), and demo scheduling. The server uses MCP’s Streamable HTTP transport for network-based interaction. They register the server with the Agentic AI Foundation’s directory and add WebMCP declarations to their site headers.

Expected Outcome: AI agents that support MCP can now directly query their system rather than scraping their website. This provides clean, structured, real-time data — dramatically improving response accuracy and making the brand the “path of least friction” that Semrush’s research identifies as a core agent selection criterion.
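Below is a minimal sketch of the kind of server described in this use case. It assumes the official MCP Python SDK (`pip install "mcp"`) and its `FastMCP` helper. The three tools mirror the scenario (tiers, integrations, demo requests), but the tool names, data, and validation are hypothetical. A production server would add authentication and call real backend systems. The tool functions are plain Python, so they can be tested without the SDK installed.

```python
"""Hypothetical MCP server exposing catalog data to AI agents.

The tool functions are plain Python so they can be tested without the SDK;
main() registers them with FastMCP from the official MCP Python SDK.
"""
import sys

# Hypothetical catalog data; a real server would read from your billing/catalog systems.
PRODUCT_TIERS = [
    {"tier": "Starter", "usd_per_user_month": 25, "max_users": 20},
    {"tier": "Growth", "usd_per_user_month": 45, "max_users": 200},
    {"tier": "Enterprise", "usd_per_user_month": None, "max_users": None},  # custom quote
]
INTEGRATIONS = {"slack", "gmail", "outlook", "quickbooks"}


def get_product_tiers() -> list[dict]:
    """List product tiers with per-user monthly pricing (None means contact sales)."""
    return [dict(tier) for tier in PRODUCT_TIERS]


def check_integration(app_name: str) -> dict:
    """Report whether a named third-party app has a native integration."""
    name = app_name.strip().lower()
    return {"app": name, "supported": name in INTEGRATIONS}


def request_demo(email: str, team_size: int) -> dict:
    """Accept a demo request; a real server would call your scheduling system here."""
    if "@" not in email or team_size < 1:
        return {"accepted": False, "reason": "invalid email or team size"}
    return {"accepted": True, "next_step": "A rep will email available times."}


def main() -> None:
    # Imported here so the module loads (and tests run) without the SDK installed.
    from mcp.server.fastmcp import FastMCP

    server = FastMCP("yourbrand-catalog")
    for tool in (get_product_tiers, check_integration, request_demo):
        server.tool()(tool)  # input schema comes from type hints, description from the docstring
    server.run(transport="streamable-http")


if __name__ == "__main__" and "--serve" in sys.argv:
    main()
```

Saved as, say, `catalog_server.py` (a hypothetical filename), `python catalog_server.py --serve` would start the Streamable HTTP transport. Without the flag, the module only defines the tools.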

Use Case 5: Content Publisher Building AI Citation Authority

Scenario: A B2B industry newsletter wants their content to be the source AI models cite when answering questions in their vertical (marketing technology).

Implementation: They restructure all articles to lead with standalone fact paragraphs, add Article schema (or its more specific NewsArticle subtype) to every post, create a dedicated “Stats and Data” page that aggregates key industry metrics with clear citations, and begin publishing original research (even small-scale surveys) that AI models can cite as primary sources. They submit their RSS feed to AI news aggregators.

Expected Outcome: Original research and structured fact-forward content are the highest-value inputs for AI citation. Publishers that produce citable, extractable data consistently appear in AI-generated summaries of industry topics.

Common Pitfalls

Pitfall 1: Optimizing only for Google while ignoring agent-specific signals.

Many SEO teams continue to treat AI Overviews as a Google ranking problem and never address the deeper structural changes required for full agent compatibility. Schema markup that satisfies Google’s Rich Results Test does not automatically optimize for AI agent matching logic. You need both — and GEO-specific content architecture on top.

Pitfall 2: Using generic trust labels instead of named verifiers.

The Novi Research data is clear: a badge reading “Certified Product” can perform worse than no badge at all. AI models appear to discount ambiguous authority signals. Only pursue and display certifications from verifiers that carry specific, recognized authority in your category. Generic seals of approval waste money and time.

Pitfall 3: Building one great page instead of broad mention coverage.

Given that 40%–60% of AI citations change month-to-month per the Semrush AI Visibility Index, a single highly-optimized landing page provides unstable results. The mention-breadth strategy — consistent presence across Reddit, YouTube, review platforms, and niche publications — is the only durable approach.

Pitfall 4: Relying on internal claims without external corroboration.

An agent evaluating “best CRM for small business” does not weight your homepage headline equally with G2 reviews or Reddit recommendations. Self-referential marketing copy is the weakest possible signal. Every claim your site makes needs external corroboration from sources that AI models treat as independent and trustworthy.

Pitfall 5: Ignoring measurement until it’s too late.

Without AI visibility tracking, you have no feedback loop. GA4 captures only the sessions that actually arrive, so it cannot tell you whether an agent evaluated and rejected your brand. Implement AI visibility monitoring before you optimize, so you have a baseline to measure against.

Expert Tips

Tip 1: Use Patagonia’s product data strategy as a model.

Semrush’s research highlights Patagonia’s approach: their review system captures specific user attributes (height, activity type, conditions) that allow AI agents to make precise product-to-user matches. If your product has meaningful variation in fit based on user attributes, build your review and product data collection to capture those attributes explicitly. Structured specificity beats generic praise every time.

Tip 2: Build a “machine-readable capabilities” page.

Beyond standard schema, consider publishing a dedicated page, such as /capabilities.json or /agent-info, that directly declares your product’s attributes, integrations, pricing tiers, and audience fit in a structured format. This follows the same idea as WebMCP: active capability declaration rather than passive content optimization.
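There is no standard format for such a file yet, so treat this as an ad hoc sketch. The Python function below assembles a capabilities document and writes it as JSON, ready to serve at a path such as /capabilities.json. Every field name and value is a hypothetical example. The fields mirror the audience, exclusion, integration, pricing, and certification declarations discussed earlier.

```python
import json
from datetime import date
from pathlib import Path


def build_capabilities(as_of: date) -> dict:
    """Assemble an ad hoc capabilities document; field names are illustrative, not a standard."""
    return {
        "name": "YourBrand",
        "category": "CRM software",
        "audience": {
            "industries": ["automotive dealerships"],
            "team_size": {"min": 5, "max": 50},
        },
        "not_for": ["marketing automation", "enterprise field service"],
        "integrations": ["Gmail", "Outlook", "QuickBooks"],
        "pricing": [
            {"tier": "Starter", "usd_per_user_month": 25},
            {"tier": "Growth", "usd_per_user_month": 45},
        ],
        "certifications": ["SOC 2 Type II"],
        "last_updated": as_of.isoformat(),  # lets a reader judge freshness
    }


def write_capabilities(doc: dict, web_root: Path) -> Path:
    """Write the document to <web_root>/capabilities.json and return the path."""
    path = web_root / "capabilities.json"
    path.write_text(json.dumps(doc, indent=2), encoding="utf-8")
    return path
```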

Tip 3: Track the queries, not just the citations.

Monthly prompt testing should go beyond checking if your brand appears. Track the exact language AI models use to describe your brand — this reveals how you’re being positioned and whether it matches your intended positioning. Gaps between your intended positioning and AI-generated descriptions are content gaps to close.
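One rough way to quantify those gaps is to list the terms you want associated with your brand. Then measure how often each term shows up in the descriptions you collected from AI answers. This Python sketch uses simple whole-word matching, which is an assumption: it won’t catch synonyms. As a result, “car dealerships” does not count as “automotive dealerships”, which is itself the kind of gap worth noticing.

```python
import re


def positioning_coverage(intended_terms: list[str], ai_descriptions: list[str]) -> dict[str, float]:
    """Share of collected AI descriptions that mention each intended positioning term."""
    coverage = {}
    for term in intended_terms:
        pattern = re.compile(rf"\b{re.escape(term)}\b", re.IGNORECASE)
        hits = sum(1 for text in ai_descriptions if pattern.search(text))
        coverage[term] = hits / len(ai_descriptions) if ai_descriptions else 0.0
    return coverage


def gaps(coverage: dict[str, float], threshold: float = 0.5) -> list[str]:
    """Terms that appear in fewer than `threshold` of the descriptions."""
    return [term for term, share in coverage.items() if share < threshold]
```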

Tip 4: Treat negative space as a visibility signal.

Explicitly stating what your product does NOT do (which customer segments you don’t serve, which use cases you’re not optimized for) helps AI agents make confident matches. It also keeps you off lists where you’d generate negative user experiences. A recommendation the user rejects (they click through and find you’re a poor fit) can erode agent trust over time.

Tip 5: Invest in original data, not just content.

Original research — even a simple annual survey of 200 industry practitioners — gives AI models a citable primary source. Secondary content that aggregates others’ statistics is structurally weaker for AI citation. One original data point that gets picked up across 10 industry publications is worth more for AI visibility than 20 well-written opinion articles.

FAQ

Q: How is the agentic web different from AI Overviews in Google Search?

A: Google AI Overviews is one surface where AI summarizes search results — it’s reactive, triggered by a user query. The agentic web describes infrastructure for AI agents that operate autonomously, initiating their own queries, browsing multiple sites, and completing multi-step tasks (including transactions) without waiting for user input at each step. Optimizing for Google AI Overviews is a subset of agentic web optimization, not the whole picture. Per Semrush, agents are now operating across ChatGPT, Perplexity, Claude, Gemini, and purpose-built agent platforms — not just Google.

Q: What is Model Context Protocol (MCP) and do I need to implement it?

A: MCP is a standardized protocol — launched in November 2024 — that allows AI agents to communicate with business systems (inventory, pricing, customer service) in a consistent machine-readable format. Think of it as HTTP for AI-to-business communication. Whether you need to implement it depends on your scale: large enterprises and e-commerce operations with complex product catalogs benefit most. For smaller businesses, focusing on schema markup, content architecture, and trust signals is the higher-ROI starting point. MCP implementation requires developer resources and cloud infrastructure.

Q: Will my paid search investment still matter in the agentic web?

A: The Semrush agentic web research indicates that OpenAI shifted away from native agent checkout toward merchant site redirects in March 2026 — which means paid traffic channels still drive conversion-stage value. However, agents operating in research and shortlisting mode largely bypass paid placements. The safe assumption: paid search protects branded terms and drives conversion traffic, while GEO and agentic optimization drives discovery-stage presence. Both remain necessary; neither alone is sufficient.

Q: How do I know if AI agents are already evaluating my brand?

A: You won’t see it directly in GA4. The Semrush AI Visibility Index and tools like Profound track citation frequency across generative platforms. Manual testing — querying ChatGPT, Perplexity, and Google AI Overviews with your target customer’s search intent — gives you a baseline immediately. Check whether your brand appears, how it’s described, and which sources are cited. This manual testing costs nothing and reveals your current AI visibility posture within an hour.

Q: How long does it take for GEO changes to show up in AI citations?

A: AI citation lag is generally faster than traditional SEO ranking changes but less predictable. Schema markup and structured data changes can be picked up within days by AI crawlers. Content changes (rewriting for extractability, adding comparison pages) may take 2–8 weeks to influence AI citation patterns. Trust signal changes (earning and displaying new certifications) may take longer because AI models need to encounter the signal across multiple sources before weighting it consistently. Plan for a 60–90 day horizon for measurable impact, with monthly monitoring to track progress.

Bottom Line

The agentic web represents a structural shift in commercial discovery — not a trend, but a new infrastructure layer that is already operational. Semrush’s research documents a clear picture: AI agents are running evaluation logic that most brand websites are not built to satisfy, and Novi Research quantifies the consequence — products without verified trust signals are selected up to 96% less often than random chance. The five-phase implementation framework in this tutorial — technical foundation, content architecture, trust signal acquisition, off-site presence, and measurement — addresses each layer of the AI selection process systematically. The brands building this infrastructure now are the ones that will dominate AI-generated shortlists as agent autonomy increases over the next 24 months. The window to establish entity clarity and trust signal dominance in your category is open; it will not remain open indefinitely.
