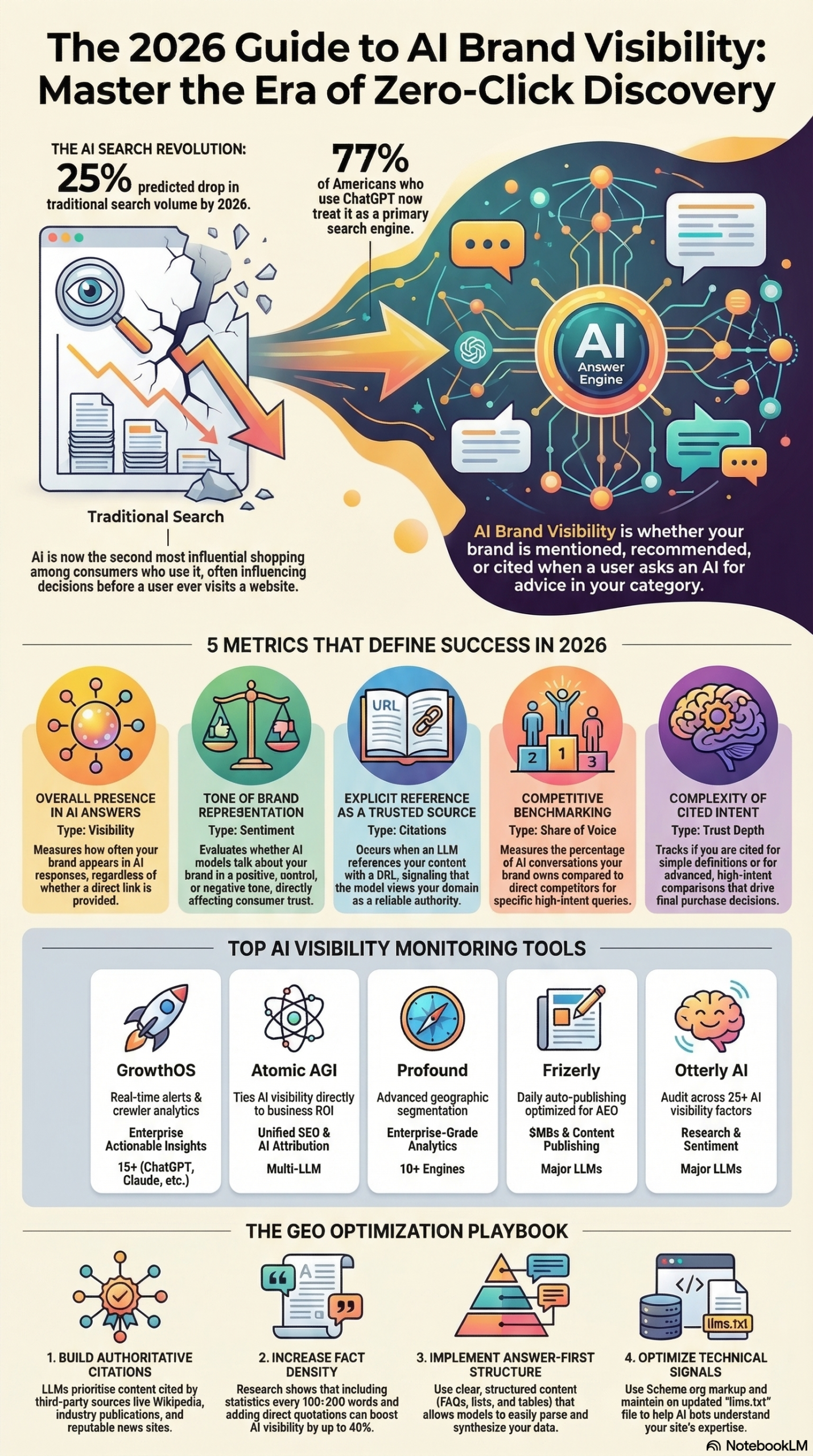

ChatGPT now reaches over 900 million weekly active users, and Gartner has predicted a 25% drop in traditional search volume by 2026 due to AI chatbots — which means the ranking game you’ve been playing for the last decade is no longer the only game in town. LLM visibility is the measure of how, when, and in what context AI assistants describe your brand when users ask relevant questions. This tutorial will show you exactly how to audit your current LLM visibility footprint, select the right monitoring tools, implement Generative Engine Optimization (GEO), and build the reporting infrastructure to track it over time.

What Is LLM Visibility?

LLM visibility refers to how prominently and accurately a brand appears within the synthesized responses generated by Large Language Models such as ChatGPT, Google Gemini, Anthropic Claude, and Perplexity. It is distinct from traditional SEO rankings in a fundamental way: ranking in Google’s index and being cited in an AI-generated answer are now two separate outcomes that require two separate strategies.

The core concept, as documented by Hootsuite’s 2026 LLM visibility analysis, is that AI systems resolve user queries internally, drawing on training data, real-time web crawl results, and third-party citations to synthesize a single authoritative answer. The user rarely needs to click through to any website. This creates what practitioners now call the “zero-click reality”: your brand may be shaping buying decisions without ever generating a session, a pageview, or a referral in your analytics platform.

As documented in the MarketingAgent AI Brand Visibility Research Report, this dynamic has given rise to an entirely new category of measurement: AI Visibility Score (AVS), Citation Share, Entity Recognition Accuracy, Sentiment Polarity, and Trust Depth. Each of these metrics captures a different dimension of how AI models perceive and represent your brand.

What makes this transition particularly sharp for practitioners is what the research report describes as the “Visibility Gap.” A brand can hold the #1 position on Google for a high-intent commercial query while remaining completely invisible in a ChatGPT response to the exact same question. The two environments use different signals, different data, and produce different winners. The report puts it plainly: “Ranking without recognition is no longer enough… If that SaaS company is not mentioned in the AI response, its ranking becomes irrelevant at the exact moment trust is formed.”

LLM visibility is also not a single-platform problem. As the research report notes, different models — ChatGPT, Claude, Gemini, Perplexity — use different training corpora and different real-time retrieval signals. A brand that appears prominently in ChatGPT responses may be entirely absent from Gemini, and vice versa. Comprehensive visibility management requires tracking across at least four major platforms simultaneously.

The underlying mechanics of how LLMs “see” a brand involve two pathways: historical training data (the model has already processed web content from before its knowledge cutoff and formed associations between brands, topics, and authority signals) and real-time web crawling (models like Perplexity and GPT-4o with browsing can fetch current content, meaning freshness and crawlability still matter). Understanding both pathways is essential for designing an effective GEO strategy.

Why LLM Visibility Matters for Practitioners

The business case for investing in LLM visibility is not about vanity metrics — it is about conversion rates and sales cycle length. The MarketingAgent research report documents that “AI traffic tends to convert better than organic,” and that “LLMs are increasingly the point of first contact in buyer journeys.” This means that not appearing in relevant AI responses doesn’t just cost you awareness — it costs you qualified leads at the bottom of the funnel.

The report further quantifies the trust effect: buyers who encounter a brand in AI research during their evaluation process demonstrate 18–25% shorter sales cycles. AI inclusion acts as a “pre-qualification” step, establishing authority before the prospect ever visits your website or speaks to a sales rep.

For marketing agencies, this represents both a new service category and a new reporting responsibility. Clients who previously paid for SEO rankings now need visibility scores across AI platforms — and agencies that cannot provide those dashboards will lose accounts to competitors who can.

For in-house digital marketers, the shift means rebuilding measurement infrastructure. As documented by Gravity Global and cited in the research report, “there are no clicks, no sessions, no visible referrals” in the AI channel. Standard Google Analytics reporting will show nothing for brand influence that happens entirely within a ChatGPT conversation — which makes dedicated LLM monitoring tools non-optional, not nice-to-have.

For enterprise brands, the stakes are highest. Large organizations with complex product lines face the additional challenge of Entity Recognition Accuracy: ensuring that AI models correctly identify and distinguish their brands, products, and executives rather than attributing achievements to competitors or misclassifying their category entirely.

This is a fundamentally new discipline, and it requires practitioners to learn a new set of tools, metrics, and content strategies simultaneously.

The Data: LLM Visibility Metrics vs. Traditional SEO KPIs

The following table, based on the MarketingAgent AI Brand Visibility Research Report, maps traditional SEO KPIs to their 2026 AI-era equivalents.

| Traditional SEO KPI | AI-Era Equivalent | Definition | Why It Replaced the Old Metric |

|---|---|---|---|

| Average Rank Position | AI Visibility Score (AVS) | Frequency of brand mentions in AI outputs relative to competitors | Rank is meaningless if the AI answers without linking to your site |

| Backlink Count | Citation Share | How often a brand is explicitly referenced with a URL vs. rivals | AI models treat citations as authority signals, not raw link volume |

| Organic Traffic Sessions | Trust Depth | Complexity of queries for which a brand is cited | Depth of AI citation distinguishes authority from general mentions |

| Keyword Impression Share | Entity Recognition Accuracy | Whether AI correctly identifies brands, products, and experts | Misclassification by AI can erase competitive differentiation |

| Sentiment (Review Volume) | Sentiment Polarity | The tone of the AI’s representation (positive/neutral/negative) | AI models synthesize tone from dozens of sources into a single narrative |

| Bounce Rate / Engagement | Prompt Response Quality | Nuance and accuracy of AI answers about your brand | Engagement with zero-click AI answers is invisible to analytics |

Additionally, the competitive tool landscape has matured rapidly. Here is how the leading AI monitoring platforms compare:

| Tool | Primary Focus | Key Differentiator | Best For |

|---|---|---|---|

| GrowthOS | Action-oriented analytics | 15+ platforms covered; prioritized content recommendations | Teams that need clear next-step guidance |

| Atomic AGI | Unified SEO + AI attribution | Blends Google Search Console / GA4 data with LLM visibility | Agencies bridging traditional and AI analytics |

| Profound | Enterprise GEO | “Conversation Explorer” for real-time AI search volume; multi-region | Large brands with multi-market presence |

| Frizerly Pro | SMB automation | Combines GEO tracking with daily auto-publishing and AEO | Small businesses with limited editorial bandwidth |

| Otterly.AI | Audit-based optimization | GEO audits across 25+ factors; actionable fix lists | Brands starting their GEO program from zero |

| Sight AI | Content-led visibility | Uses AI agents to generate GEO-optimized articles based on visibility gaps | Content-first teams targeting specific topic gaps |

Source: MarketingAgent AI Brand Visibility Research Report

Step-by-Step Tutorial: Building Your LLM Visibility Tracking System

This is the practical core of this guide. Follow these steps in sequence to build a functional LLM visibility tracking system from scratch. The full build takes roughly 4–6 hours the first time; subsequent monthly audits take 60–90 minutes.

Phase 1: Establish Your Baseline Prompt Library

Before you can track visibility, you need a consistent set of prompts to run across AI platforms. Your prompt library is the foundation of everything that follows.

Step 1: Identify your 10–15 core commercial queries.

Start with the buying-intent questions your prospective customers actually type. Think: “What is the best [category] tool for [use case]?” or “Which [category] companies are worth considering?” Do not start with branded queries — those will almost always mention you. You want to find the unbranded queries where AI models are deciding who to recommend without any hint of your brand name.

Examples for a B2B SaaS company:

– “What are the best CRM platforms for mid-market sales teams?”

– “How do I automate lead scoring in 2026?”

– “Which marketing automation platforms integrate with Salesforce?”

Step 2: Add 10–15 problem-oriented informational queries.

These capture upper-funnel AI answers where authority is established before the buying conversation begins. Examples: “How do I reduce customer churn?” or “What does an effective sales ops workflow look like?”

Step 3: Add 5 competitor-comparison queries.

These reveal whether AI models recommend you alongside or instead of known competitors. Examples: “[Competitor A] vs [Competitor B]: which is better for enterprise?” Run these even if they feel uncomfortable — the data is essential.

You now have a 25–35 prompt library. Document these in a shared spreadsheet that your team will use for every monthly audit.

Phase 2: Run Your First Manual Prompt Audit

Step 4: Set up your testing environment.

Open four browser tabs, one each for ChatGPT (GPT-4o), Google Gemini (Gemini 1.5 Pro or later), Perplexity AI, and Anthropic Claude. Use a private/incognito window for each to prevent personalization bias. Log into each platform with a neutral account that has no prior conversation history on the topic.

Step 5: Run each prompt across all four platforms.

Copy each prompt from your library and paste it into all four platforms simultaneously. Do not run them in sequence — time-of-day and conversation context can affect outputs. Record the raw response for each prompt/platform combination in your spreadsheet.

Step 6: Score each response against five criteria.

For each response, note:

– Is your brand mentioned? (Yes/No)

– Is it recommended? (Recommended / Mentioned incidentally / Not mentioned)

– Is it mentioned first, second, or third? (Position matters — first mention carries 2–3x the trust weight of third)

– Is the sentiment positive, neutral, or negative?

– Is the description accurate? (Check for misclassification or outdated information)

Enter these scores into your tracking spreadsheet. After your first audit, you have baseline data for every subsequent comparison.

Step 7: Calculate your initial AI Visibility Score (AVS).

AVS is calculated as: (Number of prompts where your brand is mentioned) ÷ (Total prompts run) × 100. Run this calculation per platform and overall. A brand that appears in 8 of 25 prompts on ChatGPT has an AVS of 32% on that platform. Track this number monthly.

Phase 3: Deploy a Dedicated LLM Monitoring Tool

Manual audits are essential for establishing methodology, but they do not scale. For ongoing monitoring, deploy one of the purpose-built tools listed in the comparison table above.

Step 8: Select your tool based on your team profile.

– If you’re an agency managing multiple clients: Atomic AGI (bridges your existing GA4 reporting) or GrowthOS (platform breadth).

– If you’re a solo marketer or small team: Otterly.AI (audit-based, lowest learning curve) or Frizerly Pro (built-in auto-publishing reduces workload).

– If you’re enterprise with multi-region requirements: Profound (Conversation Explorer gives the most accurate real-time AI search volume data).

Step 9: Configure your prompt library in the tool.

Import your 25–35 prompts from Phase 1 into the monitoring platform. Most tools allow you to group prompts by topic cluster, buyer stage (awareness/consideration/decision), and competitor comparison. Take the time to tag each prompt correctly — it enables filter-based reporting later.

Step 10: Set monitoring frequency.

The research report from MarketingAgent recommends running 20–30 key commercial queries monthly at minimum. For competitive categories where LLM outputs shift frequently (due to model updates and competitor content changes), weekly tracking is justified. Configure your tool’s automated schedule accordingly.

Step 11: Enable AI crawler monitoring.

This is a step most teams skip, and it is a significant mistake. AI models like ChatGPT and Claude use their own web crawlers (GPTBot and ClaudeBot, respectively) to retrieve real-time content. If your robots.txt is blocking these crawlers — intentionally or not — the AI cannot access your freshest content, which directly reduces citation share.

Go to your website’s robots.txt file and confirm that GPTBot and ClaudeBot are not blocked. If they are, you face a choice: allow them to index your full site, or selectively allow high-priority pages (product pages, case studies, research reports). For most brands, full allowance is the right call.

Phase 4: Act on the Data

Step 12: Identify your Citation Gap.

Once you have your first month of monitoring data, run a “Citation Gap Analysis.” This is the process of identifying which sources the AI models are citing when they don’t mention your brand. In your monitoring tool or manually, look at the third-party sources cited in competitor-favorable responses. These are the publications, review sites, Reddit threads, and industry blogs that carry authority weight with these specific LLMs.

Step 13: Build your third-party validation list.

For each external source identified in Step 12, create an action item:

– Review platforms (G2, Capterra, Trustpilot): Ensure your profile is complete, accurate, and has recent reviews.

– Industry publications: Pitch contributed articles or data studies that position your brand as a primary source.

– Reddit and Quora: Monitor for relevant threads and ensure your team participates authentically with expertise-first responses.

– Wikipedia: If your brand has a Wikipedia entry, audit it for accuracy. If you don’t have one, and your brand meets notability criteria, this is worth pursuing through a professional Wikipedia editor.

Step 14: Restructure your content for GEO.

Based on the prompts where you’re missing, create or restructure content specifically designed for AI citation. The research report identifies the content formats LLMs prioritize:

- Structured FAQs with question headers and direct, concise answers

- Comparison tables that give AI models easy-to-synthesize structured data

- Clear definitions that establish your brand as the source of record for key concepts

- Original research and data that models can cite as unique source material

- Schema markup (FAQ schema, HowTo schema, Speakable schema) to make content machine-readable

Step 15: Build a monthly reporting cadence.

Create a monthly LLM Visibility Report that tracks: AVS by platform, Citation Share trend, Sentiment Polarity score, and Entity Recognition Accuracy. Compare to the prior month and prior quarter. Share this alongside — not instead of — your traditional SEO reporting, so stakeholders see both channels represented.

Expected Outcomes After 90 Days

Teams that execute this full process consistently can expect: a baseline AVS established by month one, measurable Citation Share improvement by month two (from content and third-party work initiated in Steps 12–14), and a functioning monthly reporting cadence by month three. The 18–25% shorter sales cycle effect documented in the research report becomes measurable at the account level once CRM data is correlated with LLM referral traffic.

Real-World Use Cases

B2B SaaS: Recovering from a Competitive Visibility Gap

Scenario: A mid-market project management software company discovers that when users ask ChatGPT “What is the best project management tool for remote teams?”, the AI recommends three competitors by name but never mentions their brand — despite being ranked #2 on Google for the same query.

Implementation: They run a full prompt audit across Perplexity, Gemini, and Claude and find similar gaps. Citation analysis reveals the AI is citing a G2 comparison article and two TechCrunch features that mention only their competitors. They update their G2 profile with detailed feature descriptions and use cases, pitch a contributed feature comparison to TechCrunch, and restructure their pricing page with a competitor comparison table using schema markup.

Expected Outcome: Within 60–90 days, as AI crawlers re-index the updated third-party content, their brand begins appearing in AI responses to the target prompts. AVS on ChatGPT increases from 0% to approximately 20–35% for the commercial query cluster.

Marketing Agency: Building an AI Visibility Reporting Service

Scenario: A digital marketing agency wants to offer LLM visibility auditing as a new service line to retain existing SEO clients who are asking about AI search.

Implementation: They deploy Atomic AGI to bridge their existing Google Search Console and GA4 reporting with LLM visibility data. They create a standardized prompt library for each client’s industry, run baseline audits, and produce monthly AI Visibility Reports alongside traditional SEO dashboards. Pricing is set at an additional $1,500–$3,000/month per client.

Expected Outcome: The agency differentiates from competitors offering only traditional SEO, reduces client churn by demonstrating forward-looking value, and opens a new revenue stream that scales with minimal additional headcount as the monitoring tools handle ongoing tracking.

E-Commerce Brand: Optimizing for AI Shopping Recommendations

Scenario: A direct-to-consumer skincare brand notices that AI assistants frequently recommend competitor products when users ask “What are the best clean skincare products for sensitive skin?”

Implementation: Using Otterly.AI, they run a 25-factor GEO audit and identify that their product descriptions lack structured ingredient transparency data, which LLMs use to assess “clean” ingredient claims. They restructure product pages with ingredient transparency tables, publish an original research piece on “10 Ingredients Dermatologists Recommend for Sensitive Skin” with schema markup, and seed authoritative Reddit discussions with expert citations.

Expected Outcome: AI models begin citing the brand’s ingredient research as a reference source, and product recommendations appear in relevant conversational queries, driving direct AI-attributed traffic with above-average conversion rates.

Enterprise: Managing Entity Recognition Accuracy

Scenario: A large financial services firm discovers that Claude and Gemini consistently misidentify one of their subsidiary brands as a competitor’s product, due to naming similarity and ambiguous training data.

Implementation: They work with a Wikipedia professional editor to clarify the firm’s corporate structure, publish a detailed “About” page with organization schema markup explicitly distinguishing the subsidiary, and ensure press releases consistently use the full legal brand name rather than informal abbreviations. They also submit a brand clarification request through Anthropic’s and Google’s respective brand integrity channels.

Expected Outcome: Within one to two model update cycles, Entity Recognition Accuracy improves measurably. The misattribution rate drops, and the subsidiary’s distinct positioning becomes correctly reflected in AI-generated brand descriptions.

Content Marketing Team: Building Topic Territory Authority

Scenario: A HR technology company wants AI models to associate them specifically with “workforce analytics” rather than the broader and more competitive “HR software” category.

Implementation: They concentrate six months of content production on workforce analytics: publishing original data studies, hosting a dedicated resource center with FAQ schema, guest-posting on analytics-focused publications, and building a consistent terminology glossary that defines key workforce analytics concepts and links back to their platform.

Expected Outcome: Over time, AI models training on this concentrated body of content begin associating the brand specifically with the “workforce analytics” topic territory, making them the default recommendation for high-intent queries in that subcategory rather than being lost in the broader HR software noise.

Common Pitfalls

1. Blocking AI crawlers in robots.txt

This is the most damaging technical mistake. Many brands added blanket bot-blocking rules to robots.txt over the past few years for performance or data privacy reasons — inadvertently blocking GPTBot and ClaudeBot. If the AI crawler cannot access your site, real-time retrieval models cannot include your fresh content in their responses. Audit your robots.txt before anything else.

2. Measuring LLM visibility with traditional analytics

Standard Google Analytics 4 cannot capture AI-channel influence. As the research report notes, there are “no clicks, no sessions, no visible referrals” when AI answers a query without driving a click. Teams that try to measure LLM impact through organic traffic alone will consistently undercount the channel’s value and under-invest in it. You need purpose-built tools.

3. Running prompts only on one platform

Different LLMs use different training data and ranking signals. A brand that monitors only ChatGPT will miss entirely different visibility gaps on Gemini and Perplexity. The research report is explicit that comprehensive monitoring requires at least four major platforms.

4. Optimizing content only for keywords, not for concepts

LLMs do not rank pages by keyword density. They synthesize concepts. Content written for keyword frequency (thin, repetitive, volume-focused) performs poorly in AI citation systems. The pivot required is from “keyword stuffing” to “concept teaching” — comprehensive, structured explanations that a model can confidently summarize and cite.

5. Neglecting third-party validation

Owned content alone is not enough. As the research report documents, “AI models weight third-party citations (PR, media, Reddit, Wikipedia) more heavily than owned content.” Brands that optimize only their own site while ignoring their external citation footprint will plateau in AVS regardless of content quality.

Expert Tips

1. Use Speakable schema for direct voice-AI compatibility.

Google’s Speakable schema markup explicitly flags sections of your content as suitable for AI reading and summarization. Implement it on FAQ pages, product definition pages, and key explainer content to increase the probability that AI models pull from your structured data rather than a competitor’s less-optimized copy.

2. Run competitor citation analysis quarterly, not annually.

LLM training data shifts with every model update. A competitor who was invisible in AI responses six months ago may now appear in every commercial query if they published a high-authority data study and earned significant press coverage. Quarterly citation analysis keeps your competitive intelligence current.

3. Treat original research as your highest-LLM-priority content investment.

AI models prioritize citing unique data and research because it is factually distinct. A survey, a benchmark report, or a proprietary dataset gives models a reason to cite your brand as the primary source rather than a secondary aggregator. One original research piece can generate more LLM citations than ten derivative blog posts.

4. Monitor AI crawler logs as a leading indicator.

GPTBot and ClaudeBot crawl frequency on your site is a leading indicator of future visibility. If crawler activity suddenly drops, it may signal a technical issue — a server error, a new robots.txt rule, or a CDN configuration change — before it shows up in your AVS data. Set up crawler-specific log monitoring in your web analytics infrastructure.

5. Maintain “narrative consistency” across all brand touchpoints.

AI models aggregate information from executive bios, press releases, website copy, case studies, and media coverage to form a composite “understanding” of a brand. If your positioning language shifts across these sources — different terminology, different category definitions, different target audience descriptions — the model’s representation of your brand will be blurred and inconsistent. Establish a brand terminology glossary and enforce it across every team that publishes externally.

FAQ

Q: How long does it take to see LLM visibility improvements after implementing GEO changes?

A: Timelines vary by model and content type. For platforms with real-time retrieval (like Perplexity), improvements from third-party citation changes can appear within days. For models that update their training data periodically (like earlier versions of GPT), changes may take one to three months to reflect. As a practical rule, budget 60–90 days before expecting measurable AVS movement from a content and citation initiative, and plan your reporting cadence accordingly.

Q: Is LLM visibility the same as AEO (Answer Engine Optimization)?

A: These terms are related but not identical. AEO (Answer Engine Optimization) refers to the broader practice of optimizing content to appear in any answer engine output, including Google’s featured snippets and knowledge panels. GEO (Generative Engine Optimization) is the more specific discipline of optimizing for AI-generated responses from LLMs. LLM visibility is the metric that GEO is designed to improve. Think of AEO as the umbrella and GEO as the AI-specific implementation within it.

Q: Which AI platform should I prioritize first if resources are limited?

A: Start with ChatGPT, given its scale — the research report documents over 900 million weekly active users. Visibility on ChatGPT provides the broadest immediate reach. Add Perplexity second, because its real-time retrieval model makes your current content and third-party citations directly actionable (no waiting for training data updates). Gemini third, given its integration with Google Search. Claude fourth, though Claude’s growing use in enterprise workflows makes it increasingly important for B2B brands.

Q: Do I need to create entirely new content, or can I restructure existing content for GEO?

A: Both, but start with restructuring. Most brands have existing high-quality content that is not AI-citation-ready because it lacks FAQ schema, comparison tables, or clear definitional statements. Audit your top 20–30 pages first and apply GEO formatting (headers as questions, structured tables, schema markup) before creating net-new content. This provides faster results with lower investment. New original research should be your next priority for content creation.

Q: Can negative AI sentiment about my brand be corrected?

A: Yes, but it requires a sustained effort on third-party sources, not just owned content. Negative sentiment in AI responses typically originates from negative reviews on aggregator sites (G2, Reddit, consumer review platforms) or critical press coverage. The correction strategy involves generating a volume of positive, factual third-party content that outweighs the negative signals — through review generation campaigns, earned media, and authoritative guest contributions. This is a slower process than technical fixes but it is effective over a 6–12 month timeline.

Bottom Line

LLM visibility is the new metric that determines whether your brand exists in the minds of AI-assisted buyers — and in 2026, that population is growing faster than any other discovery channel. The tools to track it exist and have matured significantly: platforms like Profound, Otterly.AI, GrowthOS, and Atomic AGI give practitioners the infrastructure to monitor AVS, Citation Share, and Sentiment Polarity across the major AI platforms at scale. The strategy to improve it is teachable: structured content, schema markup, third-party citation building, and consistent brand narrative. The brands that invest in this discipline now — while most of their competitors are still debating whether it matters — will establish the kind of AI-layer authority that, as the research documents, shortens sales cycles by 18–25% and captures conversion-ready traffic that never shows up in traditional analytics. Start with a manual prompt audit this week, and build from there.

Primary research source: MarketingAgent AI Brand Visibility Research Report | Original article: Hootsuite — LLM Visibility: What it is and how to track it in 2026 | Published: March 10, 2026

0 Comments