Voice AI Just Became a Marketing Channel

Two foundational technology releases — from Thinking Machines and OpenAI — dropped within days of each other in May 2026 and together solved the latency problem that kept voice AI from being a viable marketing channel. Your brand is about to get a literal voice online, and the window to build that strategy before competitors do is open right now. Work through these steps and you’ll have a clear audit of your current voice presence, a framework for brand voice design, and a prioritized action list for deploying real-time voice AI.

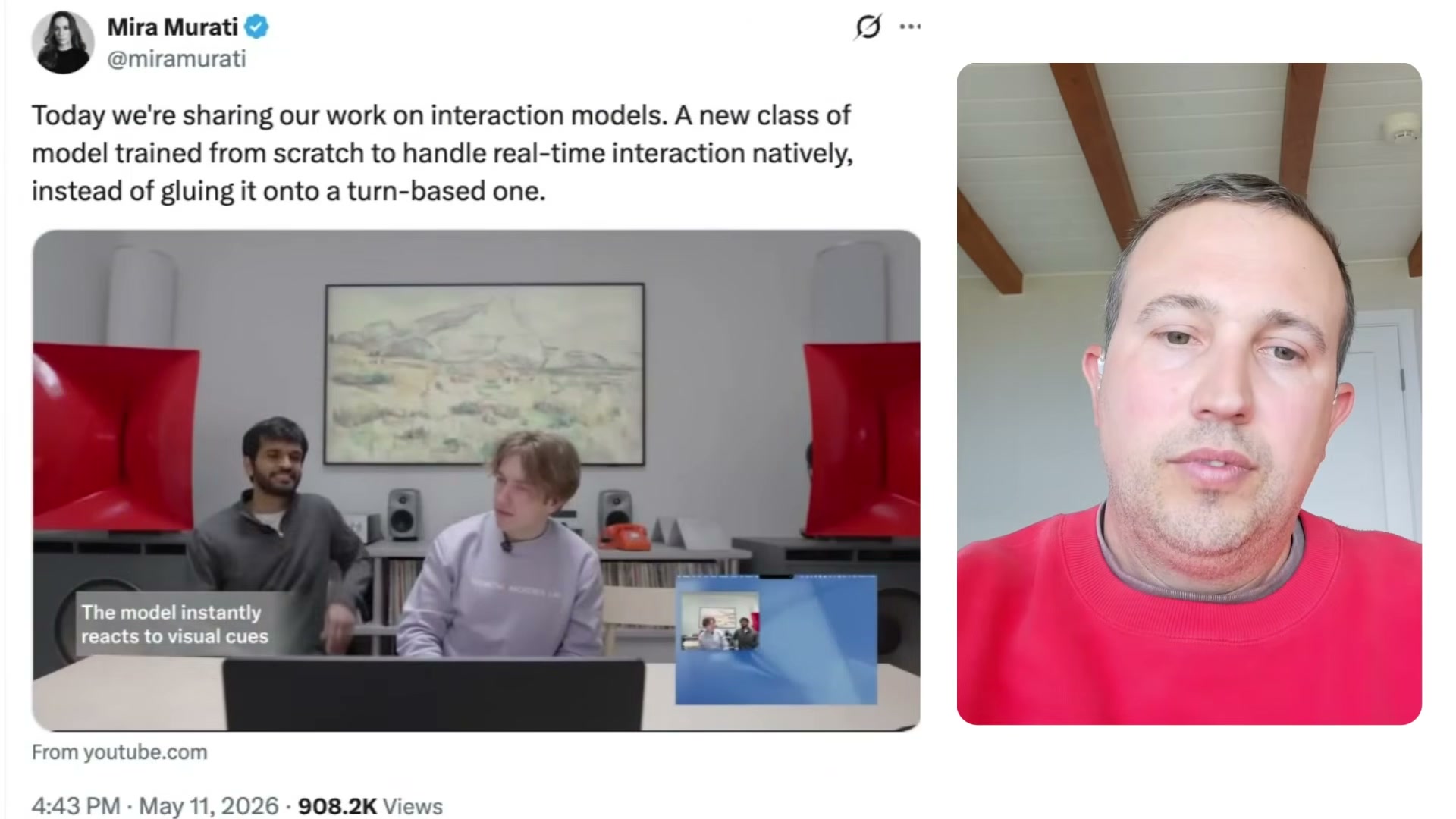

- Watch the Thinking Machines live demo to understand what real-time multimodal AI actually means in practice. Mira Murati — formerly CTO of OpenAI — founded Thinking Machines to build AI natively for real-time interaction rather than adapting text-based models with voice bolted on. The demo shows the model watching a live video stream and responding mid-conversation when someone enters the frame, without waiting for a turn to end — a capability the company calls audio interjection.

- Watch the OpenAI GPT Realtime 2 demo to understand the second major breakthrough. The headline improvement is not audio quality — it’s conversational naturalness and the ability to trigger back-end actions like CRM updates in the middle of a call, with low enough latency that the interaction feels uninterrupted.

- Watch the Sesame AI demo to see what emotive, brand-aligned voice delivery looks like when it’s done well. Capability and polish are different engineering problems, and Sesame AI is an early example of solving the second one — voice that sounds connected to a brand rather than generic.

- Treat voice as a channel, not a feature or an IT project. The strategic argument here is precise: chat is moving to voice, and customer expectations will follow. Brands that hand voice AI to engineering rather than marketing will lose control of how they sound — and in an attention-scarce environment, first impressions in voice carry the same weight as visual identity.

- Define your brand voice parameters (tone, warmth, accent) and use a tool like ElevenLabs to create a custom voice asset. This is a branding decision with the same strategic weight as choosing a typeface; it belongs in marketing, not a vendor default.

- Write custom instructions (system prompts) for your voice agents to keep conversations on-brand and on-task. Voice agent conversations can run 5–15 minutes, and without guardrails they will drift in ways that undermine the brand experience you’re building.

- Call your own company phone number and experience the current voice touchpoint exactly as a customer does. Listen for tone, routing logic, hold messaging, and whether any of it reflects the brand you want to project.

- Pull baseline call volume data and document current routing effectiveness. Without a baseline, you have no way to measure the impact of changes you make.

- Define the ideal end-to-end voice experience, from first word to resolution, before building anything new. The goal is not to sound human; it’s to sound like your brand.

- Start building voice strategy now, while adoption curves are still in their early stage. The latency barrier has been solved; historically, that is the inflection point where category adoption accelerates quickly.

How does this compare to the official docs?

The steps above reflect the strategic framework the episode lays out from 30,000 feet. Act 2 stress-tests each recommendation against the official documentation for ElevenLabs, the OpenAI Realtime API, and Thinking Machines, so you can move from insight to implementation with confidence.

Here’s What the Official Docs Show

Act 1 gives you the strategic map: the right mental model for why real-time voice AI belongs in your marketing stack right now. What follows layers in what official product pages confirm, clarify, and extend, so you start building with accurate information in hand.

Step 1 — Watch the Thinking Machines live demo

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Step 2 — Watch the OpenAI GPT Realtime 2 demo

The current docs support the video’s broader premise: OpenAI is actively advancing voice capabilities in its API. One product name, however, needs a correction. As of May 14, 2026, no product named “GPT Realtime 2” appears on OpenAI’s public pages. The current flagship models listed there are GPT-5.5 and GPT-5.5 Instant, and OpenAI’s voice-related release is referenced in a news article titled “Advancing voice intelligence with new models in the API.” The strategic premise holds; the specific model name does not match what is publicly visible.
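To make "triggering a back-end action in the middle of a call" concrete, here is a minimal Python sketch of the general dispatch pattern: the voice model emits a structured tool request, and your application routes it to a handler. Everything named here is hypothetical, including `update_crm_record`, the JSON request shape, and the in-memory `CRM` dictionary; none of it is taken from OpenAI's API, so check the provider's reference for the actual event formats.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical in-memory stand-in for a real CRM system.
CRM: Dict[str, Dict[str, Any]] = {"cust-001": {"name": "Dana", "plan": "basic"}}


def update_crm_record(customer_id: str, field: str, value: Any) -> Dict[str, Any]:
    """Hypothetical handler: update one field on a customer record."""
    record = CRM.get(customer_id)
    if record is None:
        return {"ok": False, "error": f"unknown customer {customer_id}"}
    record[field] = value
    return {"ok": True, "record": dict(record)}


# Registry mapping tool names (assumed) to the functions that implement them.
TOOLS: Dict[str, Callable[..., Dict[str, Any]]] = {
    "update_crm_record": update_crm_record,
}


def handle_tool_request(raw_request: str) -> Dict[str, Any]:
    """Parse a JSON tool request (assumed shape) and run the matching handler."""
    request = json.loads(raw_request)
    name = request.get("name")
    handler = TOOLS.get(name)
    if handler is None:
        return {"ok": False, "error": f"unknown tool {name}"}
    return handler(**request.get("arguments", {}))
```

The result dictionary would be handed back to the model so it can confirm the change to the caller without pausing the conversation. Rejecting unknown tool names explicitly keeps the agent from silently doing nothing when it asks for an action you have not wired up.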

Step 3 — Watch the Sesame AI demo

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Step 4 — Treat voice as a channel, not a feature or IT project

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Step 5 — Define brand voice parameters and build a custom voice on ElevenLabs

The video’s approach here matches the current docs exactly. ElevenLabs explicitly lists Voice Cloning as a core capability, and enterprise adoption across Salesforce, Disney, NVIDIA, and Meta confirms this is production-grade tooling — not a hobbyist platform. One structural clarification the tutorial skips: ElevenLabs has reorganized into three named product lines. Brand voice creation and cloning live in ElevenCreative. The conversational agent work the tutorial describes in Steps 6–9 belongs in ElevenAgents, which is explicitly positioned for customer experience. ElevenAPI is the developer access layer. Knowing which product line you’re configuring saves meaningful onboarding time.
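One way to keep brand voice decisions in marketing's hands is to write them down as a small, versionable spec before touching any vendor tool. The Python sketch below is illustrative only: the fields `tone`, `warmth`, and `accent` mirror the parameters this step names, while the `BrandVoiceSpec` class and the 0.0 to 1.0 warmth scale are assumptions that do not correspond to any ElevenLabs setting.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class BrandVoiceSpec:
    """Hypothetical brand voice brief, independent of any vendor's settings."""

    tone: str      # e.g. "confident", "playful"
    warmth: float  # assumed scale: 0.0 (cool) to 1.0 (very warm)
    accent: str    # e.g. "neutral US English"

    def validate(self) -> None:
        """Raise ValueError if the brief is incomplete or out of range."""
        if not self.tone.strip():
            raise ValueError("tone must not be empty")
        if not 0.0 <= self.warmth <= 1.0:
            raise ValueError("warmth must be between 0.0 and 1.0")
        if not self.accent.strip():
            raise ValueError("accent must not be empty")

    def to_json(self) -> str:
        """Serialize the brief so it can be reviewed and versioned like any brand asset."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)
```

A spec like this can sit next to the visual style guide, and whoever configures the voice in the vendor tool translates it into that tool's own controls.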

Step 6 — Write system prompts for your voice agents

No official documentation was found for this step — proceed using the video’s approach and verify independently.
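As a starting point for independent verification, the following Python sketch shows one way guardrail instructions could be assembled from a few brand decisions. The function name `build_system_prompt`, its parameters, and the prompt wording are all assumptions made for illustration; they are not drawn from the video or from any vendor's documentation.

```python
from typing import List


def build_system_prompt(
    brand: str, tone: str, allowed_topics: List[str], max_minutes: int
) -> str:
    """Assemble guardrail instructions for a voice agent (illustrative wording)."""
    if not allowed_topics:
        raise ValueError("list at least one allowed topic")
    topics = ", ".join(allowed_topics)
    lines = [
        f"You are the voice assistant for {brand}.",
        f"Speak in a {tone} tone at all times.",
        f"Only discuss these topics: {topics}.",
        "If the caller asks about anything else, politely steer back or offer a human handoff.",
        f"Aim to resolve the call within {max_minutes} minutes.",
    ]
    return "\n".join(lines)
```

For example, `build_system_prompt("Acme", "warm, concise", ["billing", "order status"], 10)` produces a five-line prompt. Generating the prompt from named inputs, rather than hand-editing a text blob, makes it easier for marketing to review what the agent is allowed to say.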

Step 7 — Call your own company phone number

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Step 8 — Pull baseline call volume data and document routing effectiveness

The video’s approach here matches the current docs exactly. ElevenAgents ships a built-in analytics dashboard that surfaces exactly the metrics the tutorial recommends tracking — total call volume, average call duration, per-call cost, overall success rate, and CSAT — without requiring a third-party analytics layer. The dashboard screenshot confirms 33.9K calls monitored, a 75.1% success rate, and a $0.044 average per-call cost as representative live benchmarks.
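If your current phone system only offers a raw call export, the same baseline figures can be computed by hand. The Python sketch below assumes a hypothetical `CallRecord` with duration, cost, and a resolved flag; the field names are illustrative and would need to be mapped to whatever your export actually contains.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CallRecord:
    """Hypothetical shape of one exported call; adapt fields to your own data."""

    duration_sec: float
    cost_usd: float
    resolved: bool  # True if the caller's issue was handled successfully


def baseline_metrics(calls: List[CallRecord]) -> Dict[str, float]:
    """Compute total volume, average duration, average cost, and success rate."""
    if not calls:
        raise ValueError("need at least one call record")
    total = len(calls)
    return {
        "total_calls": total,
        "avg_duration_sec": sum(c.duration_sec for c in calls) / total,
        "avg_cost_usd": sum(c.cost_usd for c in calls) / total,
        "success_rate": sum(1 for c in calls if c.resolved) / total,
    }
```

Running this once before any changes, and again after each deployment, gives you a like-for-like comparison on the metrics that matter for this step.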

Step 9 — Define the ideal end-to-end voice experience before building

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Step 10 — Start building voice strategy now

No official documentation was found for this step — proceed using the video’s approach and verify independently.

Useful Links

- OpenAI | Research & Deployment — OpenAI’s homepage and news feed, including GPT-5.5 product announcements and the “Advancing voice intelligence with new models in the API” article confirming active voice API development

- Free AI Voice Generator & Voice Agents Platform | ElevenLabs — ElevenLabs product overview covering all three product lines (ElevenCreative, ElevenAgents, ElevenAPI), Voice Cloning capabilities, and enterprise customer roster
