AI search is no longer a future trend — it’s where your customers are making decisions right now. According to NotebookLM research on GEO trends, 80% of consumers now rely on AI-written results for at least 40% of their searches, often bypassing traditional search results entirely. This tutorial walks you through a systematic process to measure which large language model platforms — ChatGPT, Perplexity, Gemini, or others — are actually driving visibility, traffic, and conversions for your brand, so you can stop guessing and start allocating your GEO effort where it pays off.

What This Is: Generative Engine Optimization and LLM Performance Measurement

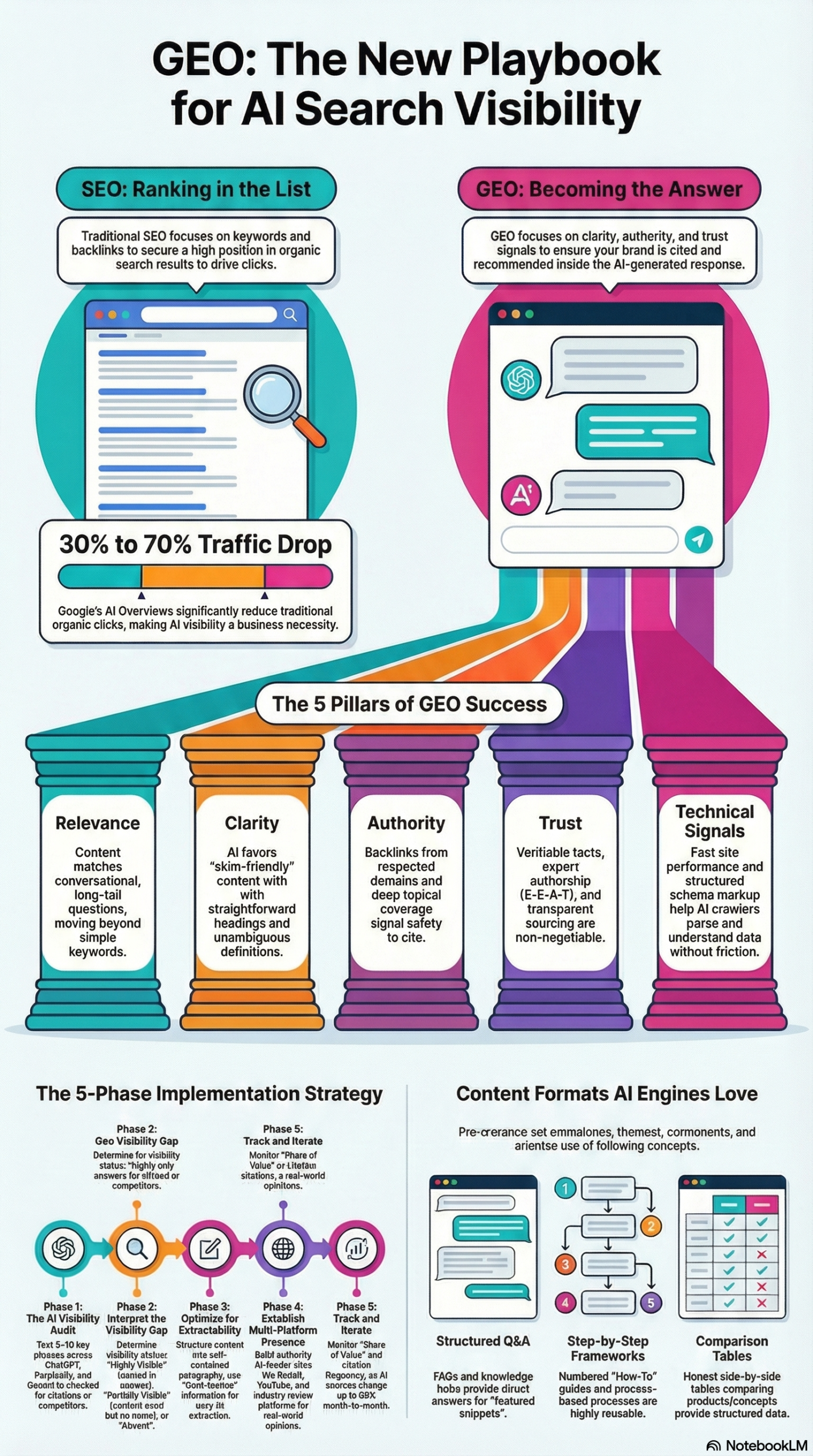

Traditional SEO gave you clean metrics: rankings, impressions, clicks. You could look at Google Search Console and know exactly how a keyword was performing. Generative Engine Optimization (GEO) breaks that model. Instead of ranking on a results page, your content either becomes the answer inside an AI-generated response — or it doesn’t exist at all.

As explained in the NotebookLM GEO research report, the core difference is this: “SEO gets your content onto a results page. GEO helps your content become the answer inside those results.” That distinction is not semantic — it fundamentally changes what you optimize for, how you measure success, and which tools you use to track performance.

There are now four primary AI answer engines that matter for most brands: Google AI Overviews, ChatGPT (OpenAI), Perplexity AI, and Gemini (Google DeepMind). Each one sources content differently, weights authority signals differently, and serves different user demographics. A brand that dominates ChatGPT citations may be completely absent from Perplexity — and vice versa. Understanding why requires understanding how each engine crawls, retrieves, and synthesizes content.

Google AI Overviews integrate directly into search results pages and pull primarily from Google’s existing index, weighted heavily by PageRank-style authority signals. Perplexity operates more like a research engine, crawling the live web in real time and citing sources explicitly — meaning citation visibility is measurable. ChatGPT (via its browse mode and GPT-4o) blends its training data with live web retrieval, making it harder to track but critical for top-of-funnel brand awareness. Gemini leverages Google’s Knowledge Graph and index, with deep integration across Google Workspace and Android.

The webinar led by SEO & AI Visibility Expert Natalie Johnson of SweetGlow Marketing and Danielle Wood of CallRail, covered in Search Engine Journal, addresses exactly this challenge: marketers are treating all LLM platforms as equal optimization targets, when in reality the conversion rates and intent levels differ significantly by platform and by industry. The actionable shift they advocate is to move from spreading GEO effort equally across all platforms to concentrating resources where high-intent traffic is actually converting.

This is not a theoretical exercise. Brands that fail to differentiate between platforms risk spending hours restructuring content for Perplexity when their buyers are actually coming from ChatGPT — or optimizing for AI Overviews while completely missing the Gemini answers that dominate their product category. The measurement framework described in this tutorial gives you a data-driven method to make that determination for your specific brand, vertical, and buyer persona.

Why It Matters: The Stakes of Getting LLM Attribution Wrong

The GEO research report documents a concrete business impact: Google’s AI Overviews are reducing organic traffic by 30% to 70% for many businesses. That is not a slow erosion — that is a cliff. If you are not visible inside AI-generated answers, you are effectively invisible to a growing share of your audience.

But the more dangerous mistake is misallocating your GEO effort. Here is what that looks like in practice:

An agency running SEO for a B2B SaaS client invests three months restructuring landing pages to rank in Google AI Overviews. Traffic from AI sources increases slightly. But their enterprise buyers — who tend to use ChatGPT and Perplexity for vendor research — aren’t coming from Google AI Overviews at all. The agency optimized for the wrong platform because they never measured where conversions were actually originating.

For marketers and agencies, LLM attribution data answers the budget question directly. When you can show a client that 68% of their AI-referred conversions are coming from Perplexity while only 12% come from ChatGPT, you have a clear case for where to focus content restructuring efforts.

For in-house SEO teams, platform-specific performance data tells you which content formats are resonating. Perplexity, for example, tends to cite structured, source-heavy content explicitly. If Perplexity is your top-converting AI channel, that tells you to invest in comparison tables, cited statistics, and structured Q&A sections — the exact formats Perplexity’s retrieval favors.

For e-commerce and D2C brands, knowing which LLM drives purchase-intent traffic matters enormously. A consumer asking ChatGPT “what’s the best running shoe for flat feet under $120?” is much further down the funnel than someone asking Google a broad informational query. LLM-specific attribution lets you quantify this intent gap and tie it to revenue.

What makes this different from standard channel attribution is the opacity of the source. LLM traffic often appears in analytics as direct or as ambiguous referral traffic, unless you have the right UTM structures and server-side logging in place. This tutorial will show you how to instrument your tracking before you start optimizing.

The Data: LLM Platform Comparison for Brand Visibility

Based on the GEO research report and the Search Engine Journal webinar framework, here is how the major LLM platforms compare across dimensions that matter for brand visibility work:

| Platform | Content Source | Citation Visibility | Crawl Type | Primary User Intent | Best For |
|---|---|---|---|---|---|
| Google AI Overviews | Google Index + Knowledge Graph | Low (no direct links shown) | Bot-crawled HTML | Informational + navigational | Mass-market brands, local SEO |
| Perplexity AI | Live web retrieval | High (inline citations) | Real-time crawl | Research + comparison | B2B, tech, finance verticals |
| ChatGPT (Browse) | Live web + training data | Medium (sources listed) | Real-time + pretrained | Conversational + task-oriented | B2C, product research, broad queries |
| Gemini | Google Index + Workspace | Medium (Google integration) | Bot-crawled HTML | Integrated task assistance | Enterprise, Google ecosystem users |
| Bing Copilot | Bing Index + GPT-4 | High (inline citations) | Bot-crawled HTML | Search + productivity | Windows users, Microsoft ecosystem |

| Optimization Signal | Google AI Overviews | Perplexity | ChatGPT | Gemini |
|---|---|---|---|---|
| Structured HTML content | Critical | Important | Moderate | Critical |
| Schema markup (JSON-LD) | Critical | Moderate | Low | Critical |
| Backlink authority | High weight | Moderate weight | Low weight | High weight |
| JavaScript rendering | Blocked | Partially blocked | Partially blocked | Blocked |
| E-E-A-T signals | Critical | Important | Moderate | Critical |
| Recency of content | Moderate | High | Moderate | Moderate |
| Citation/source count | Moderate | High | Moderate | Moderate |

Step-by-Step Tutorial: How to Identify Which LLM Is Working for Your Brand

Prerequisites

Before starting this audit, you need:

– Access to your website analytics (Google Analytics 4 or equivalent)

– Ability to add UTM parameters or custom tracking to your site

– A list of 10–20 target queries your brand should appear in

– Access to Google Search Console

– Accounts on ChatGPT, Perplexity, Gemini, and Bing Copilot (free tiers work for audits)

Phase 1: Establish Your Baseline with an AI Visibility Audit

The first step is to understand where you stand before you optimize anything. This is the manual audit phase.

Step 1: Build your query list. Pull your top 20 non-branded organic keywords from Google Search Console. Add 10 question-format queries your buyers would ask an AI assistant — not just keyword phrases, but full questions like “what is the best [your category] for [use case]?” These conversational queries are exactly how AI engines receive prompts.

Step 2: Run each query across all four platforms. Open ChatGPT, Perplexity, Google (for AI Overviews), and Gemini. Enter each query and record the response. You are specifically looking for:

– Is your brand mentioned by name?

– Is your brand cited as a source?

– Is a competitor mentioned instead?

– What is the tone — positive, neutral, or negative?

– What content is being cited (blog posts, product pages, third-party reviews)?

Step 3: Build a visibility scorecard. Create a spreadsheet with columns: Query | Platform | Brand Mentioned (Y/N) | Cited Source | Competitor Named | Tone. This becomes your baseline AI Visibility Score. According to the GEO research framework, tracking “share of voice” — how frequently you appear vs. competitors — is the primary metric for AI visibility performance.
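To make the scorecard concrete, here is a minimal Python sketch of how share of voice could be computed from its rows. The row layout, the sample queries, and the `share_of_voice` function are assumptions for illustration, not a format the GEO research report prescribes; it compares how often your brand is mentioned with how often a competitor is named on each platform.

```python
from collections import defaultdict

# Hypothetical scorecard rows: (query, platform, brand_mentioned, competitor_named)
SCORECARD = [
    ("best crm for small business", "Perplexity", True, True),
    ("best crm for small business", "ChatGPT", False, True),
    ("crm with email automation", "Perplexity", True, False),
    ("crm with email automation", "ChatGPT", False, True),
]

def share_of_voice(rows):
    """Per platform, return (brand mention rate, competitor mention rate)."""
    totals = defaultdict(int)
    brand_hits = defaultdict(int)
    competitor_hits = defaultdict(int)
    for _query, platform, brand_mentioned, competitor_named in rows:
        totals[platform] += 1
        brand_hits[platform] += int(brand_mentioned)
        competitor_hits[platform] += int(competitor_named)
    return {
        platform: (brand_hits[platform] / count, competitor_hits[platform] / count)
        for platform, count in totals.items()
    }

for platform, (brand, competitor) in share_of_voice(SCORECARD).items():
    print(f"{platform}: brand {brand:.0%}, competitors {competitor:.0%}")
```

Exporting the spreadsheet to CSV and loading its rows into the same tuple shape would let you reuse the function on real audit data.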

Phase 2: Instrument Your Analytics for LLM Traffic Attribution

Manual audits tell you where you appear. Analytics tell you what that appearance is worth. The challenge is that most LLM platforms do not pass clean referral data by default. Here’s how to capture it.

Step 4: Identify existing LLM referral traffic. In Google Analytics 4, navigate to Reports > Acquisition > Traffic Acquisition. Filter for referral traffic and look for these domains in your source data:

– chatgpt.com and chat.openai.com (ChatGPT; chatgpt.com is the current domain, chat.openai.com the older one)

– perplexity.ai

– gemini.google.com

– copilot.microsoft.com or bing.com

– you.com, phind.com, claude.ai (secondary platforms)

Note: A significant portion of LLM-driven traffic arrives as “direct” traffic in GA4 because many users copy URLs from AI answers rather than clicking through tracked referrals. This means your LLM traffic is likely higher than your referral data shows.
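If you export referrer URLs from your analytics or server logs, the domain check above can be automated. The sketch below is one possible approach using only the Python standard library; the `LLM_DOMAINS` map and `classify_referrer` function are illustrative names. The map includes chatgpt.com alongside chat.openai.com, and it deliberately leaves out bing.com, since that domain also carries ordinary search traffic.

```python
from urllib.parse import urlparse

# Referrer domains mapped to platform labels (extend for secondary platforms).
LLM_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}

def classify_referrer(referrer_url):
    """Return the LLM platform for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    for domain, platform in LLM_DOMAINS.items():
        # Match the domain itself or any subdomain of it.
        if host == domain or host.endswith("." + domain):
            return platform
    return None

print(classify_referrer("https://www.perplexity.ai/search?q=best+crm"))
```

Sessions with an empty referrer return `None`, which matches the caveat in the note: this only labels traffic that arrives with referral data intact.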

Step 5: Set up UTM-aware landing pages. For Perplexity (which shows explicit citations), you can verify citation clicks by checking your referral report directly. For ChatGPT and Gemini where click attribution is harder to capture, create dedicated landing page variants with UTM parameters baked into the canonical URL you want cited. Use utm_source=chatgpt or utm_source=perplexity appended to the URLs you are actively building citations for. Submit these pages via your sitemap so crawlers index the UTM-aware versions.
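As a rough illustration of the tagging step, the following sketch appends UTM parameters to a URL without disturbing any query string it already has. The `add_utm_source` helper and the `ai_referral` medium value are assumptions chosen for this example; only `utm_source=chatgpt` and `utm_source=perplexity` come from the step above.

```python
from urllib.parse import urlparse, parse_qsl, urlencode, urlunparse

def add_utm_source(url, source, medium="ai_referral"):
    """Return url with utm_source and utm_medium set, keeping existing parameters."""
    parts = urlparse(url)
    query = dict(parse_qsl(parts.query, keep_blank_values=True))
    query["utm_source"] = source
    query["utm_medium"] = medium  # hypothetical medium label
    return urlunparse(parts._replace(query=urlencode(query)))

print(add_utm_source("https://example.com/pricing?plan=pro", "perplexity"))
```

Generating the tagged URLs from one function keeps the parameter names consistent, which matters later when you segment by source.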

Step 6: Set up conversion reporting segmented by AI source. In GA4, build a segment in Explore called “AI Referral Traffic” that includes sessions whose source matches the LLM referral domains identified in Step 4. Apply this segment to your key events (GA4’s current name for conversions), such as form fills, purchases, and demo requests, so you can measure conversion rate by AI platform. This is the measurement model the Search Engine Journal webinar recommends: one that “ties AI search activity to real business outcomes.”
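Outside the GA4 interface, the same measurement can be approximated on exported session data. This is a minimal sketch assuming each exported row carries a platform label and a converted flag; the row shape, sample data, and function name are hypothetical.

```python
from collections import Counter

# Hypothetical exported sessions: (platform, converted)
SESSIONS = [
    ("Perplexity", True),
    ("Perplexity", False),
    ("ChatGPT", False),
    ("ChatGPT", False),
    ("ChatGPT", True),
    ("ChatGPT", False),
]

def conversion_rate_by_platform(rows):
    """Return conversions divided by sessions for each platform."""
    total = Counter()
    converted = Counter()
    for platform, did_convert in rows:
        total[platform] += 1
        if did_convert:
            converted[platform] += 1
    return {platform: converted[platform] / total[platform] for platform in total}

print(conversion_rate_by_platform(SESSIONS))
```

With only a handful of sessions per platform these rates are noisy, so it is worth checking the session counts before drawing conclusions from the percentages.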

Phase 3: Diagnose Why You Are or Are Not Appearing

Once you have your baseline and your analytics instrumented, you can diagnose the gaps. The GEO research report identifies five pillars that determine AI visibility: Relevance, Clarity, Authority, Trust, and Technical Signals. Use these as your diagnostic framework.

Step 7: Run a content clarity audit. Open your top 10 landing pages. For each one, answer:

– Do the first 100 words contain a clear, one-sentence definition of what this page is about?

– Are headings descriptive enough to stand alone without surrounding context?

– Does each major paragraph make sense if extracted in isolation — without relying on “as discussed above” or “see our earlier section”?

Per the GEO research report, AI systems break content into “chunks” or “vectors” and retrieve relevant passages to assemble an answer. If your paragraphs require context from surrounding sections to make sense, they will not be selected by AI engines. Rewrite for independence.
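Part of this audit can be pre-screened automatically. The sketch below flags paragraphs containing phrases that lean on earlier context; the phrase list is a small illustrative sample built from the examples in this tutorial, not an exhaustive rule set, and a flagged paragraph still needs a human read.

```python
import re

# Illustrative phrases that suggest a paragraph depends on surrounding text.
CONTEXT_PHRASES = [
    "as discussed above",
    "as mentioned earlier",
    "as we discussed",
    "see our earlier section",
]

def flag_dependent_paragraphs(text):
    """Return (paragraph index, matched phrases) for paragraphs that lean on context."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        lowered = paragraph.lower()
        hits = [phrase for phrase in CONTEXT_PHRASES if phrase in lowered]
        if hits:
            flagged.append((index, hits))
    return flagged

SAMPLE = "A CRM stores customer records.\n\nAs discussed above, pricing varies by seat."
print(flag_dependent_paragraphs(SAMPLE))
```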

Step 8: Check your technical crawlability. Per the GEO research report, most LLM crawlers cannot render JavaScript. Google Search Console’s URL Inspection tool shows your pages as Googlebot renders them, with JavaScript executed, so treat that view as a best case and cross-check the raw HTML against the following:

– Is your core content visible in the HTML source (not loaded by JavaScript)?

– Have you verified that LLM crawlers — specifically the GPTBot, ClaudeBot, and PerplexityBot user agents — are not blocked in your robots.txt?

– Are you running aggressive bot-blocking at your CDN level that might catch AI crawlers as false positives?
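The robots.txt part of this checklist can be tested with Python's standard `urllib.robotparser` module. The sketch below parses a robots.txt body supplied as a string, so it runs offline; the sample rules and the `blocked_ai_crawlers` helper are hypothetical, and the user agent names are the three mentioned above. It does not detect CDN-level blocking, which has to be checked in your CDN's own logs.

```python
from urllib.robotparser import RobotFileParser

AI_USER_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

def blocked_ai_crawlers(robots_txt, url="https://example.com/"):
    """Return the AI user agents that robots_txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, url)]

# Hypothetical robots.txt that blocks GPTBot but allows everyone else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_ai_crawlers(SAMPLE_ROBOTS))
```

To test a live site, you could fetch its robots.txt yourself and pass the text in, or use the parser's `set_url` and `read` methods instead of `parse`.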

Step 9: Audit your entity consistency. According to the GEO research report, AI systems determine a brand’s category and context by comparing descriptions across platforms. Check that your brand description is identical — same phrasing, same category terms — across your LinkedIn company page, X (Twitter) bio, Crunchbase profile, and your own website’s About page. Inconsistencies cause AI engines to misclassify your brand or fail to build a confident entity association.
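A quick way to spot drift is to compare each profile's description against the one on your own site. This sketch assumes you have pasted the descriptions into a dictionary by hand; the profile names and text are invented for illustration. It ignores case and extra whitespace, which is a leniency assumption on top of the stricter "identical phrasing" guidance.

```python
def normalize(text):
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())

def mismatched_profiles(descriptions, reference="website"):
    """Return profile names whose description differs from the reference profile."""
    target = normalize(descriptions[reference])
    return sorted(
        profile for profile, text in descriptions.items()
        if normalize(text) != target
    )

# Hypothetical brand descriptions collected from each profile.
PROFILES = {
    "website": "Workflow automation software for finance teams",
    "linkedin": "Workflow  automation software for finance teams",
    "x": "workflow automation software for finance teams",
    "crunchbase": "SaaS",
}

print(mismatched_profiles(PROFILES))
```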

Phase 4: Prioritize Which LLM to Optimize For First

Step 10: Cross-reference your audit data. You now have two data sets: your manual visibility scorecard (Phase 1) and your GA4 LLM attribution data (Phase 2). Cross-reference them. Look for platforms where:

– You appear in AI responses but are NOT getting attributed traffic (citation with no click — a technical tracking gap)

– You DO get referral traffic from a platform but are NOT appearing in manual audits (possible indirect citation via third-party sites)

– You are absent on a platform where your top competitors are consistently cited
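The three patterns above can be expressed as a small decision function once both data sets are in hand. The labels, inputs, and sample numbers in this sketch are assumptions made for illustration; the logic simply mirrors the list.

```python
def classify_gap(appears_in_audit, referral_sessions, competitor_cited):
    """Map one platform's audit and analytics signals to a follow-up label."""
    if appears_in_audit and referral_sessions == 0:
        return "tracking gap"        # cited, but no attributed clicks
    if not appears_in_audit and referral_sessions > 0:
        return "indirect citation"   # traffic arrives without a visible mention
    if not appears_in_audit and competitor_cited:
        return "competitive gap"     # competitors cited where you are absent
    return "no gap flagged"

# Hypothetical inputs: (appears_in_audit, referral_sessions, competitor_cited)
PLATFORMS = {
    "Perplexity": (True, 0, True),
    "ChatGPT": (False, 35, False),
    "Gemini": (False, 0, True),
    "Google AI Overviews": (True, 120, True),
}

gaps = {name: classify_gap(*signals) for name, signals in PLATFORMS.items()}
print(gaps)
```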

Step 11: Apply the “conversion weight” filter. Not all LLM traffic is equal. Per the Search Engine Journal webinar, the goal is to “stop spreading GEO effort equally and concentrate it where it converts.” Apply your GA4 conversion rate data to your LLM traffic segments. If Perplexity sends 40% fewer sessions than ChatGPT but converts at 3x the rate, Perplexity is your highest-priority optimization target.
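The arithmetic behind that example is worth spelling out: 40% fewer sessions means 0.6 times the volume, and 0.6 multiplied by 3 gives 1.8 times the conversions. The sketch below works it through with made-up numbers; the session count and the 1% base rate are assumptions, and only the 40% and 3x figures come from the example above.

```python
def expected_conversions(sessions, conversion_rate):
    """Sessions multiplied by conversion rate."""
    return sessions * conversion_rate

chatgpt_sessions = 1000                        # hypothetical volume
chatgpt_rate = 0.01                            # hypothetical 1% conversion rate
perplexity_sessions = chatgpt_sessions * 0.6   # 40% fewer sessions
perplexity_rate = chatgpt_rate * 3             # 3x the conversion rate

chatgpt_conversions = expected_conversions(chatgpt_sessions, chatgpt_rate)
perplexity_conversions = expected_conversions(perplexity_sessions, perplexity_rate)
ratio = perplexity_conversions / chatgpt_conversions

print(f"Perplexity yields {ratio:.1f}x the conversions of ChatGPT")
```

The ratio does not depend on the base numbers chosen, so the conclusion holds for any session volume as long as the 40% and 3x relationships do.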

Step 12: Set a 30-day sprint goal. Based on your analysis, choose one platform and one content gap to address in the next 30 days. This might be restructuring three product pages to pass Perplexity’s citation criteria, or adding JSON-LD schema to your top-converting pages to improve Google AI Overviews visibility. The sprint model prevents the common mistake of trying to optimize for all platforms simultaneously with diluted effort.

Phase 5: Monitor, Iterate, and Expand

Step 13: Run your audit queries weekly. AI engine outputs are not static. The same query can produce different results as the engine’s training data updates, as competitors publish new content, and as your own content earns or loses citations. Block 30 minutes weekly to re-run your top 10 queries across all platforms and update your scorecard.
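If you keep each week's scorecard, comparing two snapshots shows which mentions you gained or lost. This is a minimal sketch assuming each snapshot is a dictionary keyed by (query, platform) with a mentioned flag; the structure and sample data are hypothetical.

```python
def mention_changes(previous, current):
    """Return (gained, lost) lists of (query, platform) keys between two snapshots."""
    gained = sorted(
        key for key, mentioned in current.items()
        if mentioned and not previous.get(key, False)
    )
    lost = sorted(
        key for key, mentioned in previous.items()
        if mentioned and not current.get(key, False)
    )
    return gained, lost

# Hypothetical weekly snapshots: (query, platform) -> brand mentioned?
LAST_WEEK = {("best crm", "Perplexity"): True, ("best crm", "ChatGPT"): False}
THIS_WEEK = {("best crm", "Perplexity"): False, ("best crm", "ChatGPT"): True}

gained, lost = mention_changes(LAST_WEEK, THIS_WEEK)
print("gained:", gained)
print("lost:", lost)
```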

Step 14: Track sentiment alongside citations. Being mentioned in an AI response is not always positive. Per the GEO research report, sentiment tracking is a critical component of AI visibility monitoring — you need to know if AI engines are describing your brand with a positive, neutral, or negative framing. Read the full AI response, not just the citation.

Step 15: Expand to “query fanout” research. When you enter a query into Perplexity or ChatGPT, the engine internally generates a set of sub-questions to assemble its answer. According to the GEO research report, observing this “fanout” — the secondary searches an AI generates — reveals content gaps you can fill with targeted pages. In Perplexity, you can often see the search queries the engine ran to build its response. Capture these and add them to your content roadmap.

Real-World Use Cases

Use Case 1: B2B SaaS — Identifying Which LLM Drives Demo Requests

Scenario: A mid-market project management software company wants to know if their GEO efforts are translating to pipeline.

Implementation: They run a manual audit across ChatGPT, Perplexity, and Gemini for queries like “best project management software for remote teams” and “project management tools with time tracking.” They find they appear consistently in Perplexity but are absent from ChatGPT responses. In GA4, they segment referral traffic by LLM source and find that Perplexity referrals convert to demo requests at 4.2% — well above their 1.8% organic average — while ChatGPT referrals are negligible. They prioritize restructuring their comparison pages to increase Perplexity citations further and create a targeted content series addressing the ChatGPT gap.

Expected Outcome: 20–35% increase in Perplexity citation frequency within 60 days, measurable lift in demo conversion from AI-referred traffic.

Use Case 2: E-Commerce — Mapping LLM Platform to Purchase Intent

Scenario: A direct-to-consumer supplement brand notices an uptick in “direct” traffic that doesn’t match any campaign. They suspect LLM-driven dark traffic.

Implementation: They check their GA4 referral report and find unexplained traffic from perplexity.ai. They run manual audits for product-specific queries (“best magnesium supplement for sleep”) and find their brand appearing in Perplexity answers with explicit citations to a third-party review site — not their own site. They restructure their product pages with clear ingredient definitions, sourced health claims with PubMed citations, and a structured FAQ section targeting the exact question format Perplexity users ask. Per the GEO research report, using comparison tables and modular paragraphs dramatically improves extraction probability.

Expected Outcome: Direct citation from brand-owned pages within 45–60 days, with measurable referral attribution replacing dark traffic.

Use Case 3: Agency — Building a GEO Reporting Dashboard for Clients

Scenario: A digital marketing agency wants to add AI visibility reporting to its monthly client deliverables.

Implementation: The agency builds a standardized “AI Visibility Score” for each client using the scorecard methodology from Phase 1. They run 20 target queries monthly across four platforms, tracking brand appearance rate, citation source, and sentiment. They combine this with GA4 LLM attribution segments to show month-over-month trends. Following the Search Engine Journal webinar recommendation to tie “AI search activity to real business outcomes,” they add a conversion-by-LLM-source column to their reporting.

Expected Outcome: Clients gain a clear view of AI channel ROI, enabling evidence-based GEO budget decisions rather than platform-equal investment.

Use Case 4: Enterprise Brand — Fixing Entity Misclassification

Scenario: A software company called “Signal” discovers that AI engines are confusing their brand with competitor tools or unrelated entities when answering queries.

Implementation: Following the entity clarity guidance in the GEO research report, they audit their brand description across all platforms. They find inconsistencies: LinkedIn describes them as “workflow automation,” their website says “business process management,” and Crunchbase says “SaaS.” They standardize all descriptions to one precise phrase, add JSON-LD Organization schema with consistent name/description/URL triples, and publish a “What is Signal?” FAQ page targeting the disambiguation question directly.
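For the schema step, a JSON-LD Organization block can be generated from the same standardized description so the two never drift apart. The sketch below uses the schema.org `Organization` type with its `name`, `description`, `url`, and `sameAs` properties; the company details and URLs are placeholders, and the `organization_jsonld` helper is a hypothetical name.

```python
import json

def organization_jsonld(name, description, url, same_as):
    """Return a script tag containing schema.org Organization JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "description": description,
        "url": url,
        "sameAs": same_as,  # profile URLs that refer to the same entity
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"

snippet = organization_jsonld(
    name="Signal",
    description="Workflow automation software",       # placeholder phrase
    url="https://example.com",                         # placeholder URL
    same_as=["https://www.linkedin.com/company/example"],
)
print(snippet)
```

The output is meant to be pasted into the page's HTML head; validating it with a structured data testing tool before publishing is a sensible extra step.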

Expected Outcome: Reduced entity confusion in AI responses within 30–60 days, measurable improvement in correct brand categorization across platforms.

Use Case 5: Local Business — Targeting Google AI Overviews for High-Intent Queries

Scenario: A regional accounting firm wants to appear in AI Overviews for local tax preparation queries.

Implementation: They run a manual audit of Google AI Overviews for queries like “best CPA for small business taxes [city]” and find local competitors with more structured content dominating the responses. They implement LocalBusiness schema with JSON-LD, restructure their services pages to lead with plain-English definitions per the GEO research report’s “front-loading” guidance, and build a Q&A section targeting the exact question formats AI Overviews tend to pull from. They also ensure their Google Business Profile is fully populated, since it is a key data source for Google’s AI answers.

Expected Outcome: Appearance in AI Overviews for 3–5 target local queries within 60 days, measurable increase in call and form-fill conversions.

Common Pitfalls

Pitfall 1: Optimizing for all platforms equally without data. The Search Engine Journal webinar makes this the central point: before you reallocate budget or rewrite your GEO roadmap, measure where you actually convert. Spreading effort equally across four LLM platforms means doing everything at 25% effectiveness. Fix it by running the LLM attribution analysis in Phase 2 before touching any content.

Pitfall 2: Blocking LLM crawlers by accident. Per the GEO research report, brands must verify with IT teams that aggressive DDoS prevention is not blocking OpenAI, Anthropic, or Perplexity crawlers. A surprising number of brands optimize content for AI visibility and then find it is still invisible because GPTBot is blocked in their robots.txt or caught by their CDN’s bot management rules. Check this before any other technical optimization.

Pitfall 3: Writing for human readers instead of machine extraction. Content that flows beautifully for a human — with smooth transitions, callbacks to earlier sections, and narrative arcs — is exactly what AI engines struggle to parse. Paragraphs that reference “as we discussed above” or “as mentioned earlier” lose meaning when extracted in isolation. Every paragraph must stand alone.

Pitfall 4: Ignoring third-party citation sources. According to the GEO research report, AI systems scan the entire digital ecosystem — not just your owned website. Brands that focus exclusively on restructuring their own pages while ignoring their Reddit presence, G2 reviews, or industry press coverage are missing the sources AI engines actually pull from for many queries. Your optimization scope must extend beyond your domain.

Pitfall 5: Treating AI visibility as a one-time project. LLM engines update their training data, crawling behavior, and ranking signals continuously. A brand that achieves strong citation frequency in January may see it drop by March as a competitor publishes more authoritative content. The monitoring cadence in Phase 5 is not optional — it is how you protect gains.

Expert Tips

Tip 1: Use Perplexity’s “Sources” panel as a competitive intelligence tool. When Perplexity answers a query where you want to rank, it shows exactly which URLs it cited. Click through those sources and analyze them: What format are they using? What heading structure? What data points did Perplexity extract? That reverse-engineering tells you exactly what to replicate in your own content — better than any general GEO guide.

Tip 2: Write explicit definition sentences for every technical term. The GEO research report captures this guidance directly: “If you use technical terms in your content, explain them. Ideally in a simple sentence.” AI engines have a strong preference for content that defines its own terms. A dedicated “glossary” section at the end of technical pages can dramatically increase citation probability, as engines pull these clean, standalone definitions when assembling responses.

Tip 3: Build content that addresses “query fanout” sub-questions. AI engines internally decompose complex queries into sub-questions. If you are targeting the query “best CRM for small businesses,” the engine may internally ask: “What CRM features matter for small businesses?”, “What is the average cost of CRM software?”, and “How hard is CRM implementation?” Create dedicated pages or FAQ sections answering each of these sub-questions explicitly. According to the GEO research report, creating content that targets these secondary searches is a key advanced strategy.

Tip 4: Prioritize earned mentions over backlinks for AI authority. Traditional SEO prioritizes backlinks from authoritative domains. For AI visibility, the GEO research report notes that customer reviews on G2 or Trustpilot, community discussions on Reddit, and industry journalism carry substantial weight because AI engines scan the entire digital ecosystem. A consistent presence in these earned channels builds the type of distributed authority that AI engines use to validate brand trustworthiness.

Tip 5: Monitor your AI sentiment score, not just your citation count. Being cited in an AI response where the engine describes your product as “complex to set up” or “expensive for small teams” can do more harm than not being cited at all. Review sentiment each time you re-run your audit queries, and treat negative sentiment as a content crisis requiring an active response, whether through new FAQ content, review solicitation on third-party platforms, or direct page updates.

FAQ

Q: How do I know if my traffic is coming from LLMs if they don’t always show referral data?

The honest answer is you cannot capture 100% of it with current tools. Focus on what you can measure: check your GA4 referral report for the specific LLM domains listed in Phase 2 of this tutorial. For traffic that appears as “direct,” look for correlations — if direct traffic spikes after your brand appears in a high-visibility AI response, that is likely LLM-dark traffic. Some analytics platforms are beginning to add LLM referral detection natively; watch for GA4 and Semrush updates in this area.

Q: Which LLM platform should I prioritize if I have no data yet?

Start with Perplexity for its explicit citation visibility — it is the easiest to audit manually and the most measurable. Then add Google AI Overviews if your brand targets broad informational queries or has strong local SEO signals. ChatGPT and Gemini require more effort to track but are critical for verticals where buyers use AI assistants for research. The Search Engine Journal webinar emphasizes that conversion rates differ by industry, so no single answer applies universally — run your own audit first.

Q: Does traditional SEO still matter, or should I shift entirely to GEO?

Both. The GEO research report is explicit: “While traditional SEO remains the foundation, GEO introduces new principles regarding information structure, entity clarity, and multi-platform presence.” Google AI Overviews are built on top of the Google index, meaning organic rankings still feed AI visibility. The shift is to layer GEO practices — structured content, entity consistency, technical crawlability — on top of your existing SEO foundation, not to replace it.

Q: How often should I re-run my LLM visibility audit?

Weekly for your top 10 queries and for any queries tied directly to active campaigns or conversion goals, as described in Phase 5, and at least monthly for the rest of your query list. AI engine outputs can change within days following a competitor’s content update or an engine’s crawling cycle. The GEO research report recommends continuous monitoring of citation frequency and sentiment as a standing practice, not a quarterly exercise.

Q: Can I get my brand cited in LLMs even if I have a small website with limited authority?

Yes — especially on Perplexity and ChatGPT, which weight content quality and recency alongside domain authority. The most actionable path for lower-authority brands is to generate earned mentions on high-authority third-party platforms: detailed answers on Reddit in your niche, reviews on G2 or Trustpilot, and guest contributions to industry publications. Per the GEO research report, AI engines actively pull from these earned channels, not just owned domain content. Building a distributed authority profile is often faster for smaller brands than trying to compete on domain authority alone.

Bottom Line

Knowing which LLM is actually working for your brand is not a nice-to-have; it is the prerequisite for every GEO decision you make. The methodology in this tutorial gives you a structured path: build a manual visibility scorecard, instrument your analytics for LLM attribution, diagnose your technical and content gaps, and concentrate your optimization effort on the platform that converts. According to the GEO research report, 80% of consumers now rely on AI-generated results for at least 40% of their searches, so the brands that establish strong citation presence now will have a compounding advantage as AI search continues to displace traditional results pages. Start with a 10-query manual audit this week, add LLM segments to your GA4 conversion goals, and prioritize the single platform where your buyers are actually making decisions.
