Semrush’s content research workflow turns a single seed keyword into a data-backed pipeline covering blog posts, social content, email, and video — without a single brainstorm session. Published March 27, 2026, Semrush’s own guide walks through seven distinct tools to surface what your audience is actually searching for, what your competitors are ranking on, and where your brand is invisible in AI-generated answers. In this tutorial, you will build that same pipeline from scratch using real Semrush features, organized by research phase and output channel.

What This Is

Finding content ideas is a solved problem — if you use the right toolset. Semrush’s content ideation workflow spans seven interconnected tools: Keyword Magic Tool, Topic Research, Organic Research (for Reddit analysis), Social Content AI, Competitor Analysis (Top Pages + Backlink Analytics), Keyword Gap, and the AI Visibility Overview inside the AI Visibility Toolkit.

The workflow begins with a seed keyword and fans out across multiple discovery sources. According to Semrush’s content ideas guide, the running example throughout their documentation is “pour over coffee” — a niche topic that, when fed through all seven tools, produces 30+ content ideas organized by content type (beginner education, comparisons, techniques, products, lifestyle/trends, brand storytelling), discovery source (which tool surfaced it), and best-fit channel (blog, social media, email, video).

The fundamental logic here is worth internalizing before you start: each tool interrogates a different data source. Keyword Magic Tool mines Google search behavior. Topic Research maps thematic relationships between subtopics. Reddit analysis via Organic Research captures natural-language audience conversations happening in real communities. Social Content AI surfaces trending and evergreen social angles. Competitor Analysis (Top Pages + Backlink Analytics) shows you what already has proven demand. Keyword Gap identifies ranking blind spots relative to your specific competitors. And AI Visibility Overview reveals where competitors are already showing up inside ChatGPT, Perplexity, and other LLM-generated responses — but you are not.

These tools are not parallel alternatives to pick from. They are sequential layers that compound on each other. The keyword you find in Keyword Magic Tool gets validated by Topic Research, enriched by Reddit thread language, checked against competitor data, and finally tested against AI search exposure. By the end, you have a content list that is justified with real demand signals, not editorial gut instinct.

The NotebookLM research report reinforces why this multi-tool approach matters in 2026. The AI Visibility Toolkit specifically tracks brand mentions, sentiment, and “Share of Voice” across LLMs like ChatGPT and Gemini. Meanwhile, ContentShake AI — the production-side complement to these research tools — generates personalized topic ideas and automated drafts with built-in SEO and readability checks. Together, they form a research-to-production loop that previously required multiple separate tools from different vendors.

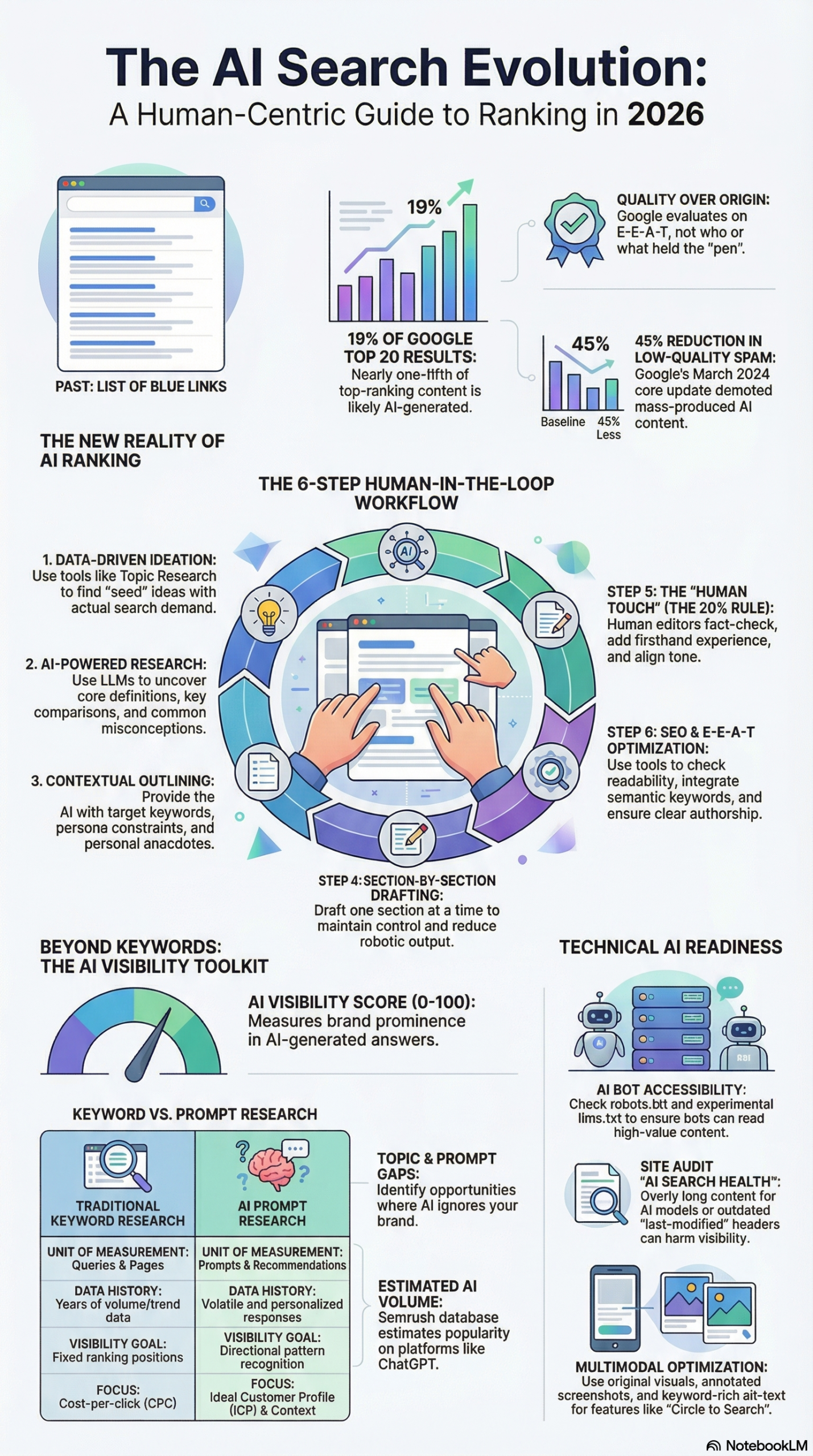

What makes this toolset particularly significant now is the addition of the AI Visibility layer. According to the NotebookLM research report, approximately 19% of content in Google’s top 20 results is likely AI-generated, and 64% of marketers report that AI content performs as well as or better than human-written content. That means the content ideas you surface through Semrush are not just traditional SEO targets — they are also candidates for AI citation optimization, where showing up inside an LLM’s response has become the new “position one” for high-intent queries.

The Semrush ecosystem also includes the Keyword Strategy Builder, which organizes keywords into Topic Clusters with designated Pillar and Subpages to build topical authority. This is the structural output of all that keyword and topic research — not just a list of ideas, but a mapped architecture that tells you how pieces of content relate to each other and which pages need to exist before others.

Why It Matters

Content teams waste enormous effort creating pieces nobody searches for. The problem is rarely creativity — it is validation. Without data, you are simultaneously guessing at audience intent, competitor gaps, and AI search exposure, with no way to prioritize one piece over another when production capacity is limited.

Semrush’s content ideation workflow solves this for three specific practitioner types:

Content strategists and SEOs who need to pitch editorial calendars with defensible justifications. The Keyword Gap “Missing” filter alone produces a list of keywords every competitor ranks for but you do not — that is an instant priority list for stakeholder presentations, backed by real traffic data rather than projections.

Agencies managing multiple client brands who need to scale content research across accounts without ballooning research hours. The ability to compare one domain against multiple competitors via Keyword Gap, then immediately surface the specific prompts where competitors appear in AI responses via AI Visibility Overview, compresses what used to be a multi-day research process into a 2–3 hour workflow per client.

Brand marketers optimizing for AI search who understand that, as the NotebookLM research report documents, “success in traditional SEO doesn’t guarantee visibility in AI search results” (a point emphasized by Alex Lindley). The AI Visibility Overview tool directly addresses this by showing the specific prompts being fed into AI engines, the AI’s actual responses, and exactly which brands are being named. If your competitor shows up in the AI’s answer to “best pour-over coffee maker” and you do not, that is an actionable content and digital PR target — not a vague impression.

What differentiates Semrush from platforms like Ahrefs or Moz is the native AI visibility layer. Both competing platforms have strong keyword and competitor research tools, but neither currently offers the direct LLM mention tracking that Semrush has built into the AI Visibility Toolkit. For 2026 content strategy, that distinction is material — especially as more buying-intent searches shift from traditional Google results to AI-synthesized recommendations.

The workflow also integrates social and community research in a way most SEO platforms do not. Using Organic Research to find Reddit threads currently ranking on Google page one — then reading those threads to surface natural-language objections and real audience frustrations — is a research technique that produces content angles you cannot reverse-engineer from keyword volume data alone. It is the difference between a headline that says “Pour Over Coffee Guide” and one that says “Is Pour Over Coffee Actually Worth the Effort? Here Is What Real Brewers Say.”

The Data

The following tables summarize the seven Semrush tools in the content ideation workflow, their data sources, primary uses, and estimated time investment. Data sourced from Semrush’s content ideas guide and the NotebookLM research report.

Tool Reference: Semrush Content Ideation Suite

| Tool | Data Source | Primary Use | Content Output |
|---|---|---|---|
| Keyword Magic Tool | Google search behavior | Keyword discovery & filtering | Blog topics, FAQ content |
| Topic Research | Semrush topic graph + search data | Thematic mapping & clusters | Pillar pages, content clusters |
| Organic Research (Reddit) | Google SERPs for reddit.com | Audience language & pain points | Community-first content, social angles |
| Social Content AI | Social trends + platform data | Trending & evergreen social angles | Social media, lifestyle content |
| Top Pages + Backlink Analytics | Competitor traffic & backlink data | Demand validation | High-priority blog targets |
| Keyword Gap | Cross-domain ranking comparison | Blind spot identification | Priority content list |
| AI Visibility Overview | LLM response data (ChatGPT, Perplexity) | AI search gap analysis | AI-optimized content, PR targets |

Workflow Phases: Time and Output Estimates

| Research Phase | Tools Used | Est. Time | Output |
|---|---|---|---|
| Seed keyword expansion | Keyword Magic Tool | 30 min | 20–50 keyword variants |
| Thematic mapping | Topic Research | 20 min | 5–10 subtopic clusters |
| Audience research | Organic Research (Reddit) | 30 min | Natural-language angles |
| Social angles | Social Content AI | 15 min | 10+ social content ideas |
| Competitor validation | Top Pages + Backlink Analytics | 30 min | Proven traffic targets |
| Gap analysis | Keyword Gap | 20 min | Missing + untapped keywords |
| AI exposure audit | AI Visibility Overview | 20 min | AI citation gaps |
| Total | All 7 tools | ~2.75 hrs (165 min) | 25–40 content ideas |
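The total row can be checked with a few lines of arithmetic. The sketch below only sums the per-phase estimates from the table above; the name `PHASE_MINUTES` is an illustrative choice and not part of any Semrush tooling.

```python
# Per-phase time estimates copied from the table above (minutes).
PHASE_MINUTES = {
    "Seed keyword expansion": 30,
    "Thematic mapping": 20,
    "Audience research": 30,
    "Social angles": 15,
    "Competitor validation": 30,
    "Gap analysis": 20,
    "AI exposure audit": 20,
}

total_minutes = sum(PHASE_MINUTES.values())
total_hours = total_minutes / 60

print(f"{total_minutes} min = {total_hours:.2f} hrs")  # 165 min = 2.75 hrs
```

Adjusting any single phase estimate in the dictionary shows how quickly the full workflow drifts toward, or past, the three-hour mark.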

AI vs. Traditional SEO: Key Performance Indicators

| Metric | Traditional SEO Focus | AI Search Optimization (AIO) Focus |
|---|---|---|
| Primary goal | Page 1 Google ranking | Brand mention in AI response |
| Research method | Keyword volume + CPC | Prompt research (evaluation queries) |
| Key signals | Backlinks, DA, on-page optimization | Schema, freshness, keyword co-occurrence |
| Measurement tool | Google Search Console, Semrush Rankings | AI Visibility Overview (Share of Voice) |
| Content format | Long-form, comprehensive | Modular: question headers + 40–60 word answers |
| Discovery stage | Informational (top of funnel) | Middle- and bottom-of-funnel (evaluation) |

Data from the NotebookLM research report.

Step-by-Step Tutorial: Build a Data-Backed Content Pipeline with Semrush

Prerequisites

- An active Semrush account (Pro, Guru, or Business tier — AI Visibility features require Guru or above)

- A seed keyword or product/service category to research

- A list of 2–5 competitor domains in your niche

- A spreadsheet or content management system to log your findings as you go

Expected total time: 2–3 hours for a complete pipeline build

Expected output: 25–40 content ideas organized by channel, cluster, and priority level

Phase 1: Seed Keyword Expansion with Keyword Magic Tool

The Keyword Magic Tool is the entry point. Its job is to take your broad seed concept and surface the specific search phrases your audience uses, filtered for realistic ranking difficulty based on your domain strength.

Step 1 — Enter your seed keyword. Open Keyword Magic Tool and type your seed term. Following the Semrush guide’s example, enter “pour over coffee.” Set your target country and language to match your audience.

Step 2 — Apply search volume and difficulty filters. Filter for monthly search volume above your minimum threshold (typically 100+ for niche topics, 1,000+ for broader categories). Then apply a Keyword Difficulty filter. If your domain authority is low to medium (DA 20–50), cap KD% at 40–50. This surfaces realistic ranking targets, not aspirational ones you cannot compete for yet.

Step 3 — Switch to the Questions tab. This single step surfaces question-based queries (“how to make pour over coffee,” “is pour over worth it,” “what grind size for pour over”) that map directly to FAQ sections, beginner guides, and video scripts. Question keywords also tend to have lower competition and higher click-through rates when they earn featured snippets.

Step 4 — Enable Personal Keyword Difficulty. If your Semrush account has your domain connected, switch to Personal Keyword Difficulty. This recalibrates difficulty scoring based on your specific domain’s historical performance, giving you a more accurate picture of what you can realistically rank for versus the generic domain-agnostic KD% score.

Step 5 — Export your top 20–30 keywords. Copy these into a spreadsheet. For each keyword, log the search volume, KD%, search intent label (informational, commercial, transactional), and the likely content type it maps to (how-to guide, comparison, buyer’s guide, FAQ, listicle). This tagging will determine which cluster each piece belongs to in the next phase.
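Once the keywords are in a spreadsheet, the filtering and tagging from Steps 2 and 5 can be sketched in a few lines of Python. This is a minimal illustration, not Semrush's export format: the column names (`keyword`, `volume`, `kd`, `intent`), the sample rows, and the `guess_content_type` heuristic are all assumptions made up for the example.

```python
import csv
import io

# Stand-in for a keyword export. Column names and numbers are invented
# for illustration and are NOT Semrush's real export format or data.
SAMPLE_EXPORT = """keyword,volume,kd,intent
how to make pour over coffee,5400,38,informational
pour over coffee maker,12100,62,commercial
what grind size for pour over,880,24,informational
pour over vs french press,1900,31,commercial
buy pour over kettle,90,18,transactional
"""

MIN_VOLUME = 100  # niche-topic floor suggested in Step 2
MAX_KD = 50       # upper end of the KD% cap suggested in Step 2


def guess_content_type(keyword: str, intent: str) -> str:
    """Rough, hand-written mapping from phrase shape to content type."""
    kw = keyword.lower()
    if " vs " in kw:
        return "comparison"
    if kw.startswith("how "):
        return "how-to guide"
    if kw.startswith(("what ", "why ", "is ", "can ")):
        return "FAQ"
    if intent in ("commercial", "transactional"):
        return "buyer's guide"
    return "listicle"


def shortlist(csv_text: str) -> list[dict]:
    """Keep rows that pass both filters, tag them, and sort easiest first."""
    kept = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        volume, kd = int(row["volume"]), int(row["kd"])
        if volume >= MIN_VOLUME and kd <= MAX_KD:
            kept.append({
                "keyword": row["keyword"],
                "volume": volume,
                "kd": kd,
                "intent": row["intent"],
                "content_type": guess_content_type(row["keyword"], row["intent"]),
            })
    return sorted(kept, key=lambda r: r["kd"])


for r in shortlist(SAMPLE_EXPORT):
    print(r["keyword"], "|", r["kd"], "|", r["content_type"])
```

Sorting by KD puts the easiest targets first; a real pipeline would likely review the content-type guesses by hand rather than trust the heuristic.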

Phase 2: Thematic Mapping with Topic Research

Keyword Magic Tool gives you individual phrases. Topic Research gives you the thematic architecture that connects them.

Step 6 — Open Topic Research with the same seed keyword. The tool maps related subtopics rather than exact phrases. It clusters related content angles around your core theme and surfaces trending headlines and audience questions for each subtopic.

Step 7 — Switch to Mind Map view. You will see your seed keyword at the center with branches extending to subtopics — for “pour over coffee,” these might include “brewing techniques,” “equipment comparisons,” “beginner basics,” “coffee bean origins,” and “pour over vs. other methods.” Each branch represents a potential pillar page or content cluster in your site architecture.

Step 8 — Identify high-volume and trending subtopics. Click into individual topic cards. Each card shows trending headlines, frequently asked questions, and related searches. Flag topics marked “High Volume” or “Trending” — these are your highest-priority cluster candidates because they have both proven demand and current momentum.

Step 9 — Build your cluster map. Transfer the most promising subtopics to your spreadsheet as cluster headings. Each cluster will eventually contain 3–8 supporting pieces of content. Per the NotebookLM research report, the Keyword Strategy Builder complements this step by designating specific Pillar Pages and Subpages within each cluster, which is how Semrush’s ecosystem formalizes the topical authority architecture this research is building toward.
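If you prefer to keep the cluster map in code rather than a spreadsheet, a small data structure is enough. The sketch below is one possible shape, assuming a hypothetical `Cluster` class; it does not reflect how the Keyword Strategy Builder stores clusters internally, and the two-way pillar/subpage linking is an illustrative convention.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """One topic cluster: a pillar page plus its supporting subpages."""
    pillar: str
    subpages: list[str] = field(default_factory=list)

    def in_target_range(self, low: int = 3, high: int = 8) -> bool:
        """True when the cluster has the 3-8 supporting pieces Step 9 aims for."""
        return low <= len(self.subpages) <= high

    def internal_links(self) -> list[tuple[str, str]]:
        """(source, target) pairs linking each subpage to the pillar and back.

        The two-way linking pattern is an assumption for illustration.
        """
        links = []
        for page in self.subpages:
            links.append((page, self.pillar))
            links.append((self.pillar, page))
        return links


brewing = Cluster(
    pillar="Complete Pour Over Coffee Guide",
    subpages=[
        "What grind size for pour over",
        "Pour over vs. French press",
        "Beginner pour over mistakes",
    ],
)

print(brewing.in_target_range())      # True: three subpages is inside 3-8
print(len(brewing.internal_links()))  # 6: two links per subpage
```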

Phase 3: Audience Language Research via Reddit in Organic Research

This is the most underused technique in the workflow and consistently produces the highest-quality content differentiation. The insight is simple: Reddit threads are ranking on page one of Google for your target keywords. Reading those threads gives you the exact language your audience uses, not a keyword-abstracted version of it.

Step 10 — Open Organic Research and enter reddit.com as the domain. You are not analyzing your own site — you are using Semrush to audit Reddit’s own organic rankings.

Step 11 — Filter by your target keyword. In the keyword filter field, enter one of your target keywords from Phase 1. This filters Reddit’s organic ranking data to show you which specific Reddit threads are currently ranking on Google page one for that search phrase.

Step 12 — Click through to the actual Reddit threads. Read the top posts and the comment threads beneath them. You are mining for four categories of signal:

- Natural-language phrases your audience uses (not sanitized SEO terminology)

- Objections and skepticism (“Is pour-over actually worth the effort compared to a drip machine?”)

- Beginner confusion points (“I have no idea where to start, overwhelmed by options”)

- Strong opinions and debates that could anchor a comparison or opinion-led piece

Step 13 — Capture 5–10 specific phrases, questions, or frustrations. These become actual H2/H3 headings in your content, phrased the way your audience thinks rather than the way a keyword tool abstracts it. A heading like “Is Pour Over Coffee Worth the Work? Here Is What 200 Home Brewers Actually Said” is likely to earn a higher click-through rate and longer time-on-page than “Benefits of Pour Over Coffee,” because it mirrors real audience language.

Phase 4: Social and Trend Research with Social Content AI

Step 14 — Open Social Content AI and browse the trending news feed. Filter by your topic category. This surfaces current news stories, viral posts, and industry developments adjacent to your keyword. These are time-sensitive angles suited to social posts, email newsletter intros, and short-form video scripts that need to feel current.

Step 15 — Switch to Ideas by Topic. Enter your seed keyword to surface evergreen content angles with lifestyle and aspirational framing. Social content operates differently from search-intent content — it needs to be shareable and visually compelling, not just comprehensive. The angles surfaced here often have emotional or narrative dimensions that pure keyword research misses entirely.

Step 16 — Tag and log 5–8 social content ideas. Add these to your spreadsheet with channel assignments (Instagram, LinkedIn, TikTok, Pinterest, email newsletter) based on format fit. A “top 5 pour-over coffee mistakes” angle plays well on short-form video. A “why pour-over changed how I think about morning rituals” angle fits LinkedIn or email better.

Phase 5: Demand Validation with Top Pages and Backlink Analytics

This phase answers the question: what content already has proven traffic and link equity in your space? You are not copying competitors — you are validating demand before investing in production.

Step 17 — Run Top Pages reports for each competitor domain. Open Organic Research, switch to a competitor’s domain, and navigate to Top Pages. This shows you which of their pages drive the most organic traffic. Do this for each competitor in your list. A competitor’s beginner guide to pour-over coffee being their third-highest traffic page is a data-backed signal that this content type has significant demand.

Step 18 — Cross-reference with Backlink Analytics. Run the same competitor pages through Backlink Analytics to see which have accumulated the most inbound links. High-traffic combined with high-backlink pages are your priority targets for building superior alternatives — what is commonly called “skyscraper content” — because the same domains that link to those pages are also potential link sources for a better, more current version.

Step 19 — Identify your gaps by comparison. Look for high-traffic competitor pages where you have no equivalent piece. Flag these in your spreadsheet with “No Equivalent” — they are high-priority entries into your content roadmap because the traffic demand is demonstrated and you currently have zero share of it.
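The comparison in Step 19 is essentially a set difference between competitor topics and your own. The following sketch assumes you have hand-labelled each competitor page with a topic; the domains, topic labels, and traffic figures are invented, and `flag_no_equivalent` is a hypothetical helper rather than a Semrush feature.

```python
# Hypothetical Top Pages notes. Domains, topic labels, and traffic numbers
# are invented; the topic label is something you assign by hand per page.
competitor_top_pages = [
    {"domain": "competitor-a.example", "topic": "beginner pour over guide", "traffic": 4200},
    {"domain": "competitor-b.example", "topic": "pour over vs french press", "traffic": 2600},
    {"domain": "competitor-a.example", "topic": "grind size chart", "traffic": 1800},
]

# Topics your own site already covers (also hand-labelled).
own_topics = {"grind size chart"}


def flag_no_equivalent(pages: list[dict], covered: set[str]) -> list[dict]:
    """Return competitor pages with no matching topic on your site, highest traffic first."""
    gaps = [
        dict(page, status="No Equivalent")
        for page in pages
        if page["topic"] not in covered
    ]
    return sorted(gaps, key=lambda page: page["traffic"], reverse=True)


for gap in flag_no_equivalent(competitor_top_pages, own_topics):
    print(gap["topic"], gap["traffic"], gap["status"])
```

Exact string matching on topic labels is deliberately simple here; in practice the labels need to be applied consistently for the comparison to mean anything.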

Phase 6: Competitive Gap Analysis with Keyword Gap Tool

Step 20 — Open Keyword Gap and enter your domain plus 2–4 competitor domains. This is one of the most actionable research tools in the Semrush suite because it does not just show you competitors’ keywords — it specifically identifies the delta between their visibility and yours.

Step 21 — Filter by “Missing” keywords. These are keywords where all of your listed competitors rank, but you have zero organic presence. This is your highest-priority list because demand is proven (multiple competitors rank) and your gap is absolute (you have no foothold whatsoever).

Step 22 — Filter by “Untapped” keywords. At least one competitor ranks for these, but you do not. They carry lower urgency than Missing keywords but still reflect validated demand. These are often faster wins because competition is thinner: not every competitor has claimed this territory yet.
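The two filter definitions in Steps 21 and 22 can be expressed as a short classification rule. The sketch below works on invented ranking data and a hypothetical `classify_gaps` function; it mirrors the definitions as described in this tutorial, not Semrush's internal logic. As a design choice, a keyword that every competitor ranks for is labelled Missing only, even though it also satisfies the "at least one competitor" condition.

```python
def classify_gaps(
    rankings: dict[str, set[str]], you: str, competitors: list[str]
) -> dict[str, str]:
    """Label each keyword you do not rank for as Missing or Untapped."""
    labels = {}
    for keyword, ranking_domains in rankings.items():
        if you in ranking_domains:
            continue  # you already rank, so this is not a gap
        ranked = [c for c in competitors if c in ranking_domains]
        if ranked and len(ranked) == len(competitors):
            labels[keyword] = "Missing"   # every listed competitor ranks
        elif ranked:
            labels[keyword] = "Untapped"  # at least one, but not all, rank
    return labels


# Invented ranking data: keyword -> set of domains that rank for it.
rankings = {
    "how to make pour over coffee": {"a.example", "b.example", "c.example"},
    "pour over kettle temperature": {"b.example"},
    "pour over grind size": {"you.example", "a.example"},
    "cold brew ratio": set(),
}

labels = classify_gaps(
    rankings, you="you.example", competitors=["a.example", "b.example", "c.example"]
)
print(labels)
```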

Step 23 — Cross-reference with your Phase 1 keyword list. Any keyword that appears in both Keyword Magic Tool results AND shows up as Missing or Untapped in Keyword Gap jumps to the top of your editorial priority queue. It is validated from two independent angles: it has search volume, and competitors are actively capturing that traffic while you are not.
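The cross-reference in Step 23 amounts to intersecting two keyword lists and ordering the overlap. Here is a minimal sketch under the assumption that both lists are available as plain Python data; the sample keywords and the `priority_queue` helper are illustrative only.

```python
def normalize(keyword: str) -> str:
    """Lowercase and collapse whitespace so the two lists compare cleanly."""
    return " ".join(keyword.lower().split())


def priority_queue(phase1: list[str], gap_labels: dict[str, str]) -> list[tuple[str, str]]:
    """Keywords present in both sources, Missing before Untapped."""
    order = {"Missing": 0, "Untapped": 1}
    gaps = {normalize(k): label for k, label in gap_labels.items()}
    hits = [
        (normalize(kw), gaps[normalize(kw)])
        for kw in phase1
        if normalize(kw) in gaps
    ]
    return sorted(hits, key=lambda hit: order[hit[1]])


# Invented sample data standing in for the Phase 1 list and Keyword Gap results.
phase1_keywords = [
    "pour over vs french press",
    "How to make  pour over coffee",
    "what grind size for pour over",
]
gap_labels = {
    "how to make pour over coffee": "Missing",
    "pour over kettle": "Untapped",
    "pour over vs french press": "Untapped",
}

for keyword, label in priority_queue(phase1_keywords, gap_labels):
    print(label, "|", keyword)
```

Normalizing before comparing matters because manual copy-paste between tools tends to introduce stray capitals and spaces.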

Phase 7: AI Exposure Audit with AI Visibility Overview

The final phase is the one most content teams are not running yet — and the competitive advantage window for early adopters is real.

Step 24 — Open AI Visibility Toolkit and run the Visibility Overview. This produces a 0–100 benchmark score for how often your brand is mentioned in LLM responses compared to competitors for your target topic area. Per the NotebookLM research report, this tool tracks brand mentions, sentiment, and Share of Voice specifically across LLMs like ChatGPT and Gemini.

Step 25 — Navigate to Topic Opportunities. This section shows you specific prompts being entered into AI engines where competitors appear in the AI’s response but your brand does not. These are not hypothetical gaps — they are documented instances of competitor visibility in real AI responses to real queries.

Step 26 — For each gap prompt, audit the competitor content being cited. The NotebookLM research report documents that AI engines cite content based on structured data (schema markup), content freshness, and “keyword co-occurrence” — where a brand is frequently mentioned near relevant topical keywords in content that LLMs have ingested. The content gap is often a missing FAQ page, an unoptimized comparison guide, or a lack of proper Schema markup rather than a fundamental content quality deficit.

Step 27 — Tag AI-visibility gap pieces and plan technical treatment. Add these to your content list with an “AIO” tag. These pieces require specific technical execution: FAQPage, HowTo, and Article Schema markup; question-framing in H2/H3 headers; and 40–60 word direct answers immediately following each question header. Per the NotebookLM research report, this content modularization structure increases the likelihood of being cited in AI Overviews and featured snippets — which often draw from the same content.
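For the FAQPage part of that technical treatment, the markup can be generated from your question headers and direct answers. The sketch below builds schema.org `FAQPage` JSON-LD with Python's standard `json` module; the `faq_jsonld` helper and the placeholder copy are assumptions for illustration, and the output should be validated with a structured-data testing tool before deployment.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder copy; replace with your own question headers and direct answers.
pairs = [
    (
        "Is pour over coffee worth the effort?",
        "Placeholder answer of 40 to 60 words goes here.",
    ),
]

markup = faq_jsonld(pairs)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Keeping the question text identical to the on-page header helps the markup and the visible content stay in sync.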

Expected Outcomes After All Seven Phases

After completing the full workflow, your spreadsheet should contain:

- 20–30 blog/long-form content targets, tagged by cluster, priority level, and keyword data

- 5–10 social and email content angles, tagged by channel

- 3–5 “skyscraper” targets validated by competitor traffic and backlink data from Phase 5

- 5–10 AI visibility gaps with specific LLM prompt data from Phase 7

- A cluster map showing pillar pages, supporting subpages, and internal link architecture

- A justification log for every single idea — no piece on the list lacks a demand signal behind it

Real-World Use Cases

Use Case 1: Boutique E-Commerce Brand Building Organic Traffic From Zero

Scenario: A specialty coffee equipment retailer has a Shopify store with 15 pages, a domain authority of 12, and no existing content strategy. They need to build organic traffic without a dedicated content team.

Implementation: Begin with Keyword Gap comparing against three to four established competitors in the space. Filter the “Missing” keywords list for those with KD% under 35 — these are achievable ranking targets at DA 12. Use Topic Research to organize the resulting keywords into two to three content clusters, each anchored by a pillar page (e.g., “Complete Pour Over Coffee Guide,” “French Press Brewing Guide”). Use Reddit analysis via Organic Research to write H2 headings that mirror how beginners actually talk about these topics rather than how keyword tools label them. Assign a production schedule of two supporting posts per pillar page per month.

Expected Outcome: Within six to nine months, the pillar pages establish topical authority in their clusters, and supporting posts begin ranking for long-tail variations of the cluster keywords. Per the NotebookLM research report, organizing content into Topic Clusters via the Keyword Strategy Builder is the documented approach for building topical authority within the Semrush ecosystem.

Use Case 2: B2B SaaS Company Targeting AI Search Visibility

Scenario: A project management SaaS company wants to appear in ChatGPT and Perplexity responses when users ask evaluation-intent questions like “what’s the best project management tool for remote teams?” The company ranks on page three for the keyword in traditional search but is absent from AI responses entirely.

Implementation: Run the AI Visibility Overview to surface specific prompts where competitors (Asana, Monday.com, ClickUp) appear but the brand does not. For each gap prompt, audit what the competitor has on that topic — comparison pages, integration guides, case studies with measurable outcomes. Build equivalent content with proper Schema markup (FAQPage for comparison questions, HowTo for setup guides, Article for case studies). Pair this with a targeted digital PR campaign to earn mentions from the niche blogs and review sites that currently cite competitors but not this brand — these publications are likely in LLM training data.

Expected Outcome: Per the NotebookLM research report, AI citation is influenced by “keyword co-occurrence” — frequent brand mentions near relevant keywords in content ingested by LLMs. A sustained three-to-six month effort combining content production with digital PR outreach should produce measurable improvement in AI Visibility Score.

Use Case 3: Content Agency Scaling Research Across Multiple Client Accounts

Scenario: A digital marketing agency manages content strategy for twelve B2C clients across different verticals. The current research process takes a full day per client per quarter, which is unsustainable at scale.

Implementation: Templatize a condensed version of the seven-phase Semrush workflow into a repeatable SOP with assigned time blocks. For each client, the research flow uses Keyword Magic Tool (30 min) → Keyword Gap (20 min) → AI Visibility Overview (20 min) → Reddit analysis (30 min) → documentation (20 min), which totals two hours. The output is a priority-ranked list of 10–15 content ideas per month, each tagged with the specific demand signal that justified its inclusion. Per the NotebookLM research report, the 80/20 rule applies to production: AI tools like ContentShake AI handle 80% of drafting labor, while human editors contribute the final 20% (firsthand examples, brand voice calibration, and fact-checking).
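A quick way to sanity-check the SOP's time budget is to total the blocks and multiply by the client count. This sketch uses only the figures from the scenario and implementation above; `SOP_BLOCKS` and `CLIENTS` are illustrative names.

```python
# Time blocks from the SOP described above (minutes per client).
SOP_BLOCKS = [
    ("Keyword Magic Tool", 30),
    ("Keyword Gap", 20),
    ("AI Visibility Overview", 20),
    ("Reddit analysis", 30),
    ("Documentation", 20),
]
CLIENTS = 12  # number of client accounts in the scenario

minutes_per_client = sum(minutes for _, minutes in SOP_BLOCKS)
hours_per_cycle = minutes_per_client * CLIENTS / 60

print(minutes_per_client)  # 120
print(hours_per_cycle)     # 24.0
```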

Expected Outcome: Research time per client drops from a full day to about two hours. Every content idea arrives to the client with built-in justification data (search volume, competitor gap, AI exposure), which compresses stakeholder approval cycles and reduces scope disagreements.

Use Case 4: Media Publisher Building a Weekly Newsletter Content Calendar

Scenario: A specialty media brand publishes a weekly newsletter on coffee culture to 45,000 subscribers. The editor needs four to six content ideas per week that balance timeliness, evergreen depth, and social shareability — and currently spends three hours per week in editorial meetings that produce inconsistent results.

Implementation: Use Social Content AI’s trending news feed for time-sensitive angles (new product launches, viral brewing techniques, industry coverage). Use Topic Research’s Mind Map to plan evergreen deep-dives for the main feature slot each week. Use Reddit analysis via Organic Research to identify high-engagement community threads to summarize, contextualize, or debate in a weekly “What the Community Is Saying” section. Each idea gets tagged “timely” or “evergreen” and assigned a format (newsletter feature, social teaser, YouTube script extension).

Expected Outcome: A documented, repeatable weekly content calendar process that takes 90 minutes instead of a three-hour meeting. Content ideas are grounded in actual audience behavior and platform data rather than editorial intuition, reducing the likelihood of publishing pieces that miss audience interest.

Common Pitfalls

Pitfall 1: Treating High Search Volume as the Only Filter

High-volume keywords often carry high KD%, which means they are realistic targets only for high-authority domains. Building a content calendar full of 10,000-searches-per-month keywords your domain cannot rank for wastes production resources on pieces that will not generate traffic for 12–18 months, if ever. Fix: Always pair volume with Keyword Difficulty. Use Personal Keyword Difficulty if your domain is connected. Per the Semrush guide, filtering for realistic KD% based on domain strength is explicitly built into the Keyword Magic Tool workflow — use it.

Pitfall 2: Skipping the Reddit Research Phase

Most content teams skip this step because it feels manual and qualitative compared to the structured data in other Semrush tools. That is exactly why it is valuable: your competitors are likely skipping it too. The natural-language angles, audience objections, and beginner confusion points surfaced from actual Reddit threads produce content headings that resonate in ways keyword-abstracted titles cannot. Do not skip it.

Pitfall 3: Publishing Without Cluster Architecture

Publishing a single piece targeting a keyword, with no supporting content cluster around it, leaves topical authority on the table and limits how that piece can internally link to support the rest of your site. The NotebookLM research report documents the Keyword Strategy Builder’s approach of designating pillar pages and supporting subpages. A standalone post without cluster context ranks harder, accumulates links less naturally, and does less structural work for your domain than the same post placed inside a properly mapped cluster.

Pitfall 4: Treating AI Visibility as a Future Problem

As noted in the NotebookLM research report, “success in traditional SEO doesn’t guarantee visibility in AI search results.” These are parallel tracks, not sequential ones. Waiting until your traditional SEO is mature before running AI Visibility audits means ceding ground to competitors who are building AI citation equity right now. Start the AI Visibility Overview process immediately, even at a monthly cadence. The data it surfaces informs content angles that serve both traditional and AI search simultaneously.

Pitfall 5: Running the Research Once and Not Refreshing

A Keyword Gap analysis is a snapshot of competitive rankings at a single point in time. Competitors publish new content, AI models update their training data, and search behavior shifts after industry events and product launches. Run Keyword Gap and AI Visibility audits quarterly at minimum. Re-run Keyword Magic Tool after any major development in your category that might shift search intent or introduce new question types.

Expert Tips

Tip 1: Use Keyword Co-occurrence Intentionally to Build AI Citation Equity

The NotebookLM research report identifies “keyword co-occurrence” — your brand being mentioned near relevant topical keywords in content that LLMs have ingested — as a documented AI citation factor. This means your content strategy should deliberately create contexts where your brand name appears naturally alongside core category keywords. Comparison pages, best-of roundups that include your brand, and press releases distributed to indexable publications all contribute to this co-occurrence signal in ways that are invisible to traditional link-counting metrics but material for AI visibility.

Tip 2: Structure Every Major H2/H3 as a Direct Question, Then Answer in 40–60 Words

The NotebookLM research report specifically documents this as the content modularization strategy for AI citation: phrase headers as questions, follow immediately with a concise 40–60 word answer, then expand with supporting detail. This structure serves both featured snippets and Google’s AI Overviews, which frequently draw from the same content. Writing it this way costs nothing extra in production and consistently outperforms unstructured prose for both formats.
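This structure is easy to lint before publishing. The sketch below checks that a header ends in a question mark and that the answer beneath it falls in the 40–60 word range; `check_section` is a hypothetical helper, word counting is a naive whitespace split, and the sample answer is placeholder copy.

```python
def check_section(header: str, answer: str, low: int = 40, high: int = 60) -> list[str]:
    """Return a list of problems; an empty list means the section passes."""
    problems = []
    if not header.strip().endswith("?"):
        problems.append("header is not phrased as a question")
    word_count = len(answer.split())
    if not low <= word_count <= high:
        problems.append(f"answer is {word_count} words, expected {low}-{high}")
    return problems


# Placeholder copy for illustration only.
sample_header = "Is pour over coffee worth the effort?"
sample_answer = (
    "Pour over coffee is worth the effort if you enjoy a cleaner, more aromatic cup "
    "and do not mind a slower, hands-on brew. It gives you control over grind size, "
    "water temperature, and pour rate, which drip machines handle for you. If speed "
    "matters more than flavor nuance, a drip machine is the better fit."
)

print(check_section(sample_header, sample_answer))
print(check_section("Benefits of Pour Over Coffee", "Too short."))
```

Running a check like this across a draft's H2/H3 sections catches answers that have drifted long during editing.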

Tip 3: Use Backlink Analytics to Build Your Digital PR Target List

The NotebookLM research report explicitly calls out using Competitor Research to identify “publishers (niche blogs, review sites) that cite your competitors but not your brand.” This is not just a link-building tactic. These publishers are often in LLM training data, which means earning citations from them contributes to both traditional domain authority and AI visibility simultaneously. Build this publisher list as part of your quarterly research cycle and run it through a prioritized outreach workflow.

Tip 4: Tag Every Content Idea with Its Discovery Source Before Handing Off to Writers

When content ideas land in a CMS or editorial calendar without documented provenance, the justification for their priority is invisible to the writers producing them and the stakeholders approving them. Tag every single idea with the discovery tool (Keyword Gap, AI Visibility, Reddit, Top Pages) and the specific signal (e.g., “Missing keyword — 3 competitors rank — KD 28 — 1,600 monthly searches”). Writers who understand why a piece matters produce better-aligned content and make fewer scope decisions that undermine the original research intent.
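One way to make that provenance hard to lose is to store it as structured fields and generate the signal string from them. The `IdeaTag` class below is a hypothetical sketch, with field names chosen for this example rather than taken from any CMS or Semrush export.

```python
from dataclasses import dataclass


@dataclass
class IdeaTag:
    """Provenance for one content idea (field names are illustrative)."""
    title: str
    source_tool: str
    gap_label: str
    competitors_ranking: int
    kd: int
    monthly_searches: int

    def signal(self) -> str:
        """Human-readable justification line for the editorial calendar."""
        return (
            f"{self.gap_label} keyword | {self.competitors_ranking} competitors rank | "
            f"KD {self.kd} | {self.monthly_searches:,} monthly searches"
        )


idea = IdeaTag(
    title="How to Make Pour Over Coffee",
    source_tool="Keyword Gap",
    gap_label="Missing",
    competitors_ranking=3,
    kd=28,
    monthly_searches=1600,
)

print(f"[{idea.source_tool}] {idea.title}: {idea.signal()}")
```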

Tip 5: Run a Site Audit Technical AI Health Check Before Any AI-Optimized Content Push

The NotebookLM research report documents the Site Audit tool’s ability to flag issues like missing llms.txt files and outdated “last-modified” headers that reduce AI crawler prioritization. Before executing a content push aimed at improving AI Visibility Score, verify that your site’s technical AI-readiness baseline is solid — robots.txt directives are not blocking AI-specific user agents, semantic HTML tags (<article>, <header>, <section>) are correctly implemented, and Schema markup is deployed on existing key pages. Content optimization built on a flawed technical foundation produces slower results than it should.
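The robots.txt part of that baseline can be spot-checked outside Semrush. The sketch below uses Python’s standard urllib.robotparser to list which AI crawlers a given robots.txt blocks. The user-agent names are an illustrative, non-exhaustive assumption; confirm the current names in each vendor’s crawler documentation. This is a quick sanity check, not a replacement for Site Audit.

```python
from urllib.robotparser import RobotFileParser

# Illustrative list only; verify current crawler names with each vendor.
AI_USER_AGENTS = ["GPTBot", "PerplexityBot", "ClaudeBot"]


def blocked_ai_agents(robots_txt, url="https://example.com/", agents=AI_USER_AGENTS):
    """Return the AI user agents that the given robots.txt text blocks for url."""
    parser = RobotFileParser()
    # parse() accepts the file's lines, so no network request is needed here.
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
blocked = blocked_ai_agents(sample)
```

To check a live site, fetch its robots.txt yourself and pass the text in; keeping the function offline makes it easy to test against saved copies before and after a change.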

FAQ

Q: Do I need all seven tools, or can I start with fewer?

You can absolutely start with three: Keyword Magic Tool (keyword discovery), Keyword Gap (gap analysis), and Topic Research (thematic mapping). These cover the research foundation for most blog content calendars. Add Reddit analysis via Organic Research when you need stronger content differentiation. Layer in AI Visibility Overview once your team is ready to optimize for LLM citations. Social Content AI adds the most value for brands with active social channels or email newsletters. You do not need all seven on day one — sequence them as your team’s research maturity develops.

Q: Which Semrush plan do I need to access AI Visibility features?

Per the NotebookLM research report, the AI Visibility Toolkit, which tracks brand mentions and Share of Voice across LLMs like ChatGPT and Gemini, is part of Semrush’s Guru and Business tier offerings. The Pro plan covers the traditional keyword and competitor research tools (Keyword Magic Tool, Topic Research, Keyword Gap, Organic Research, Backlink Analytics). If AI search visibility is a current business priority, the Guru tier is the relevant entry point.

Q: How often should I run this full seven-phase workflow?

For most teams, run the complete workflow quarterly for strategic content planning, with partial refreshes (Keyword Gap + AI Visibility Overview) monthly. If your category moves fast — AI tools, cryptocurrency, health technology, financial services — run AI Visibility checks monthly at minimum. LLM training data and citation patterns shift more frequently than traditional SERP rankings, and competitive positions can change meaningfully within a single quarter.

Q: How do I prioritize when the workflow produces 30+ content ideas but production capacity is limited?

Use a two-axis priority matrix: demand signal strength (search volume, competitor traffic, AI visibility gap) vs. production effort. High-demand, low-effort pieces (FAQ additions, beginner guides in established clusters, short supporting posts) go into the current month. High-demand, high-effort pieces (pillar pages, original research, comprehensive comparisons) go into the quarterly roadmap with dedicated resource planning. Low-demand pieces, regardless of production cost, are deprioritized unless they serve a specific strategic purpose like AI citation gap coverage.
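The matrix can be written down as a small decision function. This is a minimal Python sketch under stated assumptions: demand and effort are reduced to high/low booleans that your team scores itself, and the label returned for a low-demand strategic exception is an invented placeholder, since the rule above only says such pieces are not deprioritized.

```python
def schedule(demand_high, effort_high, strategic=False):
    """Map one idea onto the two-axis priority matrix.

    demand_high / effort_high: booleans your team assigns from its own scoring.
    strategic: True if the piece serves a purpose such as AI citation gap coverage.
    """
    if not demand_high:
        # Low demand is deprioritized regardless of effort, unless strategic.
        return "strategic exception" if strategic else "deprioritized"
    if effort_high:
        return "quarterly roadmap"
    return "current month"


plan = {
    "FAQ addition": schedule(demand_high=True, effort_high=False),
    "Pillar page": schedule(demand_high=True, effort_high=True),
    "Niche aside": schedule(demand_high=False, effort_high=False),
}
```

Where the high/low thresholds sit (for example, a minimum search volume or a maximum number of production hours) is a team decision that this sketch deliberately leaves out.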

Q: Does this workflow apply to non-English markets?

Keyword Magic Tool and Topic Research support 26+ languages and all major international markets, making Phases 1 and 2 fully applicable globally. Reddit analysis (Phase 3) is strongest for English-language research; for other markets, use Organic Research to identify the equivalent local community platforms (regional forums, Quora equivalents) ranking in that country’s Google results. AI Visibility coverage for non-English LLM queries is still maturing as of early 2026, but the Visibility Overview tool does cover major markets. Check your specific region’s coverage in the Semrush AI Visibility documentation for current language support.

Bottom Line

Semrush’s seven-tool content research workflow gives practitioners a defensible, data-backed path from a single seed keyword to a full multi-channel content pipeline, and that pipeline now needs to account for both traditional Google rankings and AI-generated response visibility. The NotebookLM research report is clear that traditional SEO performance and AI search visibility are not the same metric and do not automatically correlate, which means the AI Visibility Overview phase of this workflow is not optional for brands competing in any category where AI engines are fielding evaluation-intent queries. Start with Keyword Magic Tool and Keyword Gap if you are new to the Semrush ecosystem, layer in AI Visibility once your research foundation is established, and build toward the complete seven-phase workflow as production capacity scales. The teams running this process quarterly are not just producing more content: they are producing content where every piece can be justified, every gap is documented, and every production hour is allocated against a real demand signal.
